DeepSeek V4 has the rare smell of a real engineering release, not a keynote pretending to be one. The headline number is silly in the best possible way, 1.6 trillion total parameters for Pro. The more useful number is 49 billion active parameters, because that is what you actually pay for on each token. The fun part is that DeepSeek V4 is not only bigger. It is trying to make long-context reasoning less like towing a cruise ship through molasses. One million tokens, sparse compression, cheaper inference, open weights, and enough benchmark swagger to make the closed-model crowd check the locks twice.

This review cuts through the whale noises. We’ll look at the architecture, the benchmark table, DeepSeek API pricing, the Nvidia DeepSeek hardware angle, DeepSeek local requirements, and what the release means for builders who want frontier-grade intelligence without renting their entire soul to a proprietary endpoint.

Table of Contents

1. DeepSeek V4 Snapshot: The Numbers That Matter

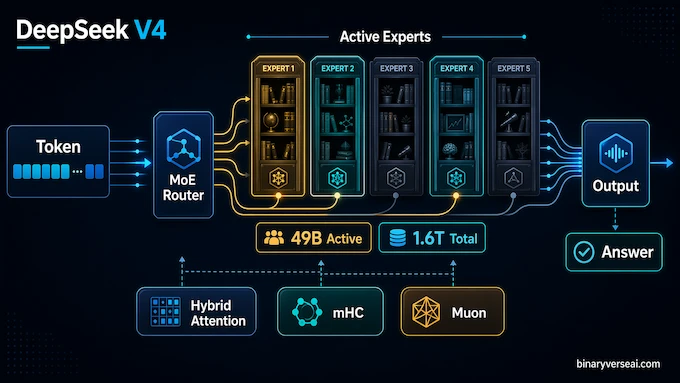

The technical report introduces two Mixture-of-Experts models: DeepSeek-V4-Pro with 1.6T total parameters and 49B activated, and DeepSeek-V4-Flash with 284B total parameters and 13B activated. Both support a one-million-token context window, and the model card lists mixed FP4 and FP8 precision for the instruct checkpoints. (Hugging Face)

| Model | Total Parameters | Activated Parameters | Context Window | Practical Role |

|---|---|---|---|---|

| DeepSeek-V4-Flash | 284B | 13B | 1M Tokens | Cheap, fast, high-volume workloads |

| DeepSeek-V4-Pro | 1.6T | 49B | 1M Tokens | Hard reasoning, coding, agentic work |

| DeepSeek-V4-Pro-Max | 1.6T | 49B | 1M Tokens | Maximum reasoning effort mode |

| DeepSeek-V4-Flash-Max | 284B | 13B | 1M Tokens | Smaller model with extra thinking budget |

The second table is the one most people came for. The available comparison row uses Claude Opus 4.6 Max, GPT-5.4 xHigh, Gemini-3.1-Pro High, K2.6 Thinking, and GLM-5.1 Thinking. Readers searching for DeepSeek V4 vs Claude Opus 4.7 are really asking whether DeepSeek has entered the frontier room. The answer is yes, with a few caveats.

| Benchmark | Claude Opus 4.6 Max | GPT-5.4 xHigh | Gemini-3.1-Pro High | DeepSeek-V4-Pro-Max |

|---|---|---|---|---|

| MMLU-Pro | 89.1 | 87.5 | 91.0 | 87.5 |

| SimpleQA-Verified | 46.2 | 45.3 | 75.6 | 57.9 |

| GPQA Diamond | 91.3 | 93.0 | 94.3 | 90.1 |

| HLE | 40.0 | 39.8 | 44.4 | 37.7 |

| LiveCodeBench | 88.8 | Not Listed | 91.7 | 93.5 |

| Codeforces Rating | Not Listed | 3168 | 3052 | 3206 |

| Terminal Bench 2.0 | 65.4 | 75.1 | 68.5 | 67.9 |

| SWE Verified | 80.8 | Not Listed | 80.6 | 80.6 |

| Toolathlon | 47.2 | 54.6 | 48.8 | 51.8 |

That table says something precise. DeepSeek V4 is not sweeping every frontier benchmark, and anyone pretending otherwise is selling confetti. It does look genuinely elite on coding, long context, and open-weight reasoning. A 3206 Codeforces rating and 80.6 on SWE Verified are not “nice for an open model” numbers. They are “please stop breaking my pricing model” numbers.

2. DeepSeek V4 Architecture: Why The Whale Does Not Move Like One

The trick is MoE. A dense 1.6T model would be hilariously expensive to run, the kind of thing that turns a GPU cluster into a space heater with invoices. DeepSeekMoE routes each token through only part of the network, so Pro has 1.6T parameters available but activates 49B at inference. Flash does the same at a smaller scale, 284B total and 13B active.

This is not just parameter accounting. MoE changes the economic shape of the model. You get a huge library of specialized capacity, but each token checks out only the books it needs. That is why a trillion-parameter model can be discussed as a product rather than a national infrastructure project.

The report also keeps Multi-Token Prediction from the V3 line, then adds three upgrades: hybrid attention, Manifold-Constrained Hyper-Connections, and the Muon optimizer. The first attacks long-context cost. The second stabilizes deep residual pathways. The third helps training converge faster and more cleanly. Less glamorous than a benchmark tweet, but this is where the bodies are buried in modern model building.

The post-training recipe is also revealing. The team did not try to make one model learn every behavior in one undifferentiated soup. They trained domain specialists for math, coding, agents, and instruction following, then consolidated those skills through on-policy distillation. That is a very modern pattern: specialize first, compress the useful behavior back into one general model later. It is less romantic than “scale fixes everything,” but it is closer to how high-performance systems are actually built. You build sharp tools, then teach the toolbox to remember where each one belongs.

Manifold-Constrained Hyper-Connections sound like a phrase invented to humble podcast hosts, but the intuition is clean. Deep networks pass signals through many layers, and residual connections are the plumbing that keeps those signals from degrading. mHC makes that plumbing more stable by constraining how residual information mixes across expanded streams. In plain English, it gives the model more room to route information while trying not to let the routing explode numerically. That is not a flashy consumer feature. It is the kind of thing you add when you are scaling a monster and would prefer it not to eat the lab.

3. The One-Million Token Context Trick: Compression Before Brute Force

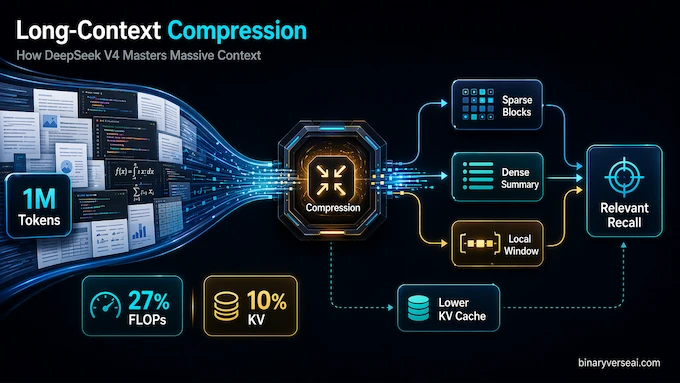

A million tokens sounds like a parlor trick until you ask what it costs. Vanilla attention gets ugly as sequences grow, because every token wants to gossip with every other token. At small sizes, this is charming. At a million tokens, it is a neighborhood meeting from hell.

DeepSeek’s answer is hybrid attention. Compressed Sparse Attention shrinks KV entries and then selects only the most relevant compressed blocks. Heavily Compressed Attention goes further, compressing larger spans but keeping dense attention over those compressed entries. Add a sliding local window so the model does not lose fine detail nearby, and you get a practical compromise: broad memory, focused lookup, local texture.

The payoff is large. In a one-million-token setting, Pro uses only 27% of the single-token inference FLOPs and 10% of the KV cache compared with DeepSeek-V3.2. Flash pushes that down to 10% of FLOPs and 7% of KV cache. DeepSeek V4 is basically saying, “What if long context stopped being a luxury yacht?”

4. DeepSeek V4 Vs Claude Opus 4.7: The Benchmark Reality

The Claude comparison needs a clean read. On knowledge-heavy tasks, Gemini still looks scary. On GPQA Diamond, DeepSeek-V4-Pro-Max scores 90.1, behind Claude Opus 4.6 Max at 91.3, GPT-5.4 xHigh at 93.0, and Gemini-3.1-Pro High at 94.3. On HLE, it also trails the best closed models.

Then coding enters the room wearing muddy boots. DeepSeek-V4-Pro-Max scores 93.5 on LiveCodeBench, 3206 on Codeforces, and 80.6 on SWE Verified. That puts it right beside the strongest closed systems in practical software work, which is the benchmark zone developers actually feel in their wrists.

The philosophical difference matters. A closed model can be brilliant and still feel like a black-box appliance. An open-weight model that lands near it on coding and agentic tasks becomes infrastructure. You can host it, route it, distill from it, fine-tune around it, and study its failure modes without begging a dashboard for permission.

5. DeepSeek API Pricing: Cheap Flash, Serious Pro

DeepSeek API pricing is no longer the cartoon-cheap story people remember from earlier releases. The official pricing page lists Flash at $0.14 per million cache-miss input tokens, $0.028 for cache-hit input tokens, and $0.28 per million output tokens. Pro is much heavier: $1.74 per million cache-miss input tokens, $0.145 for cache-hit input tokens, and $3.48 per million output tokens. (DeepSeek API Docs)

That changes how teams should use it. Flash is the default workhorse for summarization, classification, extraction, drafts, search augmentation, and the thousand little calls that quietly bankrupt sloppy AI products. Pro is for the sharp end: complex coding, hard reasoning, multi-step agents, long documents where a bad answer costs more than the tokens.

The nice pattern is routing. Send most traffic to Flash. Escalate to Pro when the task has high uncertainty, high value, or high blast radius. This is how serious teams will use DeepSeek V4: not as one model, but as a small operating system for intelligence.

6. Nvidia DeepSeek And The Huawei Hardware Turn

The Nvidia DeepSeek story used to be simple: frontier AI needs Nvidia, export controls restrict Nvidia, Chinese labs suffer. V4 makes that story less tidy. Reuters reported that Huawei’s Ascend supernode will support DeepSeek’s V4 model, and earlier reporting said V4 would run on Huawei’s latest AI chips. (Reuters)

That does not mean Nvidia is suddenly irrelevant. CUDA is not just software. It is habit, tooling, libraries, hiring pipelines, debugging lore, and a decade of engineers learning where the dragons live. You do not replace that with a press release and a logo swap.

The important shift is optionality. If DeepSeek can train and serve this class of model on domestic accelerators, China’s AI stack gets less brittle. For buyers, it means pricing pressure. For Nvidia, it means the moat is still deep, but someone has started building a bridge with a suspicious amount of concrete.

7. DeepSeek Local Requirements: Can You Run It At Home?

DeepSeek local requirements are where optimism goes to meet arithmetic. Pro has 1.6T parameters. Even at 4 bits per routed expert weight, raw storage lands around 800GB before you account for mixed precision, non-expert weights, runtime overhead, KV cache, and the fact that your machine also needs to breathe. In practice, think 800GB to 1TB of VRAM or unified memory for a sane Pro setup.

Flash is less absurd. At 284B total parameters, rough 4-bit storage starts near 142GB, and a practical deployment likely wants 160GB to 200GB once overhead is included. That puts it outside normal gaming rigs, but inside the fantasy zone for high-end Mac Studio configurations, multi-GPU workstations, and the beloved basement hydra made from used 3090s.

Could clever quantization bring it lower? Probably. Will your single 24GB card run Pro beautifully while you browse YouTube? No. The LocalLLaMA crowd will try anyway, because that is both the charm and the illness.

8. DeepSeek V4 Release Date: Preview Now, Final Shape Later

The DeepSeek V4 release date question has a practical answer: the preview is here. DeepSeek’s own site says the preview is available on web, app, and API, while Reuters describes it as a preview launch with no final release date announced. (DeepSeek)

That matters because preview releases are where models reveal their real personality. Benchmarks show ceiling. Daily use shows temperament. Does it follow messy instructions? Does it overthink simple tasks? Does the 1M context window stay coherent across actual documents, or does it merely pass synthetic needle tests with a heroic expression?

Treat this phase as a field test. Builders should evaluate it against their own workloads, not just leaderboard candy. Feed it your worst codebase, your ugliest PDF pile, your longest customer thread, and your most annoying migration plan. Models that survive real workflows deserve attention.

9. DeepSeek V4 Reasoning Modes: Speed, Thought, And Max Effort

The release defines three reasoning modes: Non-think, Think High, and Think Max. Non-think is for fast, intuitive answers. Think High is slower and more deliberate. Think Max is the “bring snacks” mode for tasks where you actually want the model to spend compute like it means it.

This is a healthy interface idea. Not every task deserves a courtroom drama. If the user asks for a regex, do not summon a council of philosophers. If the user asks for a distributed-system migration plan with data loss risk, please do not answer like a caffeinated autocomplete.

The deeper point is that test-time compute is becoming a user-facing control. Intelligence is no longer only what the model knows. It is how much search, reflection, and verification you buy at the moment of use.

10. Vision, Multimodality, And The Missing Pieces

Day one is mainly a text and code story. That is not a weakness, but it is a boundary. If your workflow depends on images, video, UI screenshots, diagrams, or document layouts, DeepSeek V4 is not yet the clean replacement for a polished multimodal enterprise stack.

The open question is not whether multimodality arrives. It almost certainly does. The question is whether it arrives with the same discipline shown in long-context efficiency. Adding vision is easy to announce and hard to make dependable. The graveyard of “multimodal demos that fail on real screenshots” is already crowded.

For now, judge this release on what it is: a serious open-weight language, code, reasoning, and long-context system. That is plenty. Not every whale needs to juggle.

11. The Final Verdict: The Open-Weight Bar Just Moved

DeepSeek V4 is not magic. It is not the death of closed models, Nvidia, API businesses, or common sense. It is something more useful: a strong, technically interesting, open-weight model family that makes frontier performance feel less gated.

Pro is the prestige model. Flash is the product model. The one-million-token context window is the architectural flex. The pricing is no longer pocket change, but it is still aggressive enough to force uncomfortable meetings elsewhere. The hardware story hints at a future where frontier AI supply chains split into competing ecosystems, each with its own chips, libraries, and economics.

The best reason to care is simple. When capable models become open, builders get leverage. Researchers get objects they can actually inspect. Startups get bargaining power. Developers get one more way to avoid waiting six months for a feature request to reach a roadmap.

So test it. Put it on real tasks. Compare Flash against your cheap calls and Pro against your hardest failures. Do not worship the whale. Make it work.

How To Run DeepSeek V4 Locally, And What Are The Hardware Requirements?

Running DeepSeek-V4-Pro locally requires data-center-level hardware, roughly 800GB to 1TB of VRAM or unified memory once weights, overhead, and cache are considered. DeepSeek-V4-Flash is more realistic, but still needs a high-end setup, usually around 160GB to 200GB of memory for practical local use.

What Is The DeepSeek V4 API Pricing Compared To V3?

DeepSeek-V4-Pro costs $1.74 per 1M cache-miss input tokens and $3.48 per 1M output tokens. DeepSeek-V4-Flash is much cheaper at $0.14 input and $0.28 output per 1M tokens. Compared with older V3 pricing, Pro is the premium tier, while Flash keeps the low-cost DeepSeek appeal.

Can I Run The Full DeepSeek Model Locally Without The Internet?

Yes, DeepSeek V4 model weights are available under an MIT License, so the model can be downloaded and run offline. The real limitation is not internet access. It is hardware. The full Pro model is far too large for normal consumer PCs, while Flash needs a serious workstation-class setup.

Does DeepSeek V4 Support Vision And Multimodality?

DeepSeek V4’s day-one release is focused on text, code, reasoning, tools, and long-context work. Do not position it as a full vision or multimodal model unless DeepSeek releases a specific multimodal checkpoint. For now, treat multimodality as a future possibility, not a confirmed launch feature.

How Does DeepSeek V4 Compare To Claude Opus 4.7 And GPT-5.4?

DeepSeek-V4-Pro-Max is one of the strongest open-weight models available, especially for coding, reasoning, and long-context tasks. Its reported SWE-bench Verified score of 80.6% and Codeforces rating above 3200 put it near frontier closed models, though Gemini and top Claude/GPT modes still lead in some knowledge and polished enterprise workflows.