An AI operating system sounds, at first, like the sort of phrase that gets shouted over synth music in a keynote and forgotten by lunch. Then you read Meta and KAUST’s Neural Computers paper and realize they mean it literally. Not “an assistant for your desktop.” Not “a smarter shell.” An AI operating system where computation, memory, and input/output stop living in neatly separated modules and get folded into one learned runtime state inside the model itself. That is a much stranger, much more ambitious claim.

Put differently, most of today’s AI is trying to learn how to drive the car. This paper asks whether the model could eventually be the car, engine, dashboard, wiring, and half the road too. That is why the AI operating system idea matters. It is not a new app category. It is a possible new computing substrate. The paper’s current prototypes are still early, still awkward, and in some places hilariously brittle. But they already manage short-horizon interface control and visual state alignment well enough to make the question feel real.

| What The Paper Claims | What It Actually Means | Why You Should Care |
|---|---|---|
| A model can act as the running computer | The system tries to internalize compute, memory, and I/O in one learned state | That is a different bet from agents using existing software |
| Early prototypes can model CLI and GUI behavior | Meta trained separate systems for terminals and desktop environments | The idea is not purely philosophical, there is working machinery behind it |
| Today’s gains are strongest in I/O alignment and short control loops | The model can often keep screen state coherent for brief sequences | Useful behavior is emerging, but it is still fragile |
| The long-term target is a Completely Neural Computer, or CNC | A mature version would need programmability, consistency, and durable reuse | This is not “Windows with a chatbot,” it is a new machine model |

1. Why This AI Operating System Idea Is Different From Agents

Here is the cleanest analogy. An agent is a driver. It sits on top of an existing operating system, clicks buttons, runs tools, reads files, and hopes the road signs stay where they were yesterday. An AI operating system is a different proposal entirely. It tries to absorb the execution substrate into the model itself.

That distinction matters because people keep flattening these categories. Agents use external runtimes. World models learn environment dynamics. Conventional computers execute explicit programs. Neural Computers, in this paper’s framing, try to collapse those roles into one learned runtime that updates state and renders the next interface step. The model is not just planning actions against a separate machine. The model is trying to become the machine.

That sounds dramatic because it is. But it also clarifies the ambition. If you are just building a better Copilot, you mostly care about tool calling, latency, and error recovery. If you are chasing an AI operating system, you care about a much deeper set of questions: where persistent state lives, how behavior stays stable, what counts as “installing” a capability, and how you prevent every interaction from becoming accidental self-modification.

The paper is useful because it does not hide behind abstractions. It picks a concrete target, screen-based interfaces, and asks a very grounded question: can early runtime primitives be learned directly from raw interface I/O, without privileged access to internal program state? That is refreshingly specific. No incense. No cosmic hand-waving. Just pixels, actions, and consequences.

2. What Neural Computers Actually Are

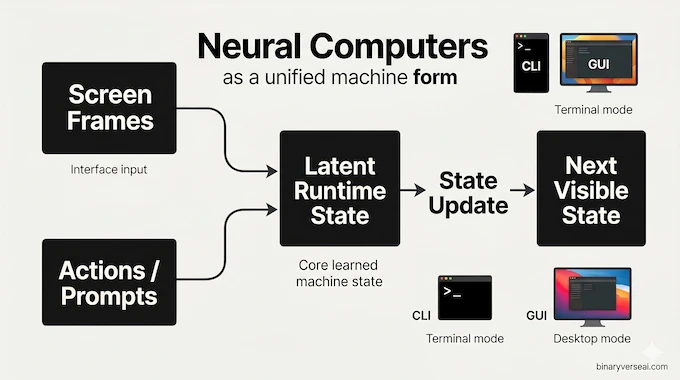

The paper’s term is Neural Computers, and the core move is simple to state even if it is hard to build. In a normal computer, compute, memory, and I/O are split across hardware, software, and system layers. In this proposal, they get unified inside a learned runtime state. The model observes the current interface, updates its hidden state, and predicts the next visible state.

In the current prototype, that means screen frames are the observable world. Actions or prompts are the conditioning stream. A latent state carries forward what the system “knows” about the current runtime. The model rolls forward from there. That makes the setup feel part world model, part interactive simulation, part systems research with a daredevil streak.
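To make that loop concrete, here is a deliberately tiny sketch in Python. Everything in it is a hypothetical stand-in: `RuntimeState`, `ToyRuntime`, and `rollout` are illustrative names, the "frames" are strings rather than pixels, and the transition is a hand-written rule where the paper uses a learned video backbone. It only shows the shape of the loop: take an action, update the carried state, render the next frame.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class RuntimeState:
    """Hypothetical latent state: whatever the model carries between steps."""
    typed: str = ""


class ToyRuntime:
    """Stand-in for a learned transition model (here, a hand-written rule)."""

    def step(self, state: RuntimeState, action: str) -> Tuple[RuntimeState, str]:
        # Update the carried state from the action, then render the next frame.
        if action == "<enter>":
            new_state = RuntimeState(typed="")
        else:
            new_state = RuntimeState(typed=state.typed + action)
        frame = "$ " + new_state.typed
        return new_state, frame


def rollout(model: ToyRuntime, actions: List[str]) -> List[str]:
    """Open-loop rollout: feed actions in, collect the predicted frames."""
    state = RuntimeState()
    frames = []
    for action in actions:
        state, frame = model.step(state, action)
        frames.append(frame)
    return frames


print(rollout(ToyRuntime(), ["l", "s", "<enter>"]))
```

The only thing that persists between steps is `state`. In a real Neural Computer that slot is a learned latent rather than a typed field, which is exactly why persistence and reliability become the hard questions.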

The paper studies two concrete versions. NCCLIGen handles terminal interaction. NCGUIWorld handles desktop interaction with synchronized mouse and keyboard traces. Both are open-loop prototypes trained from traces, not full live systems. Still, they show something important: if you train on enough interface behavior, a model can learn short-range runtime structure that looks surprisingly computer-like from the outside.

This is where the phrase AI operating system stops being a slogan and starts becoming architecture. The model is not executing Bash in the traditional sense. It is learning the visible logic of a Bash session well enough to roll out coherent future frames. Same for desktop behavior. Hover, click, menu transition, pointer motion. That may not sound like much until you notice how much of day-to-day computing is exactly that: a long chain of interface state transitions glued together by memory.

3. The Current Prototype Is Basically A Very Ambitious Video Model

Now for the awkward part. Yes, today’s AI operating system prototype is, in practice, heavily built on video-generation machinery. If your first reaction is, “Wait, is this just hallucinating a desktop from pixels?” the honest answer is, “For now, kind of.”

The CLI model is trained to roll out terminal frames from a prompt and an initial frame. The GUI model uses synchronized RGB frames plus action traces. The backbone comes from a strong video model, then gets augmented with interface-specific conditioning and action modules. In the paper’s own framing, this is the practical substrate available right now, not necessarily the final form of the idea.

But dismissing it as “just video” misses the interesting part. The point is not photorealism. The point is learned runtime continuity. Can the model keep terminal geometry stable? Can it maintain cursor placement? Can it preserve short-term interface context well enough that clicks produce plausible visual consequences? In controlled settings, the answer is increasingly yes.

That is why the CLI results are more than a gimmick. The model often stays aligned with terminal buffers, prompt wrapping, scrollback behavior, and short command chains. The GUI results show coherent pointer dynamics and short-horizon action responses. Not because the model has suddenly become a symbolic operating system in the classic sense, but because it has learned enough interface physics to imitate one for a while.

And that “for a while” matters. Progress in computing often begins with systems that are obviously incomplete but directionally undeniable. The first web browser was not the modern web. Early GPUs were not general-purpose accelerators. A prototype AI operating system does not need to replace Linux next year to be worth taking seriously. It only needs to show that some of the boundaries we assumed were fixed might actually be learnable.

4. The Determinism Problem Is Not A Nitpick

This is the part the critics are right to hammer. Computers are valuable because they are boring in exactly the right ways. When you type 10+15, the machine should not enter a period of spiritual exploration. It should return 25. Determinism is not an aesthetic preference. It is the foundation of trust.

That is why any AI operating system has to face the ugly question early: what happens when the runtime is probabilistic? The paper does not duck that. It openly says symbolic reliability, stable capability reuse, and runtime governance remain unresolved. Good. Pretending otherwise would make the whole exercise feel unserious.

A learned runtime can be impressive and still be unacceptable as infrastructure. Your operating system is not allowed to be “creative” about filesystems, process state, or arithmetic. That is the central tension here. Neural systems are good at smooth interpolation and pattern absorption. Computers are good at exactness, isolation, and repeatable contracts. An AI operating system has to somehow earn both skill sets without collapsing into a weird midpoint that is bad at everything important.

4.1 The 4% Math Failure And The 83% Fix

The paper’s arithmetic probe is the moment where the dream meets a brick wall. On held-out CLI math tasks, the NC prototype scored 4 percent. That is not “room for improvement.” That is “you do not yet have a trustworthy computer.” Then, with stronger reprompting and system-level conditioning, performance jumped to 83 percent.

That jump is fascinating, and a little dangerous to interpret.

The optimistic read is that conditioning interfaces matter a lot, and symbolic behavior may improve dramatically when the system is scaffolded correctly. The less flattering read is that the current AI operating system is not really doing math natively. It is being steered into rendering the right answer more reliably. The paper leans toward that second interpretation, and I think that is the honest call.

This does not make the result unimportant. It tells us where the leverage is. Right now, these systems look stronger as conditionable interface models than as native symbolic machines. That still matters. Plenty of real computing value comes from getting state tracking, execution context, and control surfaces right. But it also means we should stop confusing better prompting with solved computation. The scoreboard got better. The underlying ontology did not magically settle itself.
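One way to see why “renders the right answer” and “computes the right answer” are different claims is to look at how a probe like this could be scored. The sketch below is an assumption, not the paper’s evaluation harness: `exact_answer` and `exact_match_accuracy` are hypothetical helpers, and the sample pairs are made up for illustration. A deterministic reference computes the result, and the model’s rendered text either matches it exactly or it does not.

```python
import re
from typing import Iterable, Tuple


def exact_answer(expression: str) -> int:
    """Deterministic reference: evaluate 'a+b', 'a-b', or 'a*b' exactly."""
    match = re.fullmatch(r"\s*(\d+)\s*([+\-*])\s*(\d+)\s*", expression)
    if match is None:
        raise ValueError(f"unsupported expression: {expression!r}")
    left, op, right = int(match[1]), match[2], int(match[3])
    if op == "+":
        return left + right
    if op == "-":
        return left - right
    return left * right


def exact_match_accuracy(samples: Iterable[Tuple[str, str]]) -> float:
    """Fraction of (expression, rendered_text) pairs that match exactly."""
    pairs = list(samples)
    correct = sum(
        1 for expr, rendered in pairs
        if rendered.strip() == str(exact_answer(expr))
    )
    return correct / len(pairs)


# Made-up rendered outputs, NOT results from the paper.
toy_samples = [("10+15", "25"), ("7*6", "41"), ("9-4", "5"), ("3+3", "6")]
print(exact_match_accuracy(toy_samples))
```

The reference function is right every time by construction. The rendered column can only be scored against it, which is the gap the 4 percent and 83 percent figures are measuring.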

5. Differentiable Neural Computers Vs. The New AI Operating System Dream

The comparison to Differentiable Neural Computers is essential because it shows both continuity and rupture.

DeepMind’s DNC line kept a neural controller but paired it with explicit external memory machinery. The controller decided what to read and write. Specialized submodules tracked usage and temporal linkage. In other words, it was a neural system with a fairly legible memory architecture. The new Neural Computer vision pushes harder. Instead of “neural controller plus external memory,” it aims for runtime state itself as the computer.
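For a feel of what “explicit external memory machinery” means, here is a minimal sketch of content-based reading, one ingredient of the DNC design: compare a key against every memory row by cosine similarity, sharpen with a strength parameter, softmax into weights, and return the weighted sum of rows. It is plain Python with no learning, the function names are my own, and it leaves out the usage tracking and temporal links entirely.

```python
import math
from typing import List


def cosine(u: List[float], v: List[float]) -> float:
    """Cosine similarity with a small epsilon to avoid division by zero."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v + 1e-8)


def content_read(memory: List[List[float]], key: List[float],
                 strength: float) -> List[float]:
    """Soft lookup: softmax over key/row similarity, then a weighted sum."""
    scores = [strength * cosine(row, key) for row in memory]
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    width = len(memory[0])
    return [sum(w * row[j] for w, row in zip(weights, memory))
            for j in range(width)]


memory = [[1.0, 0.0], [0.0, 1.0]]
print(content_read(memory, key=[1.0, 0.0], strength=20.0))
```

With a high strength the read comes back very close to the first row. The point of the contrast is that here the memory is a visible matrix you can inspect, whereas the Neural Computer proposal folds it into latent runtime state.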

| System | Core Idea | Memory Style | Main Strength | Main Limitation |
|---|---|---|---|---|
| Differentiable Neural Computers | Neural controller with structured external memory | Explicit memory matrix with read/write mechanisms | More interpretable memory access and procedural structure | Still not a full learned runtime for general computing |
| Neural Computers, current paper | Learned runtime over interface traces | Latent runtime state carried through the model | Stronger unification of compute, memory, and I/O as one machine form | Weak symbolic reliability, short horizons, fragile reuse |
| Completely Neural Computers, target vision | General-purpose neural machine | Unbounded effective memory in principle, explicit run/update discipline | Could become programmable, reusable, and self-contained | Entirely aspirational today |

That difference is not cosmetic. DNCs were asking, “Can neural networks use memory more like data structures?” The new proposal is closer to asking, “Can the whole computer become a learned state machine?” Same family tree, bigger appetite.

This is also where the paper’s references to fast weight programmers, Neural Turing Machines, and Differentiable Neural Computers matter. The authors are not claiming a clean rupture from history. They are placing themselves in a long argument about how much of computation can migrate into learned systems. That is the right frame. Revolutionary papers are usually less “lightning from nowhere” and more “old obsessions returning with more compute and better tools.”

6. The Schmidhuber Thread Is Not A Side Plot

You cannot read this paper without noticing Jürgen Schmidhuber on the author list and throughout the intellectual background. That alone guarantees debate, and honestly, fair enough. The field has a habit of rediscovering old ideas, giving them new clothes, and acting shocked when the elders clear their throats.

The interesting question is not “who gets the single golden patent on the future.” The interesting question is which old ideas have become materially more plausible now. The paper explicitly situates itself near fast weight programmers, world models, Neural Turing Machines, and Differentiable Neural Computers. It also mentions systems like NeuralOS as part of the broader trajectory toward model-shaped runtimes and interface conditioning.

So yes, there is lineage here. And yes, some of today’s discourse behaves as if every concept was born the day it got a viral thread. But the real contribution of this paper is not that it discovered the thought “maybe computation can be neural.” People have been circling that thought for decades. The contribution is that it pushes the idea into a new systems frame, then tries to prototype it using actual interface traces at scale.

That is how progress usually works. Not as pure novelty, but as a change in what can be demonstrated rather than merely argued.

7. How To Make An AI Operating System

If you ask how to make an AI operating system, the paper’s answer is not “train a chatbot and call it a day.” It sketches a harder recipe.

First, you need a runtime state that actually persists useful computational context. Second, you need a clean distinction between running a capability and updating a capability. Third, you need something like universal programmability, where input sequences can reconfigure the system into new functional states. Fourth, you need behavior consistency, meaning the machine does not quietly mutate under ordinary use. The paper also argues that a true CNC would need unbounded effective memory in principle, not just a fixed-size hidden state pretending very hard.

That run-versus-update split is the sneaky important part. Traditional software has a decent answer to this already. Running a program is not the same as rewriting the operating system. A real AI operating system would need an equally crisp contract. Otherwise every interaction becomes a low-grade act of self-editing, which is fine for improvisational art and catastrophic for infrastructure.

This is where the paper starts to feel less like a flashy demo and more like systems philosophy. The hard problem is not only getting the model to do useful things. It is defining what counts as persistence, what counts as explicit reprogramming, and what evidence can be replayed or rolled back when behavior changes. That is not a model eval footnote. That is the whole game.
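Conventional software already knows how to express a run-versus-update contract, and a small sketch shows what the neural version would have to match. This is an analogy in ordinary Python, not anything from the paper: `GovernedRuntime` and its methods are hypothetical names. Running a capability never changes what is installed, updating is an explicit call that records a new read-only snapshot, and rollback simply discards the latest snapshot.

```python
from types import MappingProxyType
from typing import Callable, List, Mapping

Capability = Callable[[str], str]


class GovernedRuntime:
    """Toy runtime that keeps 'run' and 'update' strictly separate."""

    def __init__(self) -> None:
        # Each entry is a read-only snapshot of the installed capabilities.
        self._versions: List[Mapping[str, Capability]] = [MappingProxyType({})]

    @property
    def version(self) -> int:
        return len(self._versions) - 1

    def run(self, name: str, payload: str) -> str:
        """Use an installed capability; never changes what is installed."""
        return self._versions[-1][name](payload)

    def update(self, name: str, capability: Capability) -> int:
        """Explicit reprogramming: append a new snapshot, keep the old ones."""
        snapshot = dict(self._versions[-1])
        snapshot[name] = capability
        self._versions.append(MappingProxyType(snapshot))
        return self.version

    def rollback(self) -> int:
        """Discard the latest snapshot, if there is one to discard."""
        if len(self._versions) > 1:
            self._versions.pop()
        return self.version


rt = GovernedRuntime()
rt.update("shout", str.upper)
rt.update("shout", lambda text: text + "!")
print(rt.run("shout", "hello"), rt.version)
rt.rollback()
print(rt.run("shout", "hello"), rt.version)
```

The hard part for a learned runtime is that nothing enforces this boundary for free. In the sketch, `run` cannot touch the snapshots because the code says so. In a neural system, the equivalent guarantee has to be designed, trained, and audited.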

8. Why An AI Operating System For PC Is Still Far Away

The dream of an AI operating system for PC is easy to picture. Your machine stops being a pile of apps, drivers, queues, windows, and services. Instead it becomes a learned environment that can absorb tasks, remember workflows, and fluidly reshape its own interfaces around intent.

Great fantasy. Very expensive reality.

An AI operating system for PC would need to beat existing operating systems on the stuff users rarely praise because it already works: reliability, latency, isolation, rollback, compatibility, security, and predictability. It is not enough for the system to look intelligent. It has to survive real abuse. Broken USB devices. Corrupt files. Malicious inputs. Weird edge cases. Overnight updates. Enterprise policy. Ten-year-old printers. The glamorous future always forgets the printers.

There is also the memory problem. The paper notes that a complete system would require unbounded effective memory for true universality. That is not a small engineering detail. That is one of the central reasons the current prototype is still a research artifact rather than something you could actually run your work on. Add to that long-horizon reasoning, stable skill reuse, and governance of updates, and you can see why the CNC remains a roadmap rather than a product.

Still, “far away” does not mean “fake.” The better interpretation is that the paper is opening a second route forward. The mainstream route says AI will sit atop conventional computers as an agent layer. This paper says there may be another route where the substrate itself becomes learned. That is a real fork in the road.

9. Will It Reach Your PC?

So, will an AI operating system replace Windows, macOS, or Linux on your desk anytime soon? No. Not even close. The current prototypes are promising in the way a rocket engine on a test stand is promising. Loud, impressive, clearly important, not yet what you commute in.

But the idea is alive now in a way it was not a few years ago. The paper shows that early runtime primitives can be learned from interface traces. It shows that short-horizon control is not science fiction. It also shows, with admirable honesty, exactly where the floor still gives way: symbolic reliability, durable memory, capability reuse, and explicit runtime governance.

That is enough to make this more than a curiosity. The real value of the AI operating system thesis is that it forces us to ask a better question than “How do we make agents click faster?” The better question is, “What parts of the computer stack are truly essential, and what parts are historical packaging that a learned system might eventually absorb?”

My guess is that the near future belongs to hybrids. Conventional operating systems will stay. Agents will get better. Neural runtimes will sneak in through narrow, high-value surfaces first. Maybe developer environments. Maybe interface simulation. Maybe deeply adaptive personal workflows. Then, one day, the stack stops looking layered and starts looking learned.

That is the story to watch. Not whether this week’s demo can open Calculator, but whether researchers can turn these brittle, intriguing prototypes into systems that deserve trust. Read the papers in this space. Track the failures, not just the sizzle. That is where the next real platform shift will announce itself.

What is an AI operating system?

An AI operating system is a computing model where intelligence is part of the runtime itself, not just an app layered on top. In the Neural Computer vision, computation, memory, and input/output are folded into one learned system, so the model does not simply use the operating system. It behaves like one.

How is a Neural Computer different from an AI agent?

A Neural Computer is different because an AI agent still works inside an external environment like Windows, Linux, or a browser. The agent is the driver. The Neural Computer removes that split. Its own internal runtime state holds the memory, control logic, and interface behavior at the same time.

Can Neural Computers do exact math, or are they non-deterministic?

Not reliably yet. Early Neural Computer prototypes struggled on simple arithmetic because neural models are probabilistic and learned, not symbolically exact by default. Prompt conditioning improved results a lot, but that is still different from deterministic execution, which remains one of the biggest open problems.

What is a Differentiable Neural Computer (DNC)?

A Differentiable Neural Computer, or DNC, is an earlier DeepMind architecture that combines a neural network with an external read-write memory matrix. Neural Computers go further. Instead of attaching memory to the model, they aim to make the learned runtime itself carry memory, control, and interface state.

How do Neural Computers simulate a desktop interface?

Current Neural Computer prototypes learn from screen recordings plus mouse and keyboard traces. Given the last screen state and the latest user action, the model predicts the next frame. That lets it simulate a terminal or desktop visually, even when no traditional software code is being executed underneath.