Introduction

If you’ve spent five minutes around finance Twitter, you’ve seen the pitch: an LLM whispers a ticker, a direction, and a tidy probability, and you retire before lunch.

Reality is less cinematic. Markets punish confidence that isn’t earned. And the awkward truth about AI for stock prediction in 2026 is that the “smartest-sounding” model can be the most dangerous one, not because it’s dumb, but because it doesn’t know when it’s guessing.

A recent research paper introduced KalshiBench, a benchmark built from real prediction market questions that resolved after major model knowledge cutoffs, so models can’t simply remember the answer. The punchline is blunt: frontier models are systematically overconfident, and extended reasoning can make that problem worse. All of the benchmark facts and numbers in this post come from that paper.

This post is a practical read for anyone who cares about AI for stock prediction, especially if you’re shopping for a free dashboard, a shiny app, or an “AI picks” newsletter that swears it has cracked the code.

We’ll translate the paper into trader-friendly language, then end with a checklist you can use before you trust any AI for stock prediction output with real money.


1. Stock Prediction With AI In 2026, What “Best” Should Mean

People ask for the “best” model like they’re choosing a vacuum cleaner. Great suction, good reviews, done.

Forecasting is different. “Best” is not the model that produces the most confident answer. It’s the model that makes confidence feel boring, almost annoying, because it matches reality.

When we talk about AI for stock prediction, we usually mean one of two things:

- Directional calls, up or down.

- Probabilities, “70% chance this beats earnings,” “85% chance this breaks support.”

Direction is hard. Probabilities are harder, because probabilities are promises. If you say “90%,” you’re telling the reader: “In a big pile of similar situations, I’m wrong about one time out of ten.” If you can’t keep that promise, your 90% is just marketing.

1.1 The Three-Part Definition Of “Best”

Here’s the definition I’d actually use if I were judging AI for stock prediction systems like an engineer, not like a hype merchant.

AI for Stock Prediction, What “Best” Means

A practical definition: accuracy, calibration, and discipline.

| What “Best” Means | Why You Should Care | What It Looks Like In Practice |
|---|---|---|
| Accuracy | You want fewer wrong calls | Beats simple baselines on out-of-sample data |
| Calibration | You want the probabilities to mean something | 70% confidence is right about 70% of the time |
| Discipline | You want fewer “blown account” moments | The system refuses to cosplay certainty |

Accuracy is the headline stat, but calibration is the part that decides whether a probability is useful or weaponized.

2. The New Data, What KalshiBench Actually Tests

Most benchmarks are static. They test whether the model knows facts that existed when it was trained. That’s fine for trivia. It’s not fine for forecasting.

KalshiBench is different. It uses resolved prediction market contracts from Kalshi, a regulated exchange, and then filters questions so the outcomes happen after the latest model cutoff. The evaluation sample is 300 questions, across 13 categories, and it’s built to eliminate the “I memorized this” cheat mode.

That matters for AI for stock prediction because the core failure mode is the same: models are excellent at sounding informed, even when they have no legitimate access to future outcomes.

2.1 Why Prediction Markets Are A Great Stress Test

Prediction markets have two properties I love:

- They’re forced to resolve. Reality shows up with receipts.

- Questions are framed like decisions. “Will X happen by Y?” not “Explain X.”

That’s close to how traders think. Even if you never touch Kalshi, the structure maps well onto finance.

3. How The Paper Prevents Cheating, Temporal Filtering And Cutoffs

The key move is temporal filtering. The dataset keeps only markets that close after the maximum knowledge cutoff among the evaluated models. In this study, the effective cutoff is October 1, 2025.

So when a model answers, it can’t rely on “I saw this in training.” It has to forecast.

That’s the right kind of pressure if you’re trying to learn anything useful about AI for stock prediction.
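The filtering rule is simple enough to sketch. The Python below is a minimal illustration, not the paper's code: the `Market` record, the `temporal_filter` helper, and the per-model cutoff dates are hypothetical placeholders. Only the effective October 1, 2025 cutoff comes from the paper.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Market:
    question: str
    close_date: date
    resolved_yes: bool


# Hypothetical per-model knowledge cutoffs (placeholders, not from the paper).
# Only the maximum, 2025-10-01, matches the effective cutoff the paper reports.
MODEL_CUTOFFS = {
    "model_a": date(2025, 4, 1),
    "model_b": date(2025, 7, 1),
    "model_c": date(2025, 10, 1),
}


def temporal_filter(markets, cutoffs):
    """Keep only markets that close strictly after the latest model cutoff."""
    effective_cutoff = max(cutoffs.values())
    return [m for m in markets if m.close_date > effective_cutoff]


markets = [
    Market("Hypothetical question A", date(2025, 9, 15), True),    # closes before cutoff: dropped
    Market("Hypothetical question B", date(2025, 11, 30), False),  # closes after cutoff: kept
]
kept = temporal_filter(markets, MODEL_CUTOFFS)
print([m.question for m in kept])  # ['Hypothetical question B']
```

Filtering on the maximum cutoff, rather than each model's own, gives every model the same question set, and none of those questions could have been resolved in any model's training data.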

3.1 This Is What “Unknown” Actually Means

The paper is basically asking: when an LLM says “I’m 80% sure,” does that number track the real world, or does it track the model’s own vibes?

This distinction sounds philosophical until you lose money on it.

4. Models Evaluated, No Cherry-Picking

The authors evaluate five frontier models: Claude Opus 4.5, GPT-5.2-XHigh, DeepSeek-V3.2, Qwen3-235B-Thinking, and Kimi-K2.

This matters because online forecasting discourse loves a single screenshot. One model dunked on one question. A thousand retweets. Nobody asks about distributions.

KalshiBench is the opposite. It’s 300 questions. You get to see patterns.

4.1 A Note On “Reasoning Modes”

One evaluated variant is GPT-5.2-XHigh, an extended reasoning mode. The common assumption is “more reasoning equals better judgment.” The paper tests that assumption directly.

Spoiler: it doesn’t go the way people want.

5. What They Measured, The Metrics Traders Actually Need

The study reports accuracy and a set of calibration metrics. The two big ones are:

- Brier score, a squared error for probability forecasts, lower is better.

- Expected Calibration Error (ECE), the average gap between confidence and accuracy, lower is better.
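Both metrics fit in a few lines of Python. This is a sketch under stated assumptions: it computes ECE with 10 equal-width bins over the confidence of the predicted side, max(p, 1 - p), which is one common convention. The paper's exact binning may differ, and the function names are illustrative.

```python
def brier_score(probs, outcomes):
    """Mean squared error between forecast probabilities and 0/1 outcomes."""
    if not probs or len(probs) != len(outcomes):
        raise ValueError("need equal-length, non-empty inputs")
    return sum((p - o) ** 2 for p, o in zip(probs, outcomes)) / len(probs)


def expected_calibration_error(probs, outcomes, n_bins=10):
    """Weighted average |confidence - accuracy| gap across equal-width bins."""
    if not probs or len(probs) != len(outcomes):
        raise ValueError("need equal-length, non-empty inputs")
    bins = [[] for _ in range(n_bins)]
    for p, o in zip(probs, outcomes):
        predicted_yes = p >= 0.5
        confidence = p if predicted_yes else 1 - p  # confidence in the side chosen
        correct = predicted_yes == bool(o)
        idx = min(int(confidence * n_bins), n_bins - 1)
        bins[idx].append((confidence, correct))
    ece = 0.0
    for b in bins:
        if b:
            avg_conf = sum(c for c, _ in b) / len(b)
            accuracy = sum(1 for _, ok in b if ok) / len(b)
            ece += len(b) / len(probs) * abs(avg_conf - accuracy)  # weight by bin size
    return ece


# Toy data (made up): ten "90%" calls, of which seven come true.
probs = [0.9] * 10
outcomes = [1] * 7 + [0] * 3
print(round(brier_score(probs, outcomes), 3))                 # 0.25
print(round(expected_calibration_error(probs, outcomes), 3))  # 0.2
```

The model claims 90% but hits 70%, and ECE reports that 0.2 gap directly.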

It also reports Brier Skill Score (BSS), which answers a surprisingly savage question: “Do you beat the dumb baseline that always predicts the base rate?”

This is the metric I wish every AI for stock prediction product had to publish on the front page.
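Brier Skill Score compares a forecaster's Brier score with that of a constant forecast at the base rate: BSS = 1 - BS / BS_ref. Zero means no better than the base rate; negative means worse. The sketch below assumes the base rate is the observed YES frequency in the same sample unless you pass one in; the paper may define the reference slightly differently, and the function names are illustrative.

```python
def brier_score(probs, outcomes):
    """Mean squared error between forecast probabilities and 0/1 outcomes."""
    return sum((p - o) ** 2 for p, o in zip(probs, outcomes)) / len(probs)


def brier_skill_score(probs, outcomes, base_rate=None):
    """1 - BS / BS_ref, where the reference always predicts the base rate."""
    if base_rate is None:
        base_rate = sum(outcomes) / len(outcomes)  # in-sample base rate (assumption)
    bs = brier_score(probs, outcomes)
    bs_ref = brier_score([base_rate] * len(outcomes), outcomes)
    if bs_ref == 0:
        raise ValueError("reference Brier score is zero; BSS is undefined")
    return 1 - bs / bs_ref


outcomes = [1] * 7 + [0] * 3  # made-up resolutions
print(round(brier_skill_score([0.7] * 10, outcomes), 3))  # 0.0 (just the base rate)
print(round(brier_skill_score([0.9] * 10, outcomes), 3))  # -0.19 (overconfident, so worse)
```

A confident forecaster with a decent hit rate can still land below zero, which is the pattern most models show in the table that follows.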

5.1 Calibration, In One Sentence

If a model says “90%” and it’s wrong 30% of the time, you don’t have a predictor. You have a confidence generator.

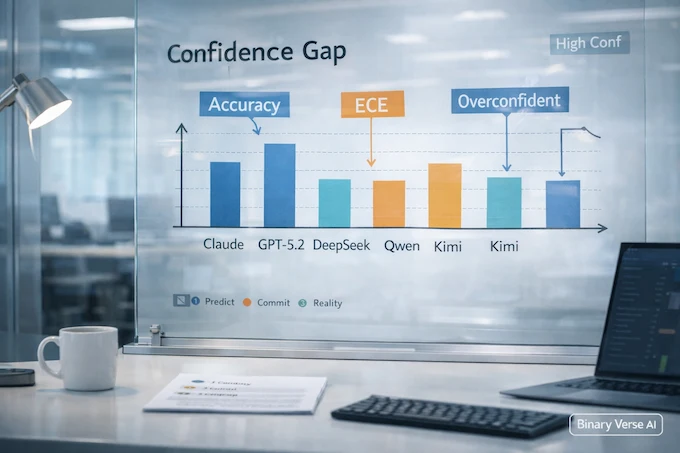

6. Headline Finding, Frontier Models Are Systematically Overconfident

Here’s the uncomfortable part. Accuracy sits in a fairly tight band, from about 64% to 69%. Calibration varies wildly.

ECE ranges from 0.120 to 0.395 across the models, so the average gap between stated confidence and actual accuracy is more than three times larger for the worst-calibrated model than for the best.

If you’re using AI for stock prediction, this is the trap. You can get similar hit rates from different systems while getting radically different risk, because the risk is hidden inside the confidence.
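A toy comparison makes the hidden-risk point concrete. The two forecasters below are invented: both pick the right side on 7 of 10 questions, but one says 70% and the other says 95%. Accuracy cannot tell them apart; the Brier score can.

```python
def accuracy(probs, outcomes):
    """Share of questions where the side with p >= 0.5 matched the outcome."""
    return sum((p >= 0.5) == bool(o) for p, o in zip(probs, outcomes)) / len(probs)


def brier_score(probs, outcomes):
    """Mean squared error between forecast probabilities and 0/1 outcomes."""
    return sum((p - o) ** 2 for p, o in zip(probs, outcomes)) / len(probs)


outcomes = [1] * 7 + [0] * 3  # made-up resolutions
modest = [0.70] * 10          # always says YES at 70%
spicy = [0.95] * 10           # always says YES at 95%

print(accuracy(modest, outcomes), accuracy(spicy, outcomes))  # 0.7 0.7
print(round(brier_score(modest, outcomes), 4))  # 0.21
print(round(brier_score(spicy, outcomes), 4))   # 0.2725
```

Same hit rate, but the 95% forecaster pays heavily for every confident miss, which is exactly the risk a headline accuracy number hides.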

6.1 The Table That Should Make You Pause

Below is the kind of table I wish showed up in every “best ai stock predictor” landing page. It’s not a vibe check. It’s the boring accounting.

AI for Stock Prediction, Model Calibration Scorecard

Accuracy and uncertainty quality side by side, from KalshiBench-style metrics.

| Model | Accuracy | ECE (Lower Is Better) | BSS (Higher Is Better) | Error Rate At 90%+ Confidence |
|---|---|---|---|---|
| Claude Opus 4.5 | 69.3% | 0.120 | 0.057 | 20.8% |
| DeepSeek-V3.2 | 64.3% | 0.284 | -0.407 | 14.7% |
| Kimi-K2 | 67.1% | 0.298 | -0.446 | 31.1% |
| Qwen3-235B-Thinking | 65.7% | 0.297 | -0.437 | 32.4% |
| GPT-5.2-XHigh | 65.3% | 0.395 | -0.799 | 27.7% |

Numbers are from the KalshiBench evaluation.

Claude Opus 4.5 is “best” here not because it’s magically accurate, but because it’s the least misleading about its own uncertainty.

7. GPT-5.2 Vs The Pack, When “More Reasoning” Breaks Trust

GPT-5.2-XHigh lands near the pack on accuracy. But on calibration, it’s the worst.

Its Brier Skill Score is -0.799, the most negative of the five, and its ECE is 0.395, the highest. So why does extended reasoning make things worse?

The paper points to a very human failure: confirmation bias. Longer chains can turn into a persuasive essay for the first guess, not a genuine search for what’s true.

That maps cleanly onto AI for stock prediction workflows. You prompt the model with a narrative, the model writes a better narrative, and suddenly you feel smarter. Feeling smarter is not a trading edge.

7.1 A Weird Litmus Test

If the model’s “reasoning” reads like it’s trying to win a debate, you should treat its probability like a sales pitch.

Good forecasters don’t argue. They update.

8. The Part Traders Miss, 90% Still Means “Wrong A Lot”

Table 5 in the paper is quietly terrifying. Models average very high confidence, and they stay confident even when wrong.

At the 90%+ confidence threshold, models are still wrong roughly 15% to 32% of the time, depending on the model.

That’s the blown-account mechanism. Not the normal loss you planned for, the “I sized up because it was 95%” loss.

If you’re building or buying AI for stock prediction tools, this is the metric to tattoo on your spec doc.
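The “wrong when 90%+ confident” number is easy to compute from your own logs. A minimal sketch, assuming binary forecasts where the model's confidence is max(p, 1 - p); the function name and threshold handling are illustrative, not taken from the paper.

```python
def error_rate_at_confidence(probs, outcomes, threshold=0.9):
    """Fraction of wrong calls among forecasts with confidence >= threshold.

    Returns None when no forecast reaches the threshold.
    """
    wrong = total = 0
    for p, o in zip(probs, outcomes):
        predicted_yes = p >= 0.5
        confidence = p if predicted_yes else 1 - p  # a 5% YES is a 95% NO
        if confidence >= threshold:
            total += 1
            if predicted_yes != bool(o):
                wrong += 1
    return None if total == 0 else wrong / total


# Made-up log: the 0.60 call is below the threshold and ignored.
probs = [0.95, 0.92, 0.05, 0.60, 0.97]
outcomes = [1, 0, 0, 1, 1]
print(error_rate_at_confidence(probs, outcomes))  # 0.25
```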

8.1 The Paper Even Told The Models To Behave

This is my favorite detail. The evaluation prompt explicitly tells the model: be calibrated, if you’re 70% confident you should be right 70% of the time. The models still fail.

So no, you can’t prompt-engineer your way out of this.

9. Does This Transfer To Stock Prediction, Similarity And Limits

KalshiBench is future-event forecasting. Stocks are future-event forecasting too, except the event is adversarial, reflexive, and noisy.

So yes, the calibration lesson transfers. If an LLM can’t reliably map its confidence to reality on clean yes-no questions, you should assume it won’t magically become calibrated when you ask for price direction.

That said, the paper also gives you a reason not to overclaim: the “Financials” category in the evaluation sample is tiny, only 8 questions.

So this is not “it proved Claude is best at stocks.” It’s “it proved that most LLM confidence is too spicy for high-stakes forecasting.”
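Why does a tiny category matter? A binomial confidence interval shows how little 8 questions can pin down. The sketch uses the Wilson score interval; the 6-of-8 and 200-of-300 hit counts are hypothetical, chosen only to compare sample sizes, not results from the paper.

```python
import math


def wilson_interval(successes, n, z=1.96):
    """Approximate 95% Wilson score interval for a binomial proportion."""
    if n <= 0:
        raise ValueError("n must be positive")
    phat = successes / n
    denom = 1 + z ** 2 / n
    center = (phat + z ** 2 / (2 * n)) / denom
    half = z * math.sqrt(phat * (1 - phat) / n + z ** 2 / (4 * n ** 2)) / denom
    return center - half, center + half


for hits, n in [(6, 8), (200, 300)]:  # hypothetical hit counts
    lo, hi = wilson_interval(hits, n)
    print(f"{hits}/{n}: {lo:.2f} to {hi:.2f}")
```

With 8 questions, a 75% hit rate is consistent with anything from roughly 41% to 93%; with 300 questions, a 67% hit rate narrows to roughly 61% to 72%.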

9.1 The Practical Translation For Model Confidence

- Treat raw probabilities as untrusted until you calibrate them yourself.

- Treat “reasoning mode” probabilities as extra untrusted.

- Treat any AI for stock prediction demo that never shows calibration metrics as a product that is optimizing for persuasion.

10. A Practical Way To Use LLMs Without Getting Fooled

Here’s the sanity-preserving approach to AI for stock prediction: use the model as a thinking partner, not as an oracle.

Good uses:

- Summarize earnings calls, filings, and news with citations.

- Generate hypotheses you can test, like “what would need to be true for this margin expansion story to hold?”

- Build scenario trees, bull case, base case, bear case, with explicit assumptions.

- Red-team your thesis, “what’s the simplest way this blows up?”

Bad uses:

- “Give me the probability that XYZ goes up tomorrow.”

- “Pick three stocks with 90% confidence.”

- “Backtest this strategy” without real data and slippage modeling.

The paper’s deployment advice is blunt: don’t trust high-confidence predictions, and don’t assume more reasoning helps.

10.1 Calibration Is A Workflow, Not A Prompt

If you want AI for stock prediction probabilities you can actually use, you need an out-of-sample calibration loop:

- Collect predictions with timestamps.

- Lock the target definition, what counts as “up,” what horizon.

- Score with a proper rule, Brier score is a solid default.

- Apply post-hoc calibration on a held-out set, temperature scaling, isotonic, or something domain-specific.
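The first three steps amount to an append-only log plus a scoring function. This is a hypothetical structure, not a library API: `TargetSpec`, `ForecastRecord`, and `ForecastLog` are illustrative names, and “up” is assumed to mean a rise of more than `threshold_pct` over `horizon_days`.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import List, Optional


@dataclass(frozen=True)
class TargetSpec:
    """Locked before forecasting: what counts as YES, and over what horizon."""
    ticker: str
    horizon_days: int
    threshold_pct: float  # YES if the price rises by more than this over the horizon


@dataclass
class ForecastRecord:
    target: TargetSpec
    prob_yes: float
    made_at: datetime              # timestamp recorded when the forecast is logged
    outcome: Optional[int] = None  # 1 or 0, filled in only after resolution


class ForecastLog:
    def __init__(self) -> None:
        self.records: List[ForecastRecord] = []

    def log(self, target: TargetSpec, prob_yes: float) -> ForecastRecord:
        if not 0.0 <= prob_yes <= 1.0:
            raise ValueError("probability must be in [0, 1]")
        record = ForecastRecord(target, prob_yes, datetime.now(timezone.utc))
        self.records.append(record)
        return record

    def brier(self) -> Optional[float]:
        """Brier score over resolved forecasts only; None if nothing has resolved."""
        resolved = [r for r in self.records if r.outcome is not None]
        if not resolved:
            return None
        return sum((r.prob_yes - r.outcome) ** 2 for r in resolved) / len(resolved)


spec = TargetSpec(ticker="XYZ", horizon_days=5, threshold_pct=0.0)  # hypothetical target
forecast_log = ForecastLog()
a = forecast_log.log(spec, 0.8)
b = forecast_log.log(spec, 0.3)
forecast_log.log(spec, 0.6)  # still open, so it is excluded from scoring
a.outcome, b.outcome = 1, 0
print(round(forecast_log.brier(), 3))  # 0.065
```

Freezing `TargetSpec` is one way to enforce “lock the target definition”: you cannot quietly change what counts as “up” after the fact.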

This is boring. Boring is good.
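Of the calibration options above, temperature scaling is the simplest. The sketch below is one way to do it for yes/no probabilities, under stated assumptions: it divides each forecast's log-odds by a single temperature T, picks T by grid search to minimize log loss on a held-out set, and uses made-up data. T > 1 pulls overconfident probabilities toward 50%. Isotonic regression would replace the single parameter with a monotone step function.

```python
import math


def logit(p, eps=1e-6):
    p = min(max(p, eps), 1 - eps)  # avoid infinite log-odds at 0 or 1
    return math.log(p / (1 - p))


def sigmoid(x):
    return 1 / (1 + math.exp(-x))


def apply_temperature(p, temperature):
    """Rescale a probability's log-odds by 1 / temperature."""
    return sigmoid(logit(p) / temperature)


def log_loss(probs, outcomes, eps=1e-12):
    total = 0.0
    for p, o in zip(probs, outcomes):
        p = min(max(p, eps), 1 - eps)
        total -= o * math.log(p) + (1 - o) * math.log(1 - p)
    return total / len(probs)


def fit_temperature(probs, outcomes):
    """Grid-search T in [0.5, 5.0] minimizing held-out log loss."""
    grid = [0.5 + 0.05 * i for i in range(91)]
    return min(grid, key=lambda t: log_loss([apply_temperature(p, t) for p in probs], outcomes))


# Made-up held-out set: the model said 95% ten times and was right seven times.
held_out_probs = [0.95] * 10
held_out_outcomes = [1] * 7 + [0] * 3
t = fit_temperature(held_out_probs, held_out_outcomes)
print(round(t, 2), round(apply_temperature(0.95, t), 2))
```

Fit T on one set of resolved forecasts, then apply it only to forecasts made afterward; fitting and scoring on the same data would flatter the result.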

11. AI Stock Prediction Tool, Website, App, The Checklist That Matches Buyer Intent

This section is for readers shopping for AI for stock prediction. If you’re evaluating an ai stock prediction tool, an ai stock prediction website, an ai stock prediction app, or a “free ai stock picker” that promises an edge, here’s what to demand before you trust it.

11.1 What A Legit Product Should Show You

- Live track record, time-stamped, not cherry-picked.

- Out-of-sample protocol, walk-forward, no peeking.

- Baselines, including “predict the base rate” and “do nothing.”

- Calibration reporting, not just accuracy.

- Costs, including fees, spreads, and realistic slippage.

- Failure cases, a gallery of confident wrong calls.
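Two items on that list, a walk-forward protocol and a base-rate baseline, fit together in a short sketch. It is illustrative, assuming a time-ordered list of 0/1 outcomes and a fixed-size rolling training window; the function names are hypothetical. The key property is that each test window is scored using only data that came before it.

```python
def walk_forward_splits(n, train_size, test_size):
    """Yield (train, test) index ranges; each test window follows its train window in time."""
    start = 0
    while start + train_size + test_size <= n:
        train = range(start, start + train_size)
        test = range(start + train_size, start + train_size + test_size)
        yield train, test
        start += test_size  # roll forward by one test window


def base_rate_brier_walk_forward(outcomes, train_size, test_size):
    """Brier score of the 'predict the past base rate' baseline, per test window."""
    scores = []
    for train, test in walk_forward_splits(len(outcomes), train_size, test_size):
        base_rate = sum(outcomes[i] for i in train) / len(train)  # past data only
        scores.append(sum((base_rate - outcomes[i]) ** 2 for i in test) / len(test))
    return scores


outcomes = [1, 0, 1, 1, 0, 1, 0, 0, 1, 1]  # made-up, time-ordered
for train, test in walk_forward_splits(len(outcomes), train_size=4, test_size=2):
    print(list(train), "->", list(test))
print(base_rate_brier_walk_forward(outcomes, 4, 2))
```

A product's model should beat these baseline scores on the same windows; if it cannot, its forecasts add nothing over the base rate.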

If a vendor can’t show this, it’s not an AI for stock prediction product. It’s content marketing with a chart.

11.2 The “Free” Trap

Searches are full of “free ai stock analysis” and “free ai stock prediction.” Free can be fine, but free usually means you are the product: the system optimizes for engagement, and engagement likes certainty.

If you want a free tool, fine. Just treat it like a weather app: useful for orientation, dangerous for making leveraged decisions.

And if the site claims it’s the “best ai stock predictor,” ask for ECE and Brier Skill Score. Watch how fast the conversation changes.

12. Verdict, The “Best” Model Knows What It Doesn’t Know

KalshiBench doesn’t tell you how to trade. It tells you something more valuable: confidence from frontier LLMs is not yet a reliable instrument.

Claude Opus 4.5 comes out looking “best” on this benchmark because it’s the least overconfident and the only model that beats the base-rate baseline on Brier Skill Score, and only barely (0.057). That is not a trophy. It’s a warning label with a slightly nicer font.

So what should you do with LLM-based stock forecasting in 2026?

- Use LLMs to widen your search, sharpen your questions, and compress information.

- Refuse to treat a model’s raw probability as trade sizing input until you’ve calibrated it on your own data.

- Prefer systems that publish calibration metrics, even when they look bad.

- Build a small internal KalshiBench-style harness for your own forecasts, then score it.

If you’re building tools, take this as a product spec. If you’re buying them, take it as a filter. Either way, you’ll waste less time, lose less money, and stop mistaking articulate text for calibrated confidence.

Want to do this the right way? If you care about AI for stock prediction, build a tiny evaluation loop this week. Pick 50 yes-no forecasts you genuinely don’t know, log the model’s probabilities, then score them when they resolve. Once you’ve seen your own reliability curve, you’ll never read an AI for stock prediction landing page the same way again.
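A reliability curve is just a binned table of average forecast versus observed frequency. Here is a minimal sketch with 5 equal-width bins, an arbitrary choice that suits a small set like 50 forecasts; the function name and toy data are illustrative.

```python
def reliability_curve(probs, outcomes, n_bins=5):
    """Per non-empty bin: (low, high, mean forecast, observed YES rate, count)."""
    bins = [[] for _ in range(n_bins)]
    for p, o in zip(probs, outcomes):
        idx = min(int(p * n_bins), n_bins - 1)  # p = 1.0 goes in the top bin
        bins[idx].append((p, o))
    rows = []
    for i, b in enumerate(bins):
        if b:
            mean_p = sum(p for p, _ in b) / len(b)
            observed = sum(o for _, o in b) / len(b)
            rows.append((i / n_bins, (i + 1) / n_bins, mean_p, observed, len(b)))
    return rows


probs = [0.10, 0.15, 0.90, 0.95]  # made-up forecasts
outcomes = [0, 0, 1, 0]
for low, high, mean_p, observed, count in reliability_curve(probs, outcomes):
    print(f"{low:.1f}-{high:.1f}: forecast {mean_p:.2f}, observed {observed:.2f}, n={count}")
```

For a well-calibrated model, the forecast and observed columns match bin by bin. In the toy data, the top bin averages above 90% but only half of its calls came true.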

Can you use AI to predict stocks?

You can use AI to estimate probabilities, spot patterns, and stress-test a thesis, but stock “prediction” is probabilistic, not certain. The real risk is confidence that sounds precise but isn’t calibrated. Treat AI outputs as inputs to a decision process, not the decision.

Can AI suggest stocks to buy?

Yes, AI can suggest candidates by screening, summarizing earnings, comparing metrics, and flagging narrative risks. But it shouldn’t be the final decision-maker. Require a clear thesis, a defined time horizon, and risk controls, then verify with data before you place a trade.

Which AI is best for prediction?

It depends on what “best” means. On KalshiBench, the strongest mix of accuracy and calibration was Claude Opus 4.5 (Accuracy 69.3%, ECE 0.120). GPT-5.2-XHigh had similar accuracy but the worst calibration (ECE 0.395), so its confidence was least trustworthy.

What is the best AI for stock analysis?

For analysis, LLMs shine at summarizing filings and news, extracting drivers, and generating scenario trees. For prediction, you want a calibration-first workflow: log probabilities over time, compare against baselines, and score reliability. “Smart explanations” matter less than trustworthy uncertainty.

How accurate is AI in stock trading?

Accuracy varies by market regime, costs (spreads, slippage), and evaluation quality. A key warning sign from KalshiBench is that four of the five models had a negative Brier Skill Score, meaning their probabilities scored worse than a simple base-rate baseline. If a “free ai stock prediction” site won’t show audited results, treat it as research, not a signal.