Every so often, a model release lands with the usual confetti, leaderboard screenshots, and claims that sound suspiciously like they were approved by three layers of marketing. Gemma 4 feels different. Not because the hype is bigger, but because the pitch is finally practical. Gemma 4 is not trying to be a distant moonshot that lives on rented GPUs in someone else’s data center. It is trying to be useful on your hardware, in your workflow, with your code, documents, tools, and local constraints.

That is why the release caught fire so quickly. Gemma 4 arrives with four sizes, serious coding and reasoning gains, long context, multimodal support, native system prompts, and an Apache 2.0 license that people can actually build businesses around. The gemma 4 release date, April 2, 2026, matters because it marks the moment Google stopped treating open weights like a side project and started treating them like infrastructure.

And yes, the benchmarks are strong. But the more important story is this: Google Gemma 4 looks like one of the first open model families designed around how developers actually work in 2026, not how model launches look on a slide.

Table of Contents

1. Benchmarks First, Victory Lap Later

The benchmark table tells a simple story. Gemma 4 is not winning because it is massive. It is winning because it squeezes an unusual amount of capability out of its size. That matters more than another flashy “bigger than ever” release. In local AI, efficiency is not a footnote. It is the whole game.

Here are the full benchmark figures from the source material.

| Benchmark | Gemma 4 31B | Gemma 4 26B A4B | Gemma 4 E4B | Gemma 4 E2B | Gemma 3 27B |

|---|---|---|---|---|---|

| MMLU Pro | 85.2% | 82.6% | 69.4% | 60.0% | 67.6% |

| AIME 2026 No Tools | 89.2% | 88.3% | 42.5% | 37.5% | 20.8% |

| LiveCodeBench v6 | 80.0% | 77.1% | 52.0% | 44.0% | 29.1% |

| Codeforces ELO | 2150 | 1718 | 940 | 633 | 110 |

| GPQA Diamond | 84.3% | 82.3% | 58.6% | 43.4% | 42.4% |

| Tau2 Avg. | 76.9% | 68.2% | 42.2% | 24.5% | 16.2% |

| HLE No Tools | 19.5% | 8.7% | – | – | – |

| HLE With Search | 26.5% | 17.2% | – | – | – |

| BigBench Extra Hard | 74.4% | 64.8% | 33.1% | 21.9% | 19.3% |

| MMMLU | 88.4% | 86.3% | 76.6% | 67.4% | 70.7% |

| MMMU Pro | 76.9% | 73.8% | 52.6% | 44.2% | 49.7% |

| OmniDocBench 1.5, lower is better | 0.131 | 0.149 | 0.181 | 0.290 | 0.365 |

| MATH-Vision | 85.6% | 82.4% | 59.5% | 52.4% | 46.0% |

| MedXPertQA MM | 61.3% | 58.1% | 28.7% | 23.5% | – |

| CoVoST | – | – | 35.54 | 33.47 | – |

| FLEURS, lower is better | – | – | 0.08 | 0.09 | – |

| MRCR v2 8 Needle 128k Avg. | 66.4% | 44.1% | 25.4% | 19.1% | 13.5% |

The big headline is obvious: the 31B and 26B models punch way above their weight. The more interesting detail is how broad the gains are. Coding, reasoning, multimodal tasks, long-context retrieval, even vision-heavy benchmarks, the family is not narrowly optimized for one benchmark genre. That makes the results harder to dismiss.

Still, benchmarks are not real life. They are weather forecasts. Useful, directional, and occasionally wrong in spectacular ways. What gives these numbers real bite is that they line up with the developer reaction: people immediately started talking about local coding agents, not just leaderboard screenshots. That usually means a release has crossed the line from “impressive” to “usable.”

2. Decoding The Lineup Without The Buzzword Fog

The naming looks slightly cryptic until you translate it into deployment logic.

The E2B and E4B models are the edge-first variants. The “E” stands for effective parameters, which is Google’s way of saying these models are engineered to behave larger than their active footprint suggests. These are the models aimed at phones, Raspberry Pi class devices, and lightweight laptops. They also get native audio, which is a bigger deal than it sounds. A local model that can listen, transcribe, and reason without routing everything through cloud APIs suddenly becomes interesting for voice interfaces, field tools, and offline assistants.

Then there is the 26B A4B model, the one many developers will probably end up loving most. It is a Mixture of Experts design with roughly 25.2B total parameters and about 3.8B active during inference. In plain English, it behaves like a larger model while spending compute more like a much smaller one. That is the sweet spot for anyone chasing fast local inference without feeling like they downgraded their brain.

The 31B dense model is the heavyweight. It is the one you reach for when raw quality matters more than token speed, when you want the strongest reasoning, or when you plan to fine-tune seriously. If the 26B is the practical engineer, the 31B is the slightly obsessive one who keeps refactoring your abstractions at 2 a.m. and is annoyingly often correct.

All four models share the broader identity of Gemma 4: long context, multimodal support, stronger coding, and better agent scaffolding. The difference is not whether they are capable. It is where you want the tradeoff between speed, memory, and absolute quality.

3. Gemma Vs Qwen, And The New Shape Of Local Competition

A year ago, the “best local LLM models” conversation felt messy. Plenty of models were good at one thing, weak at another, or burdened by licensing that made commercial teams nervous. Now the field is starting to look like a real market, and Gemma 4 has shoved itself right into the center of it.

The obvious comparison is Gemma vs Qwen. That debate exists for a reason. Both families matter, both have serious open-model credibility, and both aim at the same crowd: developers who want strong reasoning and coding without being permanently attached to an API bill. The difference is that Gemma 4 feels unusually balanced. It is not only about raw benchmark bragging rights. It combines strong scores, multimodal breadth, long context, tooling support, and a commercially permissive license in one package. That full-stack practicality is hard to beat.

For coding, that matters even more. The phrase best local LLM for coding gets thrown around too casually, but here it feels earned, or at least honestly debatable. The 31B posts very strong LiveCodeBench and Codeforces numbers. The 26B A4B looks even more intriguing for day-to-day work because speed matters when you are actually iterating. Nobody wants a local coding assistant that thinks deeply for 40 seconds and then autocomplete-suggests a bug with great confidence.

What makes this release especially compelling is that it supports the hybrid workflow many developers already use. Keep the heavy frontier models for occasional architecture reviews, massive context synthesis, or big design leaps. Use Gemma 4 locally for code edits, repo exploration, tool use, structured outputs, and day-long iteration. That is a sane division of labor. It is also probably where the market is heading.

4. The Quietly Important Stuff, System Prompts, Thinking, And Agents

Some model releases win attention with dramatic features. Others win loyalty by fixing the annoying stuff. Gemma 4 does both, but the second category may matter more over time.

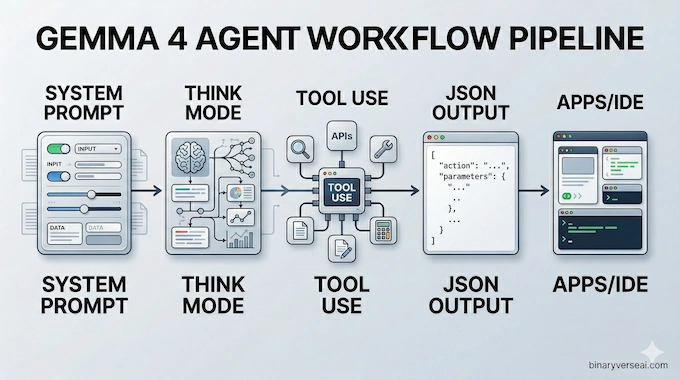

Native system prompt support sounds boring until you remember how many local-model workflows have been built on template hacks, prompt gymnastics, or wrapper-layer duct tape. Having proper support for system, user, and assistant roles makes the model easier to steer, easier to embed, and much less irritating inside real applications.

Then there is the built-in thinking mode. The <|think|> mechanism gives developers a more explicit handle on when the model should reason step by step. That does not magically solve reasoning, and it should not be romanticized. Hidden chain-of-thought tokens are not philosophy, they are plumbing. But good plumbing matters. It makes agentic workflows more controllable, especially when paired with structured outputs and function calling.

That agent story is where Gemma 4 gets serious. Native JSON output, tool use, longer context, and better instruction following mean it is not just a chatbot with good manners. It is a much better substrate for real systems. You can wire it into search, docs, IDE actions, internal APIs, and automation layers without feeling like you are building on top of a moody autocomplete engine.

This is also the point where the January 2025 knowledge cutoff stops sounding dramatic. A local model with tools is not trapped in its cutoff date. It is constrained by the quality of the tools you give it. That is a healthier way to think about open models in 2026.

5. Apache 2.0 Changes The Mood Completely

Licensing is the least glamorous part of model discourse and one of the most important. People love to talk about tokens per second and forget that a weird license can kill a project faster than slow inference ever will.

The Apache 2.0 shift is a major reason Gemma 4 matters. It changes the emotional temperature of the release. Instead of wondering what hidden restriction might surface later, startups and independent developers can plan around it with much more confidence. That kind of certainty is underrated. It lets people build products, not just experiments.

There is also a cultural effect. Open models do not thrive only because a lab publishes weights. They thrive because the community feels invited to do something audacious with them. Fine-tunes, quantizations, domain adaptations, agent stacks, local IDE tools, specialized multimodal apps, all of that accelerates when the legal ground feels stable.

That is why the local community reacted the way it did. Not with polite applause, but with immediate deployment energy. People were not saying, “Interesting paper.” They were saying, “Can this replace part of my current stack by the weekend?” That is the right question. It is also the question that separates a respectable release from a consequential one.

6. The Hardware Reality, Which Model Should You Actually Run?

This is where the romance ends and the GPU budget begins. The good news is that Gemma 4 looks thoughtfully sized. The better news is that the family spans everything from mobile-class use cases to serious workstations.

| Model | Context | Modalities | Local Fit | Approx. Practical Target |

|---|---|---|---|---|

| E2B | 128K | Text, Image, Audio | Edge and light laptops | Phones, Raspberry Pi, 8GB class devices |

| E4B | 128K | Text, Image, Audio | Better edge quality | Stronger ultrabooks, small local assistants |

| 26B A4B | 256K | Text, Image | Best speed-to-power balance | Q4 quant around 13GB VRAM |

| 31B Dense | 256K | Text, Image | Max local quality | Q4 quant around 17GB VRAM |

If you want my blunt advice, start with the 26B A4B. For most developers, it is the most exciting part of the lineup. It looks like the practical center of gravity for local coding, agent loops, and long-context work. Fast models get used. Slow models get admired.

The 31B dense model is what you try when you care about best-possible output and have the hardware to support it. It is the “I want the strongest open option on my desk” choice. The E2B and E4B models are more interesting than their size suggests because they point toward a future where local assistants are not just toys, they are embedded utilities that can hear, see, and respond with minimal latency.

That spread is why Gemma 4 stands out. It is not one heroic model. It is an actual family with clear deployment lanes.

7. Gemma 4 Ollama, Hugging Face, And The Practical On-Ramp

A lot of people searching gemma 4 ollama or gemma 4 hugging face are really asking a simpler question: is this annoying to run? From the source material, the answer looks pleasantly dull, which is exactly what you want.

Support landed across the usual ecosystem suspects: Transformers, Hugging Face, llama.cpp, MLX, Ollama, LM Studio, vLLM, and more. That matters because infrastructure friction kills curiosity. If a model is strong but awkward, people benchmark it once and move on. If it loads cleanly in familiar tools, it becomes part of someone’s daily loop.

7.1 The Fast Path

If you are a Python person, load it through Transformers and start there. If you live in desktop tooling land, grab a quant and test it in LM Studio or Ollama. If your question is whether the GGUF ecosystem will catch up, history suggests it will move at internet speed whenever a release feels worth the trouble.

The more interesting point is not that Gemma 4 can run in all these places. It is that the model design now matches the deployment ecosystem. Long context, tool use, system prompts, structured outputs, and coding strength make much more sense when they are paired with easy local runners. That is how a model escapes the benchmark lab and becomes a workstation habit.

8. Final Verdict, The Best Open Release Is The One You Actually Use

The smartest thing about Gemma 4 is that it does not ask you to believe in a fantasy. It does not claim local models have solved everything. It does not pretend a January 2025 cutoff is irrelevant in all cases. It does not erase the fact that frontier cloud models still have advantages.

What it does offer is something more valuable: a credible local-first stack with strong reasoning, strong coding, real multimodal utility, long context, cleaner agent support, and a license that does not make builders nervous. That combination is rare. As Google announced, this release marks a significant step forward for the open-weights ecosystem.

So, is this the best local LLM for coding right now? For a lot of developers, yes, or close enough that the difference stops mattering and the workflow starts mattering more. If you want maximum quality, try the 31B. If you want the best balance of speed, capability, and day-to-day usefulness, the 26B A4B looks like the star.

That is the real takeaway. Gemma 4 is not exciting because it is open. It is exciting because it makes local AI feel less like a compromise and more like a sensible default.

Install it. Throw a real repo at it. Run a tool-using workflow. See how much cloud you still need after that.

What is the Gemma 4 model and how good is it?

Gemma 4 is Google DeepMind’s newest open model family, with E2B, E4B, 26B A4B, and 31B variants built for reasoning, coding, multimodal tasks, and agentic workflows. Google publicly announced it on April 2, 2026, while the Gemma releases page lists the model release on March 31, 2026. Google also says the 31B model ranks #3 and the 26B model #6 among open models on Arena AI’s text leaderboard.

What are the VRAM requirements to run Gemma 4 locally?

For 4-bit GGUF local inference, the Gemma 4 26B A4B Q4_K_M file is listed at 16.8 GB, while the Gemma 4 31B Q4_K_M file is 19.6 GB. In practice, guides recommend about 16 to 18 GB total memory for 26B A4B and 17 to 20 GB for 31B, with extra headroom helping once context and KV cache grow. If you want the full model on GPU, aim for a little more VRAM than the raw file size.

Is Gemma 4 better than Qwen 3.5?

On coding and reasoning, Gemma 4 looks extremely competitive and often stronger at similar sizes. Google’s own numbers put Gemma 4 31B at 80.0 on LiveCodeBench v6 and 89.2 on AIME 2026, and recent comparison writeups place Gemma 4’s top models ahead of or directly challenging Qwen 3.5 in several public evaluations. The fair answer is that Gemma 4 is often the better pick for local coding and reasoning, while Qwen can still be attractive for some multilingual or speed-focused setups.

Is Gemma 4 truly open source?

Yes, in the way builders actually care about. Google released Gemma 4 under the Apache 2.0 license, and Google’s open source blog explicitly says it is the first Gemmaverse release under that OSI-approved license. That gives developers a familiar permissive framework for commercial use, modification, and deployment.

How does Gemma 4 handle the January 2025 knowledge cutoff?

Gemma 4 does not erase the cutoff internally. Google’s model card says the training data cutoff is January 2025. The practical workaround is tool use: the official Gemma 4 docs support function calling, which lets developers connect the model to live search, databases, APIs, or internal documentation and feed fresh information at runtime.