Most coding models are brilliant interns for about fifteen minutes. They race through the obvious fixes, produce something flashy, and then quietly run out of ideas. That has been the pattern for a while. Lots of demos. Lots of “agentic” marketing. Not a lot of evidence that the model can keep working once the first easy gains are gone.

GLM 5.1 is interesting because it seems built for the part after the demo. It does not just sprint through a repo and call it a day. GLM 5.1 is aimed at long-horizon software work, the kind where the first draft is rarely the real problem. The real problem is iteration. Benchmark. Debug. Rethink. Try again. Then do that another few hundred times without turning into a confused autocomplete engine.

That is why GLM 5.1 matters. It is not merely trying to be the best chatbot that can code. It is trying to be a model that can hold onto a goal long enough to make real engineering progress. In a market obsessed with vibe coding, GLM 5.1 makes a stronger claim. It wants to be useful when the work gets boring, messy, and expensive.

If that claim holds up, this is not just another model launch. It is a shift in what developers should expect from coding systems in the first place.

1. The GLM 5.1 Shockwave

The easiest way to understand the release is this: the best LLM for vibe coding is not automatically the best LLM for building real systems. One is optimized for momentum and polish. The other is optimized for stamina. The main reason GLM 5.1 landed with so much force is that it framed the problem around endurance, not just first-pass cleverness.

Here is the full benchmark picture presented with the release.

| Benchmark | GLM 5.1 | GLM-5 | Qwen3.6-Plus | MiniMax M2.7 | DeepSeek-V3.2 | Kimi K2.5 | Claude Opus 4.6 | Gemini 3.1 Pro | GPT-5.4 |
|---|---|---|---|---|---|---|---|---|---|
| **Reasoning** | | | | | | | | | |
| HLE | 31.0 | 30.5 | 28.8 | 28.0 | 25.1 | 31.5 | 36.7 | 45.0 | 39.8 |
| HLE w/ Tools | 52.3 | 50.4 | 50.6 | – | 40.8 | 51.8 | 53.1* | 51.4* | 52.1* |
| AIME 2026 | 95.3 | 95.4 | 95.1 | 89.8 | 95.1 | 94.5 | 95.6 | 98.2 | 98.7 |
| HMMT Nov. 2025 | 94.0 | 96.9 | 94.6 | 81.0 | 90.2 | 91.1 | 96.3 | 94.8 | 95.8 |
| HMMT Feb. 2026 | 82.6 | 82.8 | 87.8 | 72.7 | 79.9 | 81.3 | 84.3 | 87.3 | 91.8 |
| IMOAnswerBench | 83.8 | 82.5 | 83.8 | 66.3 | 78.3 | 81.8 | 75.3 | 81.0 | 91.4 |
| GPQA-Diamond | 86.2 | 86.0 | 90.4 | 87.0 | 82.4 | 87.6 | 91.3 | 94.3 | 92.0 |
| **Coding** | | | | | | | | | |
| SWE-Bench Pro | 58.4 | 55.1 | 56.6 | 56.2 | – | 53.8 | 57.3 | 54.2 | 57.7 |
| NL2Repo | 42.7 | 35.9 | 37.9 | 39.8 | – | 32.0 | 49.8 | 33.4 | 41.3 |
| Terminal-Bench 2.0, Terminus-2 | 63.5 | 56.2 | 61.6 | – | 39.3 | 50.8 | 65.4 | 68.5 | – |
| Terminal-Bench 2.0, Best Self-Reported Harness | 69.0 (Claude Code) | 56.2 (Claude Code) | – | 57.0 (Claude Code) | 46.4 (Claude Code) | – | – | – | 75.1 (Codex) |
| CyberGym | 68.7 | 48.3 | – | – | 17.3 | 41.3 | 66.6 | – | – |
| **Agentic** | | | | | | | | | |
| BrowseComp | 68.0 | 62.0 | – | – | 51.4 | 60.6 | – | – | – |
| BrowseComp w/ Context Manage | 79.3 | 75.9 | – | – | 67.6 | 74.9 | 84.0 | 85.9 | 82.7 |
| τ³-Bench | 70.6 | 69.2 | 70.7 | 67.6 | 69.2 | 66.0 | 72.4 | 67.1 | 72.9 |
| MCP-Atlas Public Set | 71.8 | 69.2 | 74.1 | 48.8 | 62.2 | 63.8 | 73.8 | 69.2 | 67.2 |
| Tool-Decathlon | 40.7 | 38.0 | 39.8 | 46.3 | 35.2 | 27.8 | 47.2 | 48.8 | 54.6 |
| Vending Bench 2 | $5,634.41 | $4,432.12 | $5,114.87 | – | $1,034.00 | $1,198.46 | $8,017.59 | $911.21 | $6,144.18 |

The headline number is the obvious one. The GLM 5.1 benchmark result on SWE-Bench Pro is 58.4, ahead of Claude Opus 4.6 at 57.3 and GPT-5.4 at 57.7. That gap is not huge, but it is real. More importantly, the release argues that the benchmark lead is not the whole story.

What makes GLM 5.1 more interesting than a one-point leaderboard flex is the shape of its performance. The model is framed as a system that keeps extracting value from additional runtime, not one that peaks early and then just burns tokens.

2. Is GLM 5.1 The Best LLM For Agentic Coding?

If you want the short version, GLM 5.1 has a strong case for best LLM for agentic coding right now, especially if your definition of “best” includes persistence, not just brilliance.

The best example is VectorDBBench. In the standard short-budget setting, models make a few familiar moves and then flatten out. In the longer optimization loop, GLM 5.1 kept going for 655 iterations and more than 6,000 tool calls, eventually reaching 21.5k QPS under a recall constraint that had tripped earlier attempts. That is not a cosmetic improvement. That is a model finding new gears.

The pattern is what Z.ai calls a staircase. Long stretches of tuning, then a structural jump. First IVF probing and f16 compression. Later, a two-stage search with u8 prescoring and f16 reranking. Then more routing and pruning changes. That sounds dry until you realize what it implies: the model is not just tweaking constants, it is changing strategy.
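To make the "two-stage search" idea concrete, here is a minimal sketch of coarse prescoring followed by a more precise rerank. It is not the code the model produced in the run. The function names, the 16-dimensional random data, and the shortlist size are all hypothetical, and plain Python floats stand in for the f16 rerank stage.

```python
import random

def quantize_u8(vec, lo=-1.0, hi=1.0):
    """Map each float in [lo, hi] to an integer code in 0..255."""
    scale = 255.0 / (hi - lo)
    return [min(255, max(0, round((x - lo) * scale))) for x in vec]

def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length sequences."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def two_stage_search(query, vectors, codes, k=3, shortlist=10):
    """Stage 1: rank every vector by distance between u8 codes (cheap, approximate).
    Stage 2: rerank only the shortlist with full-precision distance."""
    q_code = quantize_u8(query)
    coarse = sorted(range(len(vectors)), key=lambda i: sq_dist(q_code, codes[i]))
    candidates = coarse[:shortlist]
    return sorted(candidates, key=lambda i: sq_dist(query, vectors[i]))[:k]

# Hypothetical data: 200 random vectors, quantized once up front.
rng = random.Random(0)
vectors = [[rng.uniform(-1, 1) for _ in range(16)] for _ in range(200)]
codes = [quantize_u8(v) for v in vectors]

# Searching with a stored vector as the query should return that vector first.
top = two_stage_search(vectors[7], vectors, codes)
```

The design point is that the expensive comparison only touches the shortlist. A larger shortlist tends to recover more true neighbors at the cost of more rerank work, which is the kind of tradeoff a recall constraint forces the optimizer to respect.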

This is where GLM 5.1 separates itself from the usual wave of agent demos. Many systems look smart when they are harvesting easy wins from a clean setup. They look much less smart when the task starts demanding judgment, restraint, and repeated self-correction. That is why the Linux desktop demo matters, too. Eight hours is not impressive because eight is a big number. It is impressive because the model kept finding the next missing piece instead of congratulating itself after a half-built taskbar.

KernelBench adds some realism here. Claude still leads in that test at 4.2x speedup versus 3.6x for GLM 5.1. That matters. This is not a clean sweep. But it is exactly the kind of limitation that makes the whole release feel more credible. The story is not “we beat everybody at everything.” The story is “we pushed the productive horizon further than open models usually do.”

That is a bigger deal than it sounds.

3. Z.ai Coding Plan And GLM 5.1 Pricing

A great model can still be annoying in practice if the pricing is weird. Pricing is the part buyers should read twice.

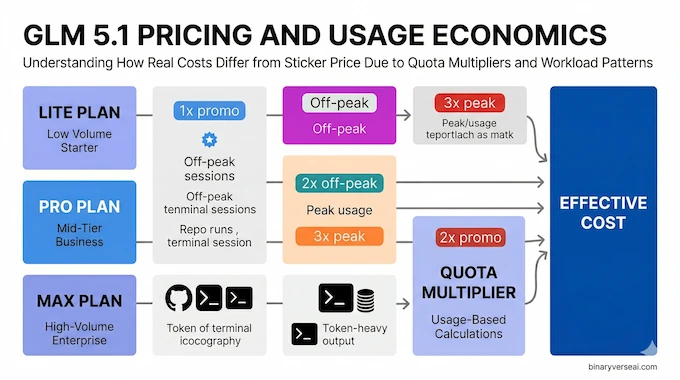

The awkward part of GLM 5.1 pricing is not the raw API rate. It is the quota multiplier. Z.ai says the model consumes quota at 3x during peak Beijing hours and 2x off-peak, with a temporary off-peak promotion at 1x through the end of April. That means the sticker price alone does not tell you what a real workday costs.
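The multiplier arithmetic is easy to sanity-check. The sketch below uses only the 3x, 2x, and promotional 1x figures quoted above. The notion of "base units" (what a request would cost at 1x) and the function names are assumptions for illustration, not Z.ai's accounting terms.

```python
def quota_multiplier(peak: bool, promo_active: bool = False) -> int:
    """Multiplier as described above: 3x peak, 2x off-peak, 1x off-peak during the promo."""
    if peak:
        return 3
    return 1 if promo_active else 2

def quota_used(base_units: float, peak: bool, promo_active: bool = False) -> float:
    """Quota deducted for work that would cost base_units at 1x."""
    return base_units * quota_multiplier(peak, promo_active)

def work_per_quota(quota: float, peak: bool, promo_active: bool = False) -> float:
    """How much 1x-equivalent work a fixed quota buys in a given window."""
    return quota / quota_multiplier(peak, promo_active)

day = 100  # hypothetical workday, measured in base units
peak_cost = quota_used(day, peak=True)
offpeak_cost = quota_used(day, peak=False)
promo_cost = quota_used(day, peak=False, promo_active=True)
```

Under these assumptions the same workday costs three times as much quota at peak as it does during the off-peak promotion, which is why the timing of long sessions matters more than the plan's sticker price.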

Here is the useful summary.

| Option | Price | Best For | Catch |
|---|---|---|---|
| Lite Plan | $27 / quarter | Lightweight coding workloads | Lower speed, lower quota |
| Pro Plan | $81 / quarter | Heavier project work | More expensive, still quota-based |
| Max Plan | $216 / quarter | High-volume users | Built around guaranteed peak-hour performance |
| API Input | $1.40 / 1M tokens | Agent workflows, integrations | Real cost depends on quota multiplier |
| API Output | $4.40 / 1M tokens | Production coding runs | Output-heavy sessions add up fast |
| Cache Discount | $0.26 / 1M input tokens | Repeated contexts | Only helps specific workloads |

This is where the Z.ai Coding Plan becomes either a bargain or a trap, depending on how you work. If you run long supervised sessions off-peak, it can look very attractive next to top-end Western models. If your team tends to hammer agents during shared daytime windows, the effective cost shifts.
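For the API rows in the table, a rough per-session estimate looks like the sketch below. The rates come from the table. The token counts are made up, and the code assumes cached input tokens are billed at the cache rate instead of the normal input rate, which is a simplification you should check against the actual billing docs.

```python
INPUT_PER_M = 1.40    # USD per 1M input tokens (from the table)
OUTPUT_PER_M = 4.40   # USD per 1M output tokens (from the table)
CACHED_PER_M = 0.26   # USD per 1M cached input tokens (from the table)

def session_cost(input_tokens: int, output_tokens: int, cached_input_tokens: int = 0) -> float:
    """Estimate API cost in USD, assuming cached tokens replace normal input billing."""
    if cached_input_tokens > input_tokens:
        raise ValueError("cached_input_tokens cannot exceed input_tokens")
    fresh = input_tokens - cached_input_tokens
    return (
        fresh / 1e6 * INPUT_PER_M
        + cached_input_tokens / 1e6 * CACHED_PER_M
        + output_tokens / 1e6 * OUTPUT_PER_M
    )

# Hypothetical long agent session: 2M input tokens (1.5M of them cache hits), 300k output.
with_cache = session_cost(2_000_000, 300_000, cached_input_tokens=1_500_000)
without_cache = session_cost(2_000_000, 300_000)
```

In this made-up session the cached run comes to roughly $2.41 and the uncached run to roughly $4.12, so the cache discount only moves the bill when a large share of the context really is repeated.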

That said, Z.ai at least seems to understand the market it is chasing. The plans are not pitched as general chat subscriptions. They are sold like tooling for developers who actually use terminals, repos, and harnesses. That is the right framing.

4. The Best Workflow Is Not Monogamy

A lot of developers quietly converge on the same pattern. Use one model for planning, another for execution.

That is why the “dream team” workflow makes sense. Use Claude or another strong planner to write the project brief, system constraints, repo map, and acceptance criteria. Then hand execution to the model that is happiest living in a loop of code, tools, logs, and retries.

For many people, that may be the best LLM for vibe coding on one side, and GLM 5.1 on the other for the grind. That split sounds unromantic. It is also practical.

A simple version looks like this:

- Write a strict SYSTEM.md with architecture, constraints, and non-goals.

- Break the work into testable milestones.

- Let the execution model operate inside a harness like Claude Code or OpenClaw.

- Re-review after each meaningful checkpoint, not after every token burst.
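The steps above can be sketched as a small driver loop. Everything here is hypothetical scaffolding: `attempt` stands in for one burst of agent work inside whatever harness you use, `check` for a milestone's test suite, and `review` for the human or planner-model checkpoint. None of these are real harness APIs.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

@dataclass
class Milestone:
    name: str
    check: Callable[[], bool]  # e.g. run this milestone's tests and report pass/fail

def run_milestones(
    milestones: List[Milestone],
    attempt: Callable[[Milestone], None],
    review: Callable[[Milestone], None],
    max_attempts: int = 5,
) -> List[Tuple[str, int]]:
    """Work each milestone until its check passes, then pause for review."""
    log = []
    for m in milestones:
        for n in range(1, max_attempts + 1):
            attempt(m)              # one burst of execution-model work
            if m.check():
                log.append((m.name, n))
                review(m)           # review at the checkpoint, not after every burst
                break
        else:
            raise RuntimeError(f"{m.name}: no passing check after {max_attempts} attempts")
    return log

# Stub wiring so the sketch runs: each "attempt" just bumps a counter.
progress = {"parser": 0, "cli": 0}
reviewed = []
plan = [
    Milestone("parser", lambda: progress["parser"] >= 2),
    Milestone("cli", lambda: progress["cli"] >= 1),
]

def fake_attempt(m: Milestone) -> None:
    progress[m.name] += 1

log = run_milestones(plan, fake_attempt, lambda m: reviewed.append(m.name))
```

The attempt cap is the important part. It turns "let it run" into "let it run until a measurable check passes or a budget is spent", which keeps the reviewer's attention on checkpoints.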

This matters because GLM 5.1 seems strongest when the task is concrete, instrumented, and allowed to run. Give it a repo, a shell, a measurable target, and enough leash to iterate. Do not ask it to be your product manager, designer, staff engineer, and mascot at the same time.

5. Can You Run GLM 5.1 As The Best Local LLM For Coding?

This is where the internet gets a little too optimistic.

Can GLM 5.1 be the best local LLM for coding? In spirit, maybe. In practice, not for most people. It is a 754B Mixture-of-Experts model. That is not a cute little weekend download. That is infrastructure.
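A back-of-the-envelope calculation shows why. The sketch assumes FP8 weights take about one byte per parameter and counts weights only, ignoring KV cache, activations, and runtime overhead. The 8x 141GB figure is the configuration cited for the published FP8 deployment example, and the 24GB card is a hypothetical consumer GPU for comparison.

```python
import math

def weight_memory_gb(params_billions: float, bytes_per_param: float) -> float:
    """Weights only: 1e9 parameters at 1 byte each is about 1 GB (decimal)."""
    return params_billions * bytes_per_param

weights_fp8 = weight_memory_gb(754, 1.0)       # assumed 1 byte/param at FP8
server_total = 8 * 141                         # GB across the cited 8-GPU setup
headroom = server_total - weights_fp8          # left for KV cache and everything else
consumer_cards = math.ceil(weights_fp8 / 24)   # hypothetical 24GB cards, weights alone
```

Even under these generous assumptions, the weights alone fill about two thirds of an eight-GPU server and would need dozens of consumer cards, before any memory is spent on actually serving requests.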

The good news is that the weights are open under MIT, and the model supports frameworks like vLLM and SGLang. The better news, for normal humans with finite electricity bills, is that open release matters even if you never run the full thing at home. Open weights create downstream tooling, distillations, quantization efforts, and community experiments. That ecosystem effect is often more important than the fantasy that everyone is about to host a frontier model under their desk.

So no, most people are not running GLM 5.1 fully local today. But the release still matters to the local community because it expands what can be adapted, studied, and rebuilt.

6. GLM 5.1 Vs MiniMax M2.7, And Why That Debate Misses The Point

The GLM 5.1 vs MiniMax M2.7 discussion is real, but it can also be a little shallow. Budget models matter. Fast models matter. Cheap models matter. But once the task becomes long-horizon engineering, the question changes.

You are no longer asking which model writes a decent patch fastest. You are asking which model can survive ambiguity, recover from mistakes, and keep improving after the first idea stops working.

On that dimension, GLM 5.1 looks like the more serious engineering system. MiniMax may remain attractive where cost and speed dominate. Claude may still be the model people trust for cleaner early architecture. GPT-class systems still have strength in some harnesses and broader reasoning mixes. None of that disappears.

But the center of gravity shifts when the work is iterative and tool-heavy. That is where GLM 5.1 stops looking like just another strong coding model and starts looking like a model with a different operating philosophy.

7. The Real Risk, Hallucinations Over Long Runs

The obvious fear with long-running agents is not raw incompetence. It is drift.

A model can look fine for twenty minutes and then slowly turn into a confident improviser with root access. That is why the strongest part of the release is not simply “eight hours.” It is the claim that additional runtime can stay useful. That usefulness depends on self-evaluation, constraints, and environment design.

This is also where skepticism is healthy. A model that can self-correct in benchmark settings can still produce nonsense in open-ended work if the harness is weak, the prompts are vague, or the objective is mushy. Long-horizon ability is only valuable when the loop has discipline.
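One way to give the loop that discipline is to accept a change only when it passes a hard constraint and beats the best score so far, and to stop once progress stalls. The sketch below is a generic illustration under those assumptions. `propose` and `evaluate` are hypothetical callables, not part of any real agent framework.

```python
import itertools
from typing import Any, Callable, Optional, Tuple

def guarded_loop(
    propose: Callable[[Optional[Tuple[Any, float]]], Any],
    evaluate: Callable[[Any], Tuple[float, bool]],
    max_iters: int = 100,
    patience: int = 10,
) -> Optional[Tuple[Any, float]]:
    """Keep the best candidate that satisfies the constraint; stop on stall or budget."""
    best = None
    stale = 0
    for _ in range(max_iters):
        candidate = propose(best)
        score, constraint_ok = evaluate(candidate)
        if constraint_ok and (best is None or score > best[1]):
            best = (candidate, score)
            stale = 0
        else:
            stale += 1              # rejected: worse score or constraint violated
            if stale >= patience:
                break               # no accepted change for `patience` rounds
    return best

# Stub run: candidate 3 has the highest score but violates the constraint.
results = [(1.0, True), (3.0, True), (2.0, True), (5.0, False), (2.0, True), (2.0, True)]
counter = itertools.count()
best = guarded_loop(lambda prev: next(counter), lambda c: results[c], patience=3)
```

In the stub run, the highest raw score is rejected because it breaks the constraint, and the loop ends after three rounds without an accepted improvement. That is the behavior you want from a long run: a mushy objective cannot quietly win, and a stalled agent stops spending budget.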

So treat the release as a capability increase, not a permission slip to stop supervising.

8. Final Verdict, A Better Standard For Coding Models

The strongest thing about GLM 5.1 is not that it wins every chart. It does not. The strongest thing is that it pushes the conversation away from one-shot cleverness and toward sustained engineering effort.

That is the right direction.

We have spent too much time judging coding models like magic tricks. Can it make a game in one prompt? Can it patch a bug in ten minutes? Can it output a shiny demo before the audience gets restless? Those are fun tests. They are not the job.

The job is staying coherent when the repo gets ugly. The job is noticing that your first plan was wrong. The job is benchmarking, revising, and pushing through the dull middle where real software gets made. GLM 5.1 looks closer to that world than most of its peers.

So here is the practical takeaway. Do not judge this model by the launch video alone. Put it in a real harness. Give it a messy codebase. Give it tests. Give it a concrete target. Then see whether it still earns your trust four hours later. You can also download GLM 5.1 weights directly from Hugging Face to start experimenting.

That is the bar now. And if you care about serious agentic engineering, that is exactly where you should start.

Is GLM 5.1 better than Claude Opus 4.6 for coding?

For autonomous coding, GLM 5.1 currently has the stronger headline case. Z.ai says it scored 58.4 on SWE-Bench Pro, ahead of Claude Opus 4.6 at 57.3, and positions it as stronger on sustained long-horizon execution. For early architecture thinking and polished writing, some comparison coverage still gives Claude an edge, so the real split is execution stamina versus refinement quality.

Is the Z.ai Coding Plan worth the price?

Yes, if you mainly use coding agents and can manage quota carefully. Plan pricing starts at $27 per quarter for Lite, and the official docs say all plans support GLM 5.1. But Z.ai also says GLM 5.1 usage is deducted at 3× during peak hours and 2× during off-peak hours, which can change the value fast if you run long sessions at the wrong time.

Can I run GLM 5.1 locally on my computer?

Yes, but “local” means serious hardware today, not an average laptop. Z.ai’s GitHub and vLLM deployment docs show local serving support through vLLM, SGLang, xLLM, and KTransformers. The published FP8 example is aimed at 8x H200 or H20 GPUs with 141GB each, which puts full-fat local deployment in workstation or server territory.

How does GLM 5.1 compare to MiniMax M2.7?

GLM 5.1 looks stronger overall for agentic coding, while MiniMax M2.7 looks better on price. The published benchmark tables place GLM 5.1 at 58.4 on SWE-Bench Pro versus MiniMax M2.7 at 56.2, and community comparison pages keep framing GLM 5.1 as the stronger benchmark and workflow pick, with MiniMax as the cheaper alternative.

Was GLM 5.1 really trained without Nvidia GPUs?

Treat that claim carefully. Secondary reporting says the GLM-5 family was trained on Huawei Ascend 910B hardware without Nvidia, and the primary GLM-5 technical report discusses adapting GLM-5 to Chinese chip platforms including Huawei Ascend. The same full no-Nvidia claim is not stated as plainly on the current GLM 5.1 overview page, so it is best read as widely reported for the GLM-5 family, not as a settled GLM 5.1 fact.