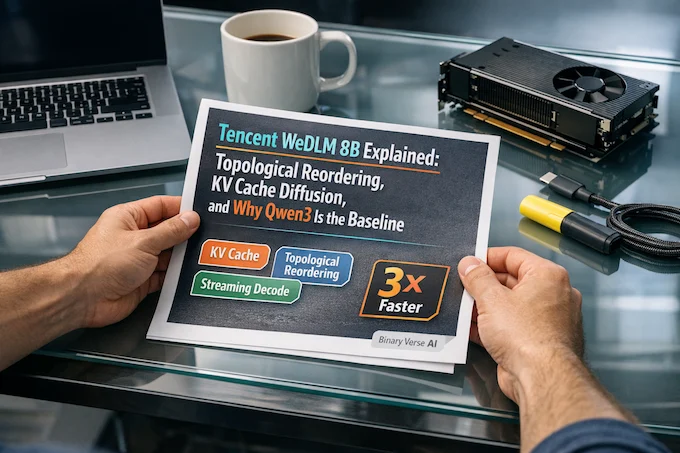

Tencent WeDLM 8B Explained: Topological Reordering, KV Cache Diffusion, and Why Qwen3 Is the Baseline

Introduction

Speed claims are cheap. Latency is not. Anyone can make a language model “faster” by picking an easy prompt, a short output, and a baseline that was never tuned. The harder problem is shaving seconds off the stuff people actually wait on. …