Introduction

This week feels like someone turned the difficulty slider up on the whole field. Models are learning to reason longer without getting slower, chips are getting rebuilt as full racks instead of parts, and “memory” is moving from a hacky add-on to a first-class skill. That mix raises the baseline, for builders and for failure modes.

Welcome to AI News January 10 2026, a weekly sweep that keeps the hype on a leash while still enjoying the fireworks. You will see AI updates this week across research, silicon, New AI model releases, products, and policy, plus a few stories where AI touches the physical world in a very human way.

If there is one through-line in AI News January 10 2026, it is interface design. Between layers in a network. Between an agent and its memory. Between a GPU and its network fabric. Between a person and their inbox or health records.

The Pattern In Three Moves

- Compute is becoming a system, not a chip, rack-scale co-design is now the product.

- Agents are becoming managers of tools and memory, not just talkers with long prompts.

- Evaluation is getting meaner, calibration and long-context robustness are the new stress tests.

Table of Contents

1. Deep Delta Learning Rewires ResNets With Learnable Reflections

Residual connections made deep nets trainable, and also snuck in a bias. If every layer can only add a bit, the network learns smooth accumulation, not sharp reversals. Deep Delta Learning, one of the sharper New AI papers arXiv this week, tweaks the shortcut with a learnable “delta operator” so the skip path can preserve, erase, or flip features along a learned direction.

The charm is its tiny control knob. A rank-1 perturbation with a single gated scalar can slide from identity to projection to reflection, while keeping the stability ResNets are loved for. It is architecture-as-geometry, and geometry decides which dynamics your model can express.

2. IQuest-Coder-V1 Pushes Autonomous Coding With Code-Flow Training And 128K Context

In AI News January 10 2026, the most interesting coding move is training on change, not snapshots. IQuest-Coder-V1 learns “code-flow,” commits, refactors, and the messy reality of software evolving under pressure. That aligns with agentic SWE work, and it shows up in strong results on SWE-Bench Verified and LiveCodeBench, two benchmarks that punish shallow autocomplete.

The family splits into Thinking and Instruct variants, plus Loop models that reuse parameters across iterations. With native 128K context, it can hold repo-scale state for reviews and refactors. Open source AI projects like this raise the bar for autonomous coding workflows.

3. Recursive Language Models Treat Prompts Like Executable Environments

Long context keeps getting sold as a bigger bucket. Recursive Language Models flip the framing. They park the whole prompt outside the transformer, then let the model write small programs to inspect it, slice it, and recursively call itself on subproblems, like a REPL for text.

That out-of-core mindset scales to inputs in the millions of tokens and holds up on long-context tests like needle search and OOLONG-style reasoning, without the usual context rot. The deeper lesson is procedural. Instead of feeding a model everything, you teach it to navigate information, which is how humans survive large codebases and long documents.

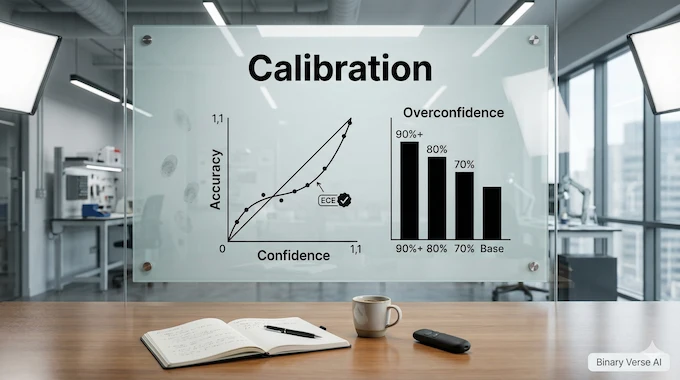

4. KalshiBench Exposes Overconfidence In Forecasting

KalshiBench asks a brutal question. When the future is genuinely unknown, do models admit uncertainty, or do they cosplay as oracles? By using prediction-market questions that resolve after training cutoffs, the benchmark blocks memorization and forces real epistemic calibration across politics, sports, finance, and climate.

The results sting. Frontier models stay overconfident even above 90 percent stated confidence, and calibration varies wildly despite similar accuracy. Metrics like Expected Calibration Error and Brier scores expose the gap. One model can be “right enough” and still be dangerously sure. For AI world updates headed into finance and medicine, this is the warning label we needed.

5. Falcon-H1R-7B Shows Small Models Can Reason Deeply

Falcon-H1R-7B is a small model with a big ambition: buy reasoning with smarter inference, not bigger pretraining. It blends Transformers with Mamba2-style sequence modeling, then does cold-start fine-tuning on long reasoning traces before reinforcement learning pushes better test-time thinking and longer, structured outputs.

The fun part is the economics. With deliberate compute at inference time, it competes with much larger systems on math and logic benchmarks, and it can generate extremely long solutions when needed, up to tens of thousands of tokens. This is a clean example of AI Advancements that scale by computation, not just parameters.

6. NVIDIA Rubin Turns The Rack Into The Product

In AI News January 10 2026, NVIDIA’s Rubin story is not a single GPU, it is the rack as a product. Think NVL72 racks and HGX server variants built from Vera CPU, Rubin GPU, NVLink 6, SuperNICs, DPUs, and Ethernet switches. The point is co-design, so networking and security stop being afterthoughts and start being performance features.

NVIDIA is pitching big economics, up to a 10x drop in inference token cost versus Blackwell, plus fewer GPUs for MoE training. The sneakier idea is “context memory” at the datacenter level. Sharing and reusing key-value caches across multi-turn sessions targets the real bottleneck in Agentic AI News workloads.

7. NVIDIA Alpamayo Opens A Reasoning VLA Stack For Autonomy

Autonomous driving fails in the long tail, the weird edge cases you did not train for. Alpamayo is NVIDIA’s bet that reasoning-based vision-language-action models can handle that tail better and explain decisions step by step. That is a direct hit on the debugging problem that haunts AV teams.

What makes it credible is the open loop. Open weights for a teacher VLA model, an open-source simulator, and open datasets with thousands of hours and rare scenarios. Train, stress-test, find failure patterns, then iterate. It is an “open ecosystem” move, and open source AI projects often win by making iteration cheap. What we cover in AI News January 10 2026, you can read full reviews by clicking following links.

8. SleepFM Reads One Night Of Sleep As A Health Forecast

SleepFM, published in Nature Medicine, treats polysomnography like a language and learns embeddings that predict disease risk from a single night. The scale is wild, more than half a million hours of sleep data across tens of thousands of people, with a channel-agnostic design that survives missing sensors across hospitals.

The output goes beyond sleep staging and apnea detection. A self-supervised objective aligns modalities, then the model forecasts risk across a huge spread of future conditions, including cardiovascular and neurological outcomes, and generalizes to unseen cohorts. The quiet point is big. Rich biosignals are not just diagnostics, they are early-warning telemetry for the body.

9. ChatGPT Health Becomes A Private Workspace For Medical Context

In AI News January 10 2026, OpenAI news shifts from “chat” to “organize.” ChatGPT Health is a separate, privacy-hardened workspace where you can ground conversations in real labs, records, and wearable data, without that information leaking into your normal chats or memory.

The engineering choice that matters is compartmentalization. Health data is isolated, encrypted, and not used to train foundation models, and the experience was shaped with heavy clinician feedback and HealthBench-style evaluation. The product pitch is simple. Healthcare is scattered across portals and PDFs, and people want a plain-language navigator before they see a real medical doctor.

10. Gemini Brings A Proactive Inbox To Gmail

Gmail is turning into a briefing surface. Gemini can summarize long threads, answer natural-language questions about your inbox, and help you draft replies that match the tone of the conversation. It is email as a searchable knowledge base, not a pile of messages you dread opening.

The shift is bigger than convenience. Your inbox is a database of receipts, decisions, and deadlines, and AI makes it queryable. Features like Help Me Write, smarter suggested replies, and a forthcoming AI inbox view push Gmail toward “next action” mode. Done well, it saves hours. Done poorly, it hallucinates commitments. The hard work is trust engineering, showing what the model used, constraining output, and letting users verify the underlying message text.

11. AMD’s Ryzen AI Push Makes Copilot+ PCs Feel Real

In AI News January 10 2026, AMD shows what “AI PC” means when it lands in shipping silicon. Ryzen AI 400 and PRO 400 hit up to 60 TOPS on-device, while Ryzen AI Max+ pushes high AI throughput and big unified memory into thin machines and compact workstations.

The sleeper win is software and tooling. ROCm support across Windows and Linux, plus tighter integration into creator and local-AI workflows, reduces the friction from “I own hardware” to “I can run models.” Add Ryzen AI Halo mini-PCs aimed at developers and ML-driven FSR upgrades for gaming, and AMD is building a full-stack identity, not just a chip line.

12. AI Search And Rescue Finds A Mountaineer From A Single Red Pixel

A missing person case in the Alps turned into a data problem. Drones captured thousands of high-resolution images, and an AI scanner flagged anomalies in color and texture that humans miss after hours of fatigue. One tiny red pixel led rescuers to a helmet, and to closure months later.

This is a grounded kind of artificial intelligence breakthroughs. It compresses search time in terrain where every minute costs money and risk. It also comes with real limits. Rock patterns and vegetation trigger false positives, and aerial analysis raises privacy questions. The best deployments treat AI as triage, with humans making the final call.

13. Boston Dynamics Atlas Moves From Demo To Production

Atlas is no longer a research flex. Boston Dynamics is shipping a production-ready, fully electric humanoid built for factories, with fleet tooling through Orbit and integration hooks for warehouse and manufacturing systems. Early deployments are booked for 2026, which is the closest thing robotics has to product-market proof.

The interesting bit is the scaling story. With high dexterity, large reach, and industrial payloads, Atlas is built for repetitive work at scale, and it can handle charging and battery routines with minimal babysitting. Pair that with Google DeepMind news about training with foundation models, and “teach once, clone across a fleet” starts to sound real.

14. Anthropic’s Mega-Round Hints At A New Capital Cycle

In AI News January 10 2026, the capital meter swings hard. Reports suggest Anthropic is exploring a mega-round that could value the company like strategic infrastructure, not a normal startup. The number matters less than the signal, investors are treating frontier labs as default platforms for enterprise workflows.

This also reshapes the competitive game. Model quality is table stakes, and compute access, distribution, and developer ecosystems become the differentiators. A giant private raise sets up an IPO narrative and pressures rivals to match the pace. If you want to understand the next “supercycle,” follow where the money is being forced to land.

15. MiniMax IPO Pop Shows Consumer AI Still Sells

MiniMax’s Hong Kong debut, and the first-day surge, reads like the market trying to price consumer AI adoption in real time. Investors love a clean story. Multimodal models, consumer apps people actually use, and a pipeline that looks like entertainment plus utility.

The detail to watch is product gravity. Tools like Hailuo AI for video generation and character-driven chat apps make adoption feel less like “work software” and more like daily habit. Hong Kong also looks like the launchpad for Chinese AI listings, which keeps the public-market feedback loop alive. The risk stays the same, consumer hype moves fast, retention is the truth serum.

16. Trump Frames AI As Jobs And A Race To Win

Trump is selling AI as an economic boom and a geopolitical contest, and he is signaling that regulation should not slow the rollout. The framing is jobs, growth, speed, and a “race to win,” paired with a shrug toward risks like cyber misuse and social harm.

The tension is structural. Trust, security, and accountability introduce friction, and competition narratives remove friction. Reports also point to a push for uniform federal control over state-by-state rules, which is exactly the kind of AI regulation news that decides who carries liability when systems fail. The next year will test whether speed wins, or whether the first big incident rewrites the script.

17. xAI’s Series E Funds Grok 5 And More Compute

xAI raised a massive Series E and framed it as a compute-to-product pipeline. Money becomes GPUs, GPUs become faster training, training becomes Grok releases, and distribution comes from being wired into X and even Tesla vehicles. It is the modern “full stack lab” playbook.

The infrastructure brag is the point. xAI talks about Colossus-scale clusters and GPU equivalents as the moat, plus fast iteration across Grok models, voice, and image tooling. The open question is durability. Can usage stay sticky as open models improve and rivals scale too? Either way, it is one of the Top AI news stories because it keeps the arms race hot.

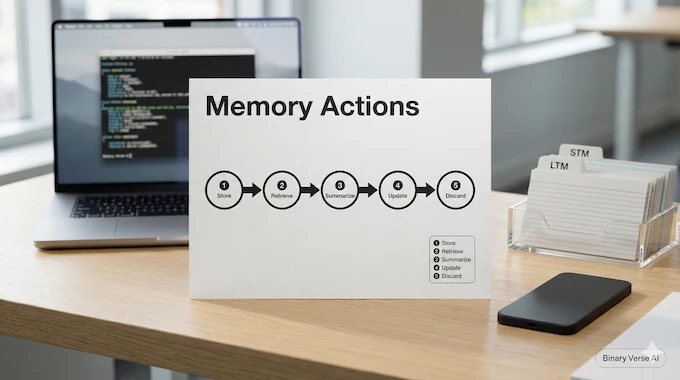

18. Agentic Memory Trains Agents To Remember On Purpose

In AI News January 10 2026, the least flashy paper might be the most practical. Agentic Memory argues long-horizon agents fail because they do not manage memory as a skill. AgeMem turns memory operations, store, retrieve, summarize, update, discard, into explicit actions the agent learns through reinforcement learning instead of brittle rules.

Training matters here. A progressive, three-stage setup teaches long-term storage, then short-term context control, then coordination under full tasks, with a GRPO-style objective to make sparse memory rewards learnable. If this holds, “memory management” becomes a core agent capability, not a bolt-on prompt trick.

Where This Leaves Us

The week’s AI News rhymes. We are watching systems become more deliberate, the model explores the prompt instead of swallowing it, the agent chooses what to remember, the datacenter chooses what to reuse. Hardware and software are meeting in the middle, and products are getting personal, inboxes, health, and work all in the loop.

That’s AI News January 10 2026, and it is a good moment to pick a thread and build something small. Try a long-context task, a calibration check, or a memory policy loop, then share what breaks. If you want more AI news this week January 2026 and Top AI news stories in your inbox each week, subscribe, and send me the weirdest arXiv link you found.

- https://arxiv.org/abs/2601.00417

- https://huggingface.co/IQuestLab/IQuest-Coder-V1-40B-Instruct

- https://arxiv.org/abs/2512.24601

- https://arxiv.org/pdf/2512.16030

- https://huggingface.co/tiiuae/Falcon-H1R-7B

- https://nvidianews.nvidia.com/news/rubin-platform-ai-supercomputer?ncid=no-ncid

- https://nvidianews.nvidia.com/news/alpamayo-autonomous-vehicle-development

- https://www.nature.com/articles/s41591-025-04133-4

- https://openai.com/index/introducing-chatgpt-health/

- https://blog.google/products-and-platforms/products/gmail/gmail-is-entering-the-gemini-era/

- https://www.amd.com/en/newsroom/press-releases/2026-1-5-amd-expands-ai-leadership-across-client-graphics-.html

- https://www.bbc.com/future/article/20260108-how-ai-solved-the-mystery-of-a-missing-mountaineer

- https://bostondynamics.com/blog/boston-dynamics-unveils-new-atlas-robot-to-revolutionize-industry/

- https://www.reuters.com/technology/anthropic-plans-raise-10-billion-350-billion-valuation-wsj-reports-2026-01-07/?utm_source=chatgpt.com

- https://www.reuters.com/world/asia-pacific/china-ai-firm-minimax-set-surge-hong-kong-debut-2026-01-09/

- https://www.nytimes.com/2026/01/08/us/politics/trump-artificial-intelligence.html

- https://x.ai/news/series-e

- https://arxiv.org/abs/2601.01885

AI News January 10 2026: What is NVIDIA Rubin, and why does it matter?

Rubin is NVIDIA’s rack-scale “one AI supercomputer” platform that co-designs CPU, GPU, NVLink, networking, and security to cut training time and reduce inference token costs. The real story is economics: it targets multi-turn agentic workloads where context and networking become the bottleneck, not just raw FLOPs.

AI News January 10 2026: What’s new about ChatGPT Health vs regular ChatGPT?

ChatGPT Health is a dedicated, separated space for health and wellness workflows, built to ground chats in your connected records and apps while keeping that data compartmentalized. The key promise is privacy boundaries plus practical outputs, like summaries, trend spotting, and doctor-visit prep, not diagnosis.

AI News January 10 2026: Why is SleepFM a big deal for medical AI?

SleepFM is a multimodal foundation model trained on large-scale polysomnography data to learn general sleep representations that transfer across tasks. The headline is forecasting from a single night’s signals, aiming to predict future disease risks years earlier than typical clinical detection, and generalizing across cohorts.

AI News January 10 2026: How do Recursive Language Models beat context limits?

Recursive Language Models move long prompts out of the model’s context window and into an “executable environment” the model can query. Instead of stuffing everything into one prompt, it programmatically slices, inspects, and recursively calls itself over relevant parts, enabling long-horizon reasoning at far larger effective context sizes.

AI News January 10 2026: Why did MiniMax’s IPO pop matter for AI markets?

MiniMax’s debut is being read as a signal that public markets will pay up for consumer AI narratives, especially when products show visible adoption. It also highlights Hong Kong’s role as a funding venue for Chinese AI labs, and how “AI tiger” listings are becoming sentiment barometers for the sector.