Introduction

A funny thing happened after ChatGPT shipped. The average paper got easier to read. Not “more correct,” not “more insightful.” Just smoother. The kind of prose that used to signal a careful researcher suddenly became the default setting.

That’s why the “AI slop” panic landed so hard in academia. If the writing is effortless, what’s left to trust?

Kusumegi and colleagues went after that question with something better than vibes. They analyzed large-scale data from three major preprint repositories, spanning January 2018 to June 2024, and asked what changes when authors start using large language models in their manuscripts.

Their summary is crisp: output accelerates, barriers fall for non-native English speakers, and engagement with prior literature broadens. At the same time, the old trick of judging work by how “good” it sounds stops working, right when volume spikes.

If you work with AI in scientific research, you’ve already felt this shift in small ways. Your collaborator sends a draft that reads like a journal article, even though it’s a Monday morning preprint. A student produces a decent related-work section in an afternoon. A reviewer tells you the paper is “well-written” but can’t articulate the contribution. The surface area of competence got bigger.

This post translates the results into a practical, time-respecting guide. The punchline is simple. AI in scientific research is democratizing participation. Discovery still requires rigor, and the scarce resource is trust.


1. AI In Scientific Research Is Exploding Output, But Output Is Not Discovery

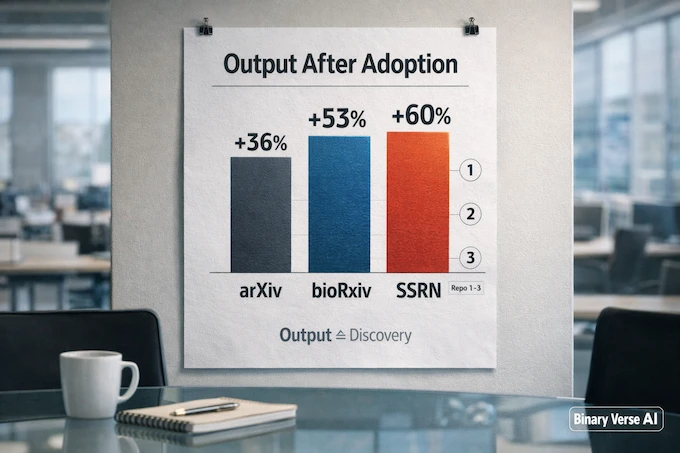

The headline number is real. After adoption, manuscript output rises across repositories. The estimated uplifts are 36.2% for arXiv, 52.9% for bioRxiv, and 59.8% for SSRN.

That doesn’t mean breakthroughs rose by 59.8%. It means the pipeline got wider, and AI in scientific research moved from a novelty to a macro factor.

1.1 Three Metrics People Confuse

- Paper output: count of manuscripts.

- Research productivity: experiments, code, datasets, analysis.

- Discovery productivity: ideas that survive replication and matter to someone else.

These tools can move the first metric fast. The job for the rest of us is to make sure the second and third keep up.

AI in scientific research signals

| What The Study Shows | What It Does Not Automatically Prove | Why You Should Care |
|---|---|---|
| Output rises after LLM adoption across arXiv, bioRxiv, SSRN | “More papers” equals “more discovery” | A wider pipeline strains review and raises the value of good filters |
| Gains are larger for groups likely facing higher writing costs | Everyone benefits equally | Lower writing friction can shift who gets to participate |
| Writing polish becomes a weaker or inverted quality signal | Writing no longer matters | Prose stops being proof of rigor, methods and verification take over |
| Literature discovery and citation patterns broaden | AI always reinforces the canon | Better search can widen attention toward books and newer work |


2. The Hard Data, What The Study Actually Measured

A lot of discourse about AI in science floats on anecdotes. This study is a welcome change.

In the main article, they pull from arXiv (1.2M preprints), bioRxiv (221K), and SSRN (676K) across 2018 to mid-2024. In the supplementary material they describe arXiv’s collection and the decision to exclude core AI categories, so the “AI boom” doesn’t swamp the “AI tool effect”.

They detect LLM use through statistical signatures in abstracts, trained by comparing pre-2023 human abstracts with GPT-3.5 rewrites. It’s not a courtroom proof of authorship. It’s a macro sensor that lets you ask, at scale, how LLM assistance correlates with output and behavior.
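To make the “statistical signature” idea concrete, here is a minimal sketch. It is not the authors’ detector. It assumes a toy setup: two tiny, made-up corpora (human abstracts and LLM rewrites), smoothed per-word log-odds, and a simple averaged score. The function names and example texts are hypothetical and exist only for illustration.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphabetic word tokens."""
    return re.findall(r"[a-z]+", text.lower())


def train_log_odds(human_texts, llm_texts, smoothing=1.0):
    """Return per-word log-odds; positive means more typical of the LLM corpus."""
    human = Counter(t for doc in human_texts for t in tokenize(doc))
    llm = Counter(t for doc in llm_texts for t in tokenize(doc))
    vocab = set(human) | set(llm)
    # Additive smoothing so words unseen in one corpus do not produce log(0).
    h_total = sum(human.values()) + smoothing * len(vocab)
    l_total = sum(llm.values()) + smoothing * len(vocab)
    return {
        w: math.log((llm[w] + smoothing) / l_total)
        - math.log((human[w] + smoothing) / h_total)
        for w in vocab
    }


def score(text, log_odds):
    """Average log-odds over known words; 0.0 when no word is known."""
    known = [log_odds[t] for t in tokenize(text) if t in log_odds]
    return sum(known) / len(known) if known else 0.0


# Hypothetical toy corpora, far too small for real use.
human_abstracts = [
    "we measure the rate of decay in the sample and report the error",
    "we test the model on two datasets and report the results",
]
llm_rewrites = [
    "this study delves into the intricate dynamics of decay, showcasing pivotal insights",
    "this study showcases a pivotal evaluation, underscoring intricate results",
]

log_odds = train_log_odds(human_abstracts, llm_rewrites)
llm_like = score("this study delves into pivotal insights", log_odds)
human_like = score("we measure and report the error", log_odds)
```

In this toy setup `llm_like` comes out positive and `human_like` negative. A score like this is a population-level signal at best: it says nothing reliable about any single abstract, which matches the “macro sensor” framing above.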

3. Why This Still Counts As Democratization, Even If It Starts With Writing

It’s easy to sneer at “writing help” as if it’s cosmetic. In practice, writing is the tax you pay to join the conversation. If the tax drops, more people join.

The authors explicitly argue that LLMs reduce the cost of writing in a second language and that the productivity effects are large enough to shift scientific production toward non-native English geographies. That’s democratization in the literal sense, and it’s one of the most concrete ways AI in scientific research shows up in the real world.

3.1 The Uneven Boost Is The Signal

For Asian-named scholars in Asian institutions, the estimated productivity gain ranges from 43.0% (arXiv) to roughly 89% (bioRxiv and SSRN). This is not “AI makes everyone slightly faster.” It’s “AI removes a bottleneck that hit some people harder than others.”

If you care about AI in science and technology as a societal story, this matters more than the thousandth debate about whether a model “understands.” AI in scientific research is changing who gets to speak fluently in the lingua franca of journals.

4. The Under-Hyped Win, AI Changes What Researchers Read

Most people talk about generation. The paper’s sneaky contribution is about discovery.

They exploit the February 2023 launch of GPT-4-powered Bing Chat as a natural experiment for AI-assisted search. Compared with Google-referred accesses, Bing users accessed books at a 26.3% higher rate, and the median age of accessed works dropped by about 0.18 years.

Those are small nudges with big consequences. In AI in scientific research, what you read shapes what you try. If your search tool reliably surfaces books, surveys, and “forgotten but useful” work, you spend less time reinventing wheels and more time arguing about the right wheels.

5. The “AI Slop” Objection, Why Fancy Writing No Longer Signals Quality

Now the uncomfortable part. Historically, many fields used writing as a crude filter. Not “good writing” in the literary sense, more like “this person seems to understand their own experiment.”

Kusumegi et al. show that this filter breaks. For LLM-assisted manuscripts, increases in writing complexity correlate with lower peer assessments of merit, so the relationship flips relative to human-written papers.

So yes, AI in scientific research can manufacture the surface cues we used to trust.

Here’s a practical way to think about it. Before, a paper had to spend effort in two places: doing the work, and explaining it well enough to survive review. Now explanation got cheaper. That doesn’t automatically make the work worse. It does mean reviewers can’t lean on prose as a shortcut.

The authors don’t mince words about the systems risk. If polished language becomes cheap, a deluge of superficially convincing but scientifically underwhelming work can saturate the literature and waste valuable time. That’s the AI slop fear, translated into peer review economics.

6. If Prose Is Cheap, What Replaces It As A Trust Signal

AI in scientific research forces a swap. We stop using language as a proxy for effort, and we start using auditability as a proxy for truth.

Here are trust signals that scale and don’t depend on your writing style.

6.1 The New Proxies For Seriousness

- Methods transparency: enough detail that another lab can try to break your claim.

- Data and code availability: not “available upon request,” available as a runnable bundle.

- Claim-to-evidence alignment: every headline sentence maps to a figure, table, or checked citation.

- Disclosure and provenance: where AI helped, where humans checked, and what was verified by hand.

This is the quiet upside. It pressures the ecosystem to reward the things we should have rewarded all along.

7. Hallucinations, Fake References, And AI Citations You Can’t Debug

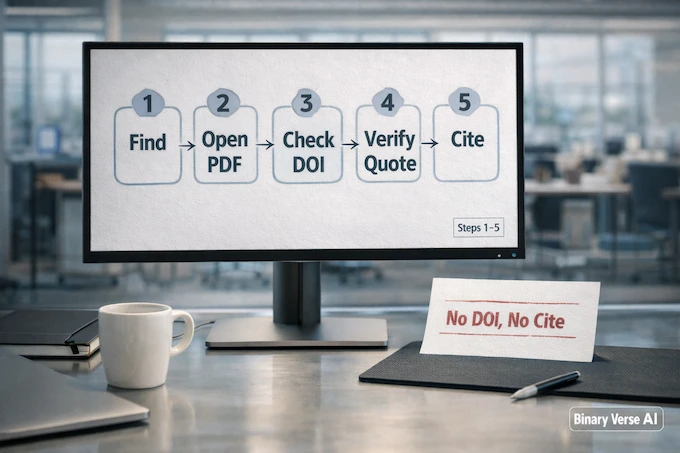

If you’ve ever chased a reference that doesn’t exist, you already understand the failure mode. AI citations can be confident, well-formatted, and wrong. The fix is procedural, and it needs to be normal, not heroic.

In AI in scientific research, citations are not decoration. They’re executable links to prior claims. If the links are fake, the paper becomes a dead end.

7.1 A Reference Workflow That Survives Speed

- No DOI or identifier, no cite.

- Verify title and authors in a trusted index before it enters your reference manager.

- Open the source for key claims. If a reference is load-bearing, read the actual page.

- Treat model suggestions as leads. Use them to broaden search, not to settle arguments.

- Keep a “verification ledger.” One shared note that records which AI citations were confirmed, which were dropped, and why.

This feels fussy until you do it once. Then it becomes the fastest way to work, because you stop re-debugging the same broken references across drafts and co-authors.
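The “no DOI, no cite” gate and the verification ledger can be sketched in a few lines. This is one possible shape, not a prescribed tool. The `Reference` and `Ledger` classes, the example titles, and the example DOIs are hypothetical. The pattern only checks that a string is shaped like a DOI; it does not prove the DOI resolves, and other identifiers (an arXiv ID, for example) would need their own check.

```python
import re
from dataclasses import dataclass, field

# Shape check only: "10.", a 4-9 digit registrant code, "/", then a suffix.
# Passing this test does not mean the DOI exists or points at the right paper.
DOI_PATTERN = re.compile(r"^10\.\d{4,9}/\S+$")


@dataclass
class Reference:
    title: str
    doi: str = ""
    opened_by_human: bool = False  # someone actually opened the source


@dataclass
class Ledger:
    confirmed: list = field(default_factory=list)
    dropped: list = field(default_factory=list)  # (title, reason) pairs

    def review(self, ref):
        """Apply the gates in order and record the outcome with a reason."""
        if not DOI_PATTERN.match(ref.doi.strip()):
            self.dropped.append((ref.title, "no valid identifier"))
        elif not ref.opened_by_human:
            self.dropped.append((ref.title, "source not opened by a person"))
        else:
            self.confirmed.append(ref.title)


ledger = Ledger()
for ref in [
    Reference("Hypothetical verified paper", "10.1234/example.5678", True),
    Reference("Hypothetical model suggestion", "", False),
    Reference("Hypothetical unread paper", "10.1234/example.9999", False),
]:
    ledger.review(ref)
```

After the loop, `ledger.confirmed` holds only the first reference, and `ledger.dropped` records why the other two were held back. Keeping the reason next to the title is the part that saves co-authors from re-checking the same entry.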

8. Peer Review Is The New Bottleneck

More output with the same reviewer pool is not a mild inconvenience. It’s structural strain. In AI in scientific research, the writing bottleneck is easing, so the review bottleneck becomes painfully visible.

The study’s warning about literature saturation points straight at peer review overload. The tricky part is that LLM-assisted submissions can look “review-ready” even when the underlying work is thin.

8.1 What AI Peer Review Can Actually Be

You’ll hear two extremes: “AI peer review will replace reviewers,” and “AI peer review is useless.” Both miss the point.

AI peer review, done responsibly, is triage and hygiene. An AI peer review tool can:

- flag missing methods details

- check consistency between claims and figures

- surface statistical red flags

- standardize checklists so humans spend time on substance

An AI peer review tool cannot decide whether a result matters, or whether a mechanism is plausible. That’s still human territory, and in AI in scientific research, it’s the territory that’s about to get more valuable.
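The “triage and hygiene” role is closer to linting than to judgment, and a rule-based sketch shows the flavor. Everything here is an assumption for illustration: the `REQUIRED_SECTIONS` checklist, the convention that captions start a line with “Figure N:”, and the sample draft. A real tool would need far more careful parsing.

```python
import re

# Hypothetical checklist; a venue would define its own required sections.
REQUIRED_SECTIONS = ["methods", "data availability", "limitations"]


def triage(manuscript):
    """Return a list of hygiene flags; an empty list means nothing was caught."""
    flags = []
    lowered = manuscript.lower()

    # Gate 1: every required section name appears somewhere in the text.
    for section in REQUIRED_SECTIONS:
        if section not in lowered:
            flags.append(f"missing section: {section}")

    # Gate 2: every figure mentioned in the text has a caption line.
    cited = set(re.findall(r"figure (\d+)", lowered))
    captioned = set(re.findall(r"^figure (\d+):", lowered, flags=re.MULTILINE))
    for number in sorted(cited - captioned, key=int):
        flags.append(f"figure {number} is cited but has no caption")

    return flags


draft = """Methods
We ran the assay twice. Results appear in Figure 1 and Figure 2.

Figure 1: Dose response curve.

Limitations
Small sample.
"""

flags = triage(draft)
```

For this draft, `flags` reports the missing data availability section and the uncaptioned Figure 2. Note what the sketch does not attempt: it has no opinion on whether the result matters.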

9. Who Benefits Most, The New Advantage Stack

The heterogeneity results suggest a clear story: the biggest gains accrue where writing cost was highest. That’s why the democratization claim isn’t a slogan. It’s measurable.

So the new advantage stack in AI in scientific research is not “who has the fanciest prompts.” It’s:

- who can verify fastest

- who can package reproducible artifacts

- who can read widely without drowning

- who can maintain taste under volume

If you’re hiring, mentoring, or training students, aim them at this stack. It’s the durable part.

10. What Institutions Should Do Next

The authors call out that science policy has to evolve as the production process evolves. In plain terms, if institutions keep rewarding volume, AI in scientific research will deliver volume. If institutions reward auditability, AI in scientific research will deliver better trust.

A reasonable institutional checklist:

- require AI usage disclosure in submissions

- require reproducibility artifacts when feasible

- adopt structured review forms with explicit gates

- fund replication and dataset work as first-class outputs

This is not anti-AI. It’s pro-science.

11. A Practical Workflow, Using AI In Scientific Research Without Producing Slop

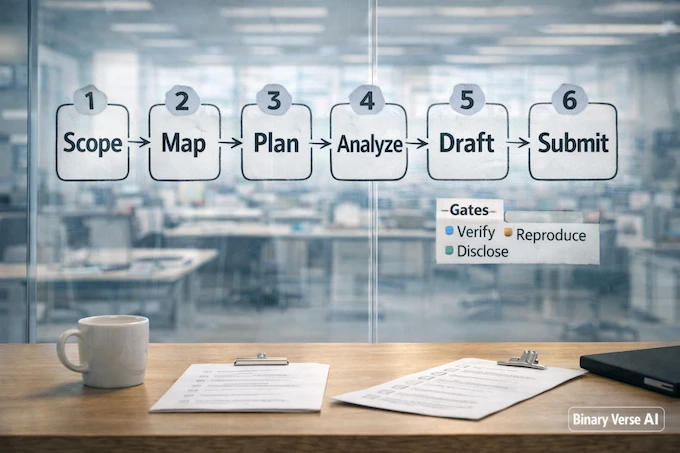

Here’s the bookmarkable version. Use the model where it expands your search space, and put gates where truth matters. This is the difference between AI in scientific research as leverage, and AI in scientific research as noise.

AI in scientific research workflow

| Stage | Use AI For | Gate Before You Move On |
|---|---|---|
| Scoping | alternative hypotheses, confounders, baselines | you can state the falsifiable claim in one sentence |
| Literature mapping | keyword expansion, clustering, book leads | every key citation is verified, not generated |
| Planning | protocols, prereg drafts, sanity checks | stopping criteria and evaluation metrics are explicit |
| Analysis | code scaffolds, test ideas, error hunting | results reproduce from a clean environment |
| Drafting | clarity, structure, second-language polish | claims match figures and methods match reality |
| Submission | checklist creation, reviewer Qs, limitation summaries | a colleague can rerun the core result and validate references |

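The gates in the workflow table are sequential: a later stage does not count until every earlier gate has passed. A minimal sketch of that rule follows. The `furthest_stage` function and the `status` values are hypothetical; in practice each boolean would come from a real check, such as a clean-environment rerun.

```python
# Stage names taken from the workflow table, in order.
STAGES = [
    "Scoping",
    "Literature mapping",
    "Planning",
    "Analysis",
    "Drafting",
    "Submission",
]


def furthest_stage(gates_passed):
    """Return (stages cleared so far, first blocking stage or None)."""
    cleared = []
    for stage in STAGES:
        # A missing entry counts as a gate that has not been passed.
        if not gates_passed.get(stage, False):
            return cleared, stage
        cleared.append(stage)
    return cleared, None


# Hypothetical project status.
status = {
    "Scoping": True,
    "Literature mapping": True,
    "Planning": True,
    "Analysis": False,  # results do not yet reproduce from a clean environment
    "Drafting": True,   # a polished draft does not unblock an earlier gate
}

cleared, blocked_at = furthest_stage(status)
```

Here the project is blocked at Analysis even though the draft is polished, which is the point of the table: draft speed cannot substitute for an earlier verification gate.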

11.1 Two Habits That Pay Off Immediately

- Run an “adversarial pass.” Ask the model to attack your paper as a skeptical reviewer. Then answer those critiques with evidence, not rhetoric.

- Separate “draft speed” from “truth speed.” Let the draft move fast. Make verification non-negotiable and visible.

These habits are boring, which is why they work. They also scale cleanly to teams using AI in scientific research across disciplines.

12. The Real Takeaway, Democratization Is Real, Trust Is The Scarce Resource

Before we get carried away, the paper is clear about limitations: detection is imperfect and relies on abstracts, it can’t identify which co-author used the model, adoption is nonrandom, and adoption timing can intertwine with productivity.

So don’t read this as “LLMs cause exactly 52.9% more biology.” Read it as: AI in scientific research is already correlated with a large shift in output and behavior, and the direction is stable enough that we should redesign our trust systems now.

That’s the core of the AI in scientific research moment. Words got cheaper. Trust did not.

If you’re a researcher, the move is not to avoid AI in scientific research. The move is to use it, and to become the person whose work is easiest to verify. Treat AI citations as leads. Use AI peer review as linting. Make your methods and artifacts boringly reproducible.

If you’re building tools, build for verification, not verbosity.

And if you’re a reader, reward the papers that show their work.

Next step: pick one project this month and implement the workflow table in Section 11. Share it with your lab or your students. Run a “no DOI, no cite” policy for one week. You’ll ship faster, you’ll dodge more mistakes, and you’ll help push AI in scientific research toward the part we actually want: more real discovery, less convincing noise.

Is AI good at science?

Yes, when “good” means speeding up the workflow: literature mapping, drafting, coding, and pattern-finding. The hard part is truth. You still need verification, grounded methods, and real experiments to turn fluent text into reliable science.

What is AI slop in scientific research?

AI slop is high-volume, high-fluency output with low trust. In AI in scientific research, the risk is papers that read clean and confident but fail basic rigor checks: weak methods, unverifiable results, or shaky references. Polished writing is no longer proof of quality.

How do I avoid AI slop when using LLMs?

Use AI for speed, then enforce verification gates. Require method transparency, release data and code when possible, and run claim-to-evidence checks before submission. Treat the model as an accelerator, not an authority, and make auditability the new trust signal.

Is it okay to use AI for citations?

It can be, if you treat AI as a finder, not a source of truth. For AI citations, only keep references you personally opened or validated. Confirm DOI, title, and authors. If there’s no DOI, PDF, or primary link, do not cite it.

Can an AI peer review tool replace human reviewers?

No. AI peer review can help with triage, consistency checks, stats red flags, and checklist enforcement. A good AI peer review tool saves reviewers time by catching obvious issues early. But humans still have to judge novelty, causal claims, and whether conclusions match evidence.