Most model launches arrive with a fireworks show of adjectives and a suspiciously clean benchmark chart. Claude Opus 4.7 is more interesting than that. It is not the strongest model Anthropic has ever trained. That title, at least in the company’s own framing, still belongs to the limited-access Mythos Preview. But Claude Opus 4.7 is the model ordinary developers can actually touch, deploy, stress, and complain about at 2 a.m. after a bad prompt blows through their token budget.

That matters. A lot.

Because the real story of Claude Opus 4.7 is not just that it got better. It is where it got better. The model is sharper on hard software engineering, stronger on high-resolution visual work, more literal about instructions, and better at carrying long, messy tasks without drifting into the kind of fake confidence that makes demos look great and production logs look cursed. At the same time, it comes with a more expensive-feeling tokenizer, a new xhigh effort mode, and an awkward sibling rivalry with Mythos Preview that tells you quite a bit about where Anthropic thinks the danger line sits.

1. Claude Opus 4.7 Benchmarks At A Glance

Before we get poetic, here is the scoreboard.

| Benchmark | Notes | Opus 4.7 | Opus 4.6 | GPT-5.4 | Gemini 3.1 Pro | Mythos Preview |
|---|---|---|---|---|---|---|
| SWE-bench Pro | Agentic coding | 64.3% | 53.4% | 57.7% | 54.2% | 77.8% |
| SWE-bench Verified | Agentic coding | 87.6% | 80.8% | n/a | 80.6% | 93.9% |
| Terminal-Bench 2.0 | Agentic terminal coding | 69.4% | 65.4% | 75.1% | 68.5% | 82.0% |
| Humanity’s Last Exam, no tools | Multidisciplinary reasoning | 46.9% | 40.0% | 42.7% | 44.4% | 56.8% |
| Humanity’s Last Exam, with tools | Multidisciplinary reasoning | 54.7% | 53.3% | 58.7% | 51.4% | 64.7% |
| BrowseComp | Agentic search | 79.3% | 83.7% | 89.3% | 85.9% | 86.9% |
| MCP-Atlas | Scaled tool use | 77.3% | 75.8% | 68.1% | 73.9% | n/a |
| OSWorld-Verified | Agentic computer use | 78.0% | 72.7% | 75.0% | n/a | 79.6% |
| Finance Agent v1.1 | Agentic financial analysis | 64.4% | 60.1% | 61.5% | 59.7% | n/a |
| CyberGym | Vulnerability reproduction | 73.1% | 73.8% | 66.3% | n/a | 83.1% |
| GPQA Diamond | Graduate-level reasoning | 94.2% | 91.3% | 94.4% | 94.3% | 94.6% |
| CharXiv Reasoning, no tools | Visual reasoning | 82.1% | 69.1% | n/a | n/a | 86.1% |
| CharXiv Reasoning, with tools | Visual reasoning | 91.0% | 84.7% | n/a | n/a | 93.2% |
| MMMLU | Multilingual Q&A | 91.5% | 91.1% | n/a | 92.6% | n/a |

The headline is not “wins everything.” It does not. The headline is that Claude Opus 4.7 makes its biggest gains in places developers actually feel: difficult coding, visual reasoning, computer use, and professional task quality. The jump from 53.4% to 64.3% on SWE-bench Pro is not cosmetic. That is a model moving from “promising assistant” toward “I might trust this with the annoying part of my afternoon.” The leap on CharXiv, from 69.1% to 82.1% without tools, is similarly revealing. Better vision is not just about looking at prettier images. It is about reading dense screenshots, design mocks, plots, and diagrams without turning every second pixel into fiction.
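If you would rather see the generation-over-generation movement than eyeball it, here is a minimal sketch that computes the point deltas between Opus 4.7 and Opus 4.6. The scores are copied from the table above; the function names and the choice of which rows to include are mine, purely for illustration.

```python
# Scores copied from the table above: (Opus 4.7, Opus 4.6), in percent.
SCORES = {
    "SWE-bench Pro": (64.3, 53.4),
    "SWE-bench Verified": (87.6, 80.8),
    "Terminal-Bench 2.0": (69.4, 65.4),
    "BrowseComp": (79.3, 83.7),
    "OSWorld-Verified": (78.0, 72.7),
    "CyberGym": (73.1, 73.8),
    "CharXiv Reasoning, no tools": (82.1, 69.1),
}


def point_deltas(scores):
    """Return {benchmark: Opus 4.7 minus Opus 4.6}, rounded to 0.1 points."""
    return {name: round(new - old, 1) for name, (new, old) in scores.items()}


def ranked(deltas):
    """Sort benchmarks from largest gain to largest regression."""
    return sorted(deltas.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    for name, delta in ranked(point_deltas(SCORES)):
        print(f"{name:<30} {delta:+.1f} pts")
```

Sorting by delta puts the gains and the regressions on one axis: the BrowseComp and CyberGym rows come out negative, which is a useful reminder that "better" here describes a profile, not a clean sweep.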

2. Claude Opus 4.7 Vs GPT-5.4, Where It Wins And Where It Absolutely Does Not

This comparison needs a little adult supervision.

If you cherry-pick SWE-bench Pro, Claude Opus 4.7 looks like the cleaner coding model. It beats GPT-5.4 there, 64.3% to 57.7%, and it also leads on MCP-Atlas and Finance Agent. That suggests a model that is unusually good at tool use, multi-step work, and the ugly middle of real tasks, where code, files, and context all have to stay coherent at once.

But if you cherry-pick Terminal-Bench 2.0 or BrowseComp, the picture changes. GPT-5.4 is ahead on both. So the honest reading is not that Claude Opus 4.7 has conquered the field. It is that Anthropic seems to have improved a specific performance profile: patient, instruction-tight, agentic coding and professional knowledge work. That is not the whole map. It is a very valuable part of the map.

This distinction matters because benchmarks love to collapse different kinds of intelligence into one leaderboard-shaped blur. Developers do not. They notice whether a model keeps its bearings after the sixth tool call. They notice whether it remembers the file it wrote 20 minutes ago. They notice whether “fix the bug, keep the tests, and explain the tradeoff” produces a fix, a regression, or a TED Talk.

By that standard, the most persuasive thing in the release material is not even a single metric. It is the recurring claim that the model can take instructions more literally, verify its own work, and handle long-running tasks with less supervision. That is exactly the sort of improvement that makes a model feel smarter than a single-digit benchmark delta suggests. It is also exactly the sort of improvement that breaks older prompts, because the old prompt accidentally relied on the model being fuzzy. Now it is not. Welcome to progress.

3. The Coding Leap, Why SWE-Bench Pro Matters More Than Hype

SWE-bench Pro is one of those tests that actually deserves attention, mostly because it is stubborn. Models cannot charm their way through it. They have to reason across codebases, change the right thing, and avoid detonating everything nearby.

That is why the 10.9-point jump from Opus 4.6 to Claude Opus 4.7 is the most important number in this launch. The model is also up to 87.6% on SWE-bench Verified, which makes the improvement look broad rather than accidental. If you have spent the last year watching models write impressive toy snippets and then trip over repository-scale reality, you can see why this gets people’s attention fast.

The interesting part is the texture of the improvement. Anthropic describes a model that is better at following instructions and better at checking its own outputs before declaring victory. That combination is catnip for serious coding work. A model that merely writes code is cheap entertainment. A model that writes code, reads the room, and notices when it might be wrong is much closer to a useful colleague. Still not a senior engineer. Let us stay calm. But closer.

This is also where SWE-bench Pro earns its place in the conversation. Software engineers are not just shopping for raw cleverness anymore. They are shopping for operational reliability. A model that gets slightly fewer points on a generalized reasoning exam but significantly more points on an agentic coding benchmark can be the better model for a team’s actual week.

4. Claude Opus 4.7 Token Limits, The Part Everyone Will Argue About

Now for the complaint section, because every model upgrade arrives with one, and this time the complaint is easy to understand.

4.1. The Tokenizer, xhigh, And Why Usage Suddenly Feels Different

Anthropic says Claude Opus 4.7 uses an updated tokenizer, and that the same input may map to roughly 1.0 to 1.35 times as many tokens depending on content type. It also says the model thinks more at higher effort levels, especially later in agentic runs. Translation: your prompt may cost more than you expect, and your long sessions may swell even when the model is doing useful work.

That does not automatically mean the model is less efficient. In fact, Anthropic argues the net effect was favorable on an internal coding evaluation. But from a user’s point of view, the emotional reality is simpler. When you hit a limit sooner, you do not care that the tokenizer got philosophically better. You care that your session just died halfway through refactoring auth.
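To make the multiplier concrete, here is a rough sketch that turns a token count measured under the old tokenizer into a best-case and worst-case estimate under the new one. The 1.0 and 1.35 bounds come from the figure quoted above; the function names and the idea of checking against a fixed budget are illustrative assumptions, not anything Anthropic ships.

```python
# Multiplier range quoted in the text: the same input may map to
# roughly 1.0x to 1.35x as many tokens under the updated tokenizer.
LOW_MULTIPLIER = 1.0
HIGH_MULTIPLIER = 1.35


def projected_token_range(old_tokens):
    """Return (low, high) token estimates for input that used to cost old_tokens."""
    if old_tokens < 0:
        raise ValueError("old_tokens must be non-negative")
    low = round(old_tokens * LOW_MULTIPLIER)
    high = round(old_tokens * HIGH_MULTIPLIER)
    return low, high


def fits_budget(old_tokens, budget):
    """True if even the worst-case estimate stays within budget."""
    _, high = projected_token_range(old_tokens)
    return high <= budget
```

A prompt that used to measure 100,000 tokens lands somewhere between 100,000 and 135,000 by this estimate. The real number depends on content type, so treat the upper bound as a planning figure and measure your own traffic.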

Here is the practical version.

| Problem | Why It Happens | What To Do |
|---|---|---|
| Input cost feels higher | Updated tokenizer can map the same text to more tokens | Shorten boilerplate, trim logs, dedupe pasted context |
| Long runs get expensive | Higher effort levels generate more thinking and output | Start with high, move to xhigh only when the task earns it |
| Agentic loops balloon | Later turns in long runs accumulate reasoning overhead | Set task budgets and break work into bounded phases |
| Responses ramble | Model is trying to be thorough | Prompt for concise output, explicit checklists, tight deliverables |
| Migration feels weird | Old prompts assumed looser instruction-following | Re-tune prompts and harnesses, do not just swap model IDs |

This is why the new task budgets matter. They are not marketing garnish. They are cost-control for people who want the model’s patience without donating a kidney to the token counter. And this is why the new xhigh effort mode is a mixed blessing. Great for hard problems, not something you should spray at every task like room freshener.
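The "start with high, move to xhigh only when the task earns it" advice can be sketched as a small escalation loop with a client-side token cap. To be clear about what is assumed: `run_task` is a hypothetical function you supply, the cap here is plain bookkeeping in your own harness, and none of this is the API's task budget feature, whose parameters the release material quoted here does not spell out.

```python
from typing import Callable, Tuple

# Effort labels as named in the text; the escalation order follows the
# table's advice to start with high and reach for xhigh only when needed.
EFFORT_LADDER = ("high", "xhigh")


def run_with_escalation(
    run_task: Callable[[str], Tuple[bool, int]],
    token_budget: int,
) -> Tuple[str, int]:
    """Try each effort level in order until the task succeeds or the budget runs out.

    run_task is a hypothetical caller-supplied function: it takes an effort
    label and returns (succeeded, tokens_used). Wire it to your own client.
    Returns (outcome, tokens_spent), where outcome is the effort level that
    succeeded, or "budget_exhausted" or "failed".
    """
    spent = 0
    for effort in EFFORT_LADDER:
        # Stop before starting another attempt once the cap is reached.
        if spent >= token_budget:
            return "budget_exhausted", spent
        succeeded, used = run_task(effort)
        spent += used
        if succeeded:
            return effort, spent
    return "failed", spent
```

The design choice worth copying is the order of checks: the budget is tested before each attempt, so a failed cheap run cannot silently roll into an expensive one.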

5. Claude Opus 4.7 And Claude Mythos Preview, The Awkward Family Photo

Anthropic’s own documents make something very clear. Claude Opus 4.7 is not the company’s frontier model. Mythos Preview is stronger on every relevant axis they measured, and Opus 4.7 does not advance the capability frontier. That is unusually candid, and honestly refreshing. Most companies would hide that sentence in a cave. Anthropic put it in the paperwork.

The reason this matters is cybersecurity. Anthropic explicitly says it kept Mythos Preview limited and used Claude Opus 4.7 as the first generally available model to deploy new cyber safeguards on a less capable system. It even says the company experimented during training with reducing Opus 4.7’s cyber capabilities relative to Mythos. That is not a rumor. That is the release story.

So yes, there is a kind of nerf here, if you want to use the internet’s favorite word. But it is not random product vandalism. It is policy made visible. Anthropic appears to be drawing a line between the model it is willing to hand the broader market and the model it still wants behind velvet rope and supervision.

From a technical perspective, that split is fascinating. From a product perspective, it is mildly frustrating. From a safety perspective, it is probably inevitable.

6. Claude Code Ultrareview, Auto Mode, And The New Developer Ergonomics

The most underrated part of this launch may be the tooling around the model.

Anthropic added a new xhigh effort level, task budgets in the API, a new /ultrareview command in Claude Code, and wider access to auto mode for Max users. On paper, that sounds like feature salad. In practice, it tells a coherent story. The company thinks people are using Claude Opus 4.7 for longer, more autonomous workflows, and it is trying to give them better knobs rather than pretending one default fits every situation.

The slash command is especially telling. A dedicated review session that reads changes and flags bugs or design issues is exactly what you build when you know developers are no longer just asking for snippets. They are asking for a second set of eyes. Maybe a third. Preferably one that does not book meetings.

Auto mode points in the same direction. It is basically an attempt to make long-running tasks less interrupt-heavy without pushing users into the far riskier territory of “skip all permissions and pray.” That is good product sense. Real agentic work dies from friction long before it dies from philosophy.

7. Vision, Memory, And Real Work, The Improvements People Will Notice Without Reading A Single Benchmark

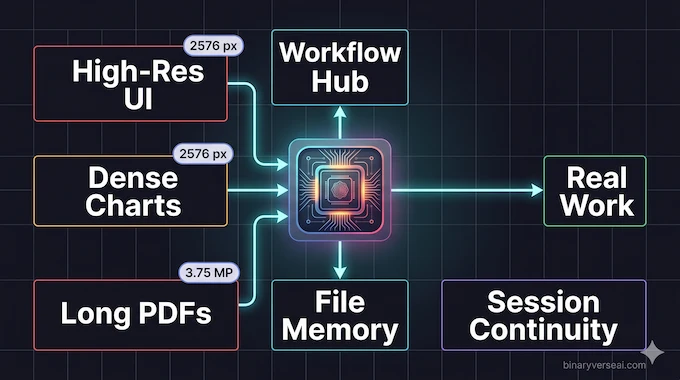

One of the easiest mistakes in model analysis is to treat “better vision” like a side quest. It is not. Claude Opus 4.7 now accepts images up to 2,576 pixels on the long edge, about 3.75 megapixels, more than three times prior Claude models. That makes a practical difference in UI inspection, chart reading, document extraction, and any task where detail density matters. It also tracks with the CharXiv jump, which is the kind of benchmark improvement that usually signals a real change in visual reasoning rather than cosmetic tuning.
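If you are preparing screenshots or mocks yourself, a small helper can tell you what dimensions an image needs to fit under the long-edge limit. This is a sketch under stated assumptions: the 2,576-pixel figure comes from the text above, the function names are mine, and it only does the arithmetic. Whether the service resizes oversized images for you is not something this article's sources settle, so the helper simply computes target dimensions on the client side.

```python
MAX_LONG_EDGE = 2576  # pixel limit on the long edge, as quoted in the text


def fit_long_edge(width, height, max_long_edge=MAX_LONG_EDGE):
    """Scale (width, height) down so the longer side is at most max_long_edge.

    Aspect ratio is preserved; images already within the limit are unchanged.
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    long_edge = max(width, height)
    if long_edge <= max_long_edge:
        return width, height
    scale = max_long_edge / long_edge
    # Clamp to at least 1 pixel so extreme aspect ratios stay valid.
    return max(1, round(width * scale)), max(1, round(height * scale))


def megapixels(width, height):
    """Pixel count in millions, rounded to two decimals."""
    return round(width * height / 1_000_000, 2)
```

For example, a 5152 by 2912 capture scales to 2576 by 1456, which works out to about 3.75 megapixels, consistent with the figure quoted above. A 1920 by 1080 screenshot is already under the limit and passes through untouched.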

Then there is memory. Anthropic says the model is better at file system-based memory across long, multi-session work. That sounds boring until you have watched a model lose the plot for the fourth time in one afternoon. Continuity is a hidden tax on all AI workflows. Every reminder, every re-upload, every “as I said earlier” is a little productivity leak. Better memory is not glamorous, but it compounds.

The same goes for professional tasks. Finance Agent, GDPval-AA, stronger presentations, and tighter cross-task integration are all signals that the model is not just getting better at STEM contest questions. It is getting better at the stuff that fills actual calendars. As Claude AI notes, this reflects a broader push toward models that perform across real-world professional workflows, not just curated evaluations.

8. Safety, Alignment, And The Fine Print You Ignore At Your Own Expense

The safety story is more nuanced than “safer” or “less safe.”

Anthropic says Claude Opus 4.7 broadly matches the safety profile of Opus 4.6, while improving on honesty, hallucination rates, prompt-injection resistance, and some forms of malicious agentic misuse. At the same time, it notes a modest weakness in overly detailed harm-reduction advice on controlled substances, and it is still generally weaker than Mythos Preview on many alignment measures. That is not contradictory. It is what real model evaluation looks like: messy, domain-specific, and resistant to bumper stickers.

One result worth noticing is the browser-use prompt injection benchmark, where deployed safeguards reduced successful attacks against Opus 4.7 to zero in the tested environments, matching Mythos Preview. That is a strong outcome, even with the usual caveat that benchmarks are not the internet and attackers do not politely reuse the same tactics forever. Still, it is the kind of result that suggests Anthropic is getting more serious about agentic safety at the exact moment agentic usage is becoming product-default behavior.

9. Pricing, Availability, And Whether You Should Actually Switch

The good news is that pricing stayed put. Claude Opus 4.7 is listed at $5 per million input tokens and $25 per million output tokens, with availability across Claude, the API, Vertex AI, Microsoft Foundry, and Bedrock, where Anthropic notes research preview status on AWS for this model. The context window is 1 million tokens, max output is 128k in the synchronous Messages API, and up to 300k output tokens are available in Message Batches with the relevant beta header. Mythos Preview remains invite-only. For a full breakdown of how this stacks up across providers, see our LLM pricing comparison.
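Since the prices are flat per-token rates, the cost of a single request is simple arithmetic. Here is a minimal sketch using the list prices quoted above; the function name is illustrative, and it deliberately ignores anything the article does not cover, such as batch or caching discounts.

```python
# List prices quoted in the text, in US dollars per million tokens.
INPUT_USD_PER_MTOK = 5.0
OUTPUT_USD_PER_MTOK = 25.0


def request_cost_usd(input_tokens, output_tokens):
    """Cost of one request at list price, ignoring any discounts or caching."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    cost = (
        input_tokens * INPUT_USD_PER_MTOK
        + output_tokens * OUTPUT_USD_PER_MTOK
    ) / 1_000_000
    return round(cost, 6)
```

A request with 200,000 input tokens and 10,000 output tokens comes to $1.25 at these rates. Output tokens cost five times as much as input tokens, which is why long, chatty agentic runs hurt more than large pasted contexts.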

So should you migrate?

- Yes, if your workflow is hard coding, multi-step tool use, high-resolution visual input, or long professional tasks where instruction fidelity matters more than benchmark omnipotence.

- Not blindly, if your current setup is finely tuned, cost-sensitive, or heavily dependent on prompt patterns that assumed the model would interpret things loosely. In that case, treat migration like a real migration. Run traffic. Measure token drift. Re-tune prompts. Then switch.

That is the adult answer. Less dramatic, more useful.
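"Measure token drift" can be as simple as replaying the same requests against both models and comparing totals. The sketch below assumes you have already collected per-request token counts from your current model and from the candidate; the function name and the three summary statistics are my choices for illustration, not a prescribed methodology.

```python
from statistics import median


def token_drift(old_counts, new_counts):
    """Summarize how token usage changed across the same prompts.

    old_counts and new_counts are equal-length lists of token totals measured
    on the current and candidate model for identical requests.
    """
    if len(old_counts) != len(new_counts) or not old_counts:
        raise ValueError("need two non-empty lists of equal length")
    if any(old <= 0 for old in old_counts):
        raise ValueError("old counts must be positive")
    ratios = [new / old for old, new in zip(old_counts, new_counts)]
    return {
        "median_ratio": round(median(ratios), 3),
        "max_ratio": round(max(ratios), 3),
        "total_ratio": round(sum(new_counts) / sum(old_counts), 3),
    }
```

The median tells you what a typical request looks like, the maximum flags the prompt shapes that inflate worst, and the total ratio is the number that actually shows up on the bill.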

10. Final Verdict, The Model To Watch Is The One You Can Actually Use

The cleanest way to describe Claude Opus 4.7 is this: it is the most convincing generally available Anthropic model so far, not because it wins every chart, but because its improvements line up unusually well with real work.

It codes better where it counts. It sees more. It follows instructions more literally. It remembers more across sessions. It gives developers better controls over effort, reviews, and long runs. It also asks you to pay attention: to tokens, to harness design, to safety boundaries, and to the uncomfortable fact that the strongest model in the family is still sitting in a separate room under closer guard.

That is why this release feels important. It is not a victory lap. It is a productization moment.

If you build with models for a living, do not just read about Claude Opus 4.7. Put it on a repo that has hurt your feelings before. Hand it a dense screenshot. Give it a bug report written by an exhausted human. Then watch what happens. That is where the truth is.

Why am I hitting my Claude Opus 4.7 usage limits so fast?

Because Claude Opus 4.7 uses a new tokenizer that can map the same text to about 1.0x to 1.35x as many tokens as Opus 4.6, and higher effort settings can make the model spend more tokens across thinking, tool calls, and long agentic traces. High-resolution images can increase usage too.

What is the difference between Claude Opus 4.7 and Claude Mythos Preview?

Claude Opus 4.7 is Anthropic’s most capable generally available model, while Claude Mythos Preview is the stronger model that remains limited-access. Anthropic says Mythos scored higher on the relevant evaluations, and it also says Opus 4.7 was the first general-access model used to test new cyber safeguards after training efforts that reduced some cyber capabilities relative to Mythos.

How much better is Claude Opus 4.7 at coding than Opus 4.6?

On the benchmark table provided in the system card materials, Claude Opus 4.7 rises from 53.4% to 64.3% on SWE-bench Pro and from 80.8% to 87.6% on SWE-bench Verified. That is the clearest sign that Anthropic pushed hardest on difficult, agentic software engineering work.

Does Claude Opus 4.7 have better vision capabilities?

Yes. Claude Opus 4.7 is Anthropic’s first Claude model with high-resolution image support up to 2576px or 3.75MP, up from the prior 1568px or 1.15MP limit. That makes it better suited for dense screenshots, charts, diagrams, and UI-heavy workflows.

What is the new /ultrareview feature in Claude Code?

/ultrareview is a Claude Code command for comprehensive cloud-based code review using parallel multi-agent analysis. You can run it on your current branch with no arguments, or point it at a specific GitHub PR with /ultrareview <PR#>. Anthropic also paired this rollout with easier auto mode access.