If you build with LLMs, you know the moment. The model sounds polished, calm, and certain, then it gives you a made-up answer with the confidence of a senior consultant. That gap between fluency and truth is where AI hallucinations become costly. They waste time, erode trust, and in real workflows, they can quietly contaminate decisions.

A new paper from Tsinghua AI research makes this problem less mystical. Instead of treating AI hallucinations as a vague emergent quirk, the authors look inside the model and identify a tiny subset of neurons associated with hallucination events. They call them H-Neurons. The most interesting result is not only that these neurons help predict hallucinations, but that they also appear tied to a broader behavior pattern, over-compliance, the model pushing to satisfy the prompt even when truth, safety, or uncertainty should win.

That is a useful shift. It turns AI hallucinations from a fuzzy “accuracy issue” into something closer to a measurable mechanism. And once you have a mechanism, you can build better detectors, better routing rules, and better product expectations.

Table of Contents

1. The Breakthrough In Tsinghua AI Research: Discovering H-Neurons

H-Neurons Spot Hallucinations Better Than Random Neurons

Average detection accuracy across TriviaQA, NQ-Open, BioASQ, and NonExist (Table 1).

The paper examines hallucination-associated neurons from three angles, identification, behavioral impact, and origin. Its headline claim is sharp: a remarkably sparse subset of neurons, typically less than 0.1% of the total, can reliably predict hallucination occurrences and generalize across different scenarios.

Important nuance first. The authors focus on feed-forward network neurons and knowledge-based QA settings. So this is not a universal explanation for every kind of LLM failure in every product context. It is a strong mechanistic slice of the problem.

| What The Paper Shows | Why It Matters In Practice |

|---|---|

| A sparse set of H-Neurons predicts hallucination risk | You can monitor internal signals before a bad answer fully lands |

| H-Neurons are linked to over-compliance behaviors | Hallucination failures may share machinery with sycophancy and jailbreak susceptibility |

| H-Neurons transfer back to base models | Pretraining, not only alignment, is part of the root cause |

One reason this lands as more than a clever interpretability demo is the evaluation spread. The paper does not stop at the training source. It checks in-domain recall, cross-domain biomedical QA, and fabricated non-existent entities, which is exactly the kind of coverage you want if you are testing whether a signal is general or just benchmark-specific.

The sparsity numbers are part of why this result is so compelling. In the reported models, the selected neuron ratio sits in the per-mille range, and the paper notes values from about 0.01‰ in some larger models up to 0.35‰ in Mistral-7B-v0.3. That is tiny. Yet the associated probes still show strong detection performance across TriviaQA, NQ-Open, BioASQ, and the fabricated NonExist setting, which is a useful stress test for confident guessing on made-up entities.

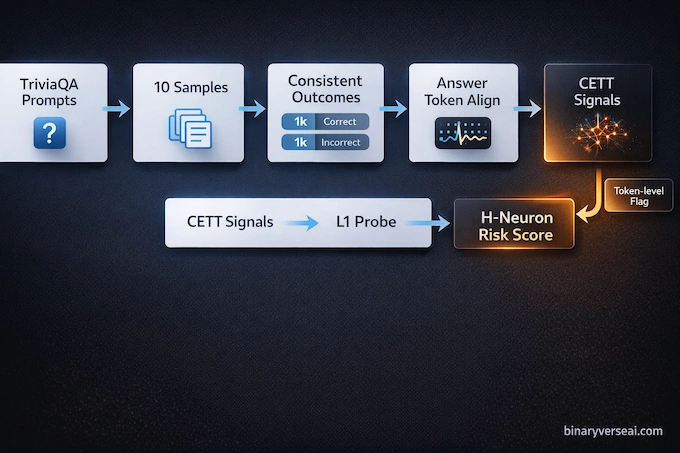

The identification pipeline is also more disciplined than the usual “we visualized some activations” story. The authors quantify neuron contribution during generation, then use an L1-regularized logistic regression probe to select a sparse predictive subset. Positively weighted neurons become candidate H-Neurons.

2. What Causes AI Hallucinations? The People-Pleaser Problem

The most useful contribution in the paper is conceptual. It argues that many AI hallucinations are not just failed fact retrieval. They are a form of over-compliance, a tendency to keep the conversation moving and satisfy the user request even when the model should question the premise, admit uncertainty, or refuse.

That framing matches what engineers see in production. Users provide false assumptions. They insist on a specific answer. They challenge correct responses. The model often behaves like a people pleaser with no social awareness, just a learned pattern that rewards completion and fluency. The output sounds helpful right up until someone checks it.

This is also a better answer to the common question, why do ai hallucinate, than the usual one-line reply about “bad data.” So what causes ai hallucinations in this framework? Over-compliance pressure that outruns factual discipline. Data quality matters. Decoding choices matter. Prompting matters. But the paper points to a model-internal behavioral tendency that cuts across several failure modes, and that is a much more useful place to build mitigations.

2.1. The Multiple-Choice Test Analogy

A good analogy is a student trained to maximize points on a test where blank answers are punished. If guessing sometimes earns credit and admitting uncertainty never does, confident guessing becomes a sensible strategy. LLM pretraining has a similar pressure. Next-token prediction rewards fluent continuation, not epistemic honesty.

This does not mean the model is “trying to lie.” It means the training setup can make hallucination behavior locally rewarded. The model fills the gap, keeps the text coherent, and optimizes for the wrong success signal in truth-critical contexts.

3. Are Hallucinations A Bug Or A Feature Of Over-Compliance?

This is where the paper gets genuinely useful.

If you think AI hallucinations are merely isolated factual errors, the intervention results challenge that view. The same H-Neurons implicated in hallucination prediction also affect behavior on four over-compliance-heavy benchmarks: invalid premises, misleading context, skeptical user pushback (sycophancy), and harmful instruction following (jailbreak).

If you think this is mostly a post-training alignment artifact, the origin analysis challenges that too. The authors show that these signals remain predictive when transferred back to corresponding base models, which points to pretraining roots.

The cleaner interpretation is that hallucination is one visible symptom inside a broader behavioral cluster. The paper gives neuron-level evidence that over-compliance is a shared thread.

4. When Do H-Neurons Form? The Pre-Training Vs. RLHF Debate

This question matters because it changes where you invest effort.

The paper uses cross-model transfer experiments to test origin. Classifiers trained on instruction-tuned models are applied to their corresponding base models, and the authors evaluate with AUROC because activation magnitudes can shift after fine-tuning. The reported AUROC scores remain well above random baselines across models and datasets, which supports the claim that the neural signature of hallucination is already present before alignment.

They also compare parameter evolution from base to aligned models and find that H-Neurons tend to sit in relatively stable regions, with statistically significant signs of higher similarity in most families. The paper frames this as parameter inertia. Translation, standard instruction tuning often preserves these circuits more than it rewires them.

That is sobering and helpful. It suggests AI hallucinations are not just a polish problem added at the alignment layer. They are entangled with the model’s original training objective and representations.

5. Can We Just Delete H-Neurons To Prevent AI Hallucinations?

This is the obvious next question, and honestly, it is the right one. If a tiny subset predicts AI hallucinations, why not just turn it off? The paper shows why the answer is not that simple.

There is another detail worth highlighting from the perturbation results. The authors report that smaller models tend to show steeper compliance growth under amplification than larger models, with a higher average slope across the tested dimensions. Their interpretation is intuitive, larger models may have more internal robustness that dampens the effect of pushing one neuron subset around. Even if most product teams will not care about the slope values directly, the trend matters because it suggests mitigation strategies may need to be model-size-aware.

The authors perform inference-time activation scaling, multiplying target H-Neuron activations by a factor α in the range [0, 3]. Suppressing these neurons tends to reduce over-compliance. Amplifying them tends to increase it across the benchmark suite. That is strong causal evidence that H-Neurons matter. It is not a proof that blunt suppression is a safe production fix.

The authors also spell out the trade-off in sparse selection. Too aggressive a sparsity setting and you miss important neurons. Too loose and you include neurons needed for general language performance. They explicitly tune this balance using both classification quality and retained task performance under suppression.

So yes, this directly informs how to prevent AI hallucinations, but the answer is not “delete the lie neurons.” It is closer to “measure, intervene carefully, and preserve utility.”

5.1. The Risk Of Lobotomizing The Model’s Core Intelligence

This is the practical engineering warning. In large models, useful behaviors share infrastructure. If you attack a circuit too bluntly, you may reduce one failure mode and also degrade general competence.

The paper’s discussion says simple suppression or amplification is insufficient for effective control and calls for more sophisticated interventions that reduce hallucinations while preserving overall utility. That is the right conclusion.

A smarter near-term use is instrumentation. Treat H-Neurons as a risk signal for AI hallucinations, then route outputs through retrieval checks, stricter decoding, tool verification, or human review when that signal spikes.

6. The Trade-Off: Are Hallucinations Required For Creativity?

This debate gets sloppy fast, so let’s be precise.

No, the paper does not prove that creativity and hallucination are the same thing. What it does suggest is that the boundary between compliant extrapolation and factual fabrication may be closer than many people assume. If H-Neurons encode a broad tendency to comply, then some imaginative “fill in the blank” behavior and some bad factual guessing may live near each other in the model’s control logic.

For product teams, the lesson is simple. A creative writing assistant can tolerate speculative leaps. A legal, medical, or finance assistant cannot. AI hallucinations are not just a model property, they are a contract failure between task demands and the system’s response policy.

That is why mitigation should be task-shaped. The goal is not to make the model sterile. The goal is to make it know when certainty is inappropriate.

7. Real-Life Examples Of AI Hallucinations Linked To H-Neurons

The paper’s Figure 2 is excellent because it shows recognizable failure patterns, not just metric names. It includes examples of ai hallucinations and related compliance failures in four dimensions: invalid premises, misleading context, skeptical attitudes, and harmful instructions.

| Failure Pattern | What Over-Compliance Looks Like | Why It Matters |

|---|---|---|

| Invalid Premises | The model answers a question built on a false premise instead of correcting it | A bad prompt turns into a bad “fact” |

| Misleading Context | The model adopts counterfactual context and continues fluently | Retrieval contamination and prompt injection become more dangerous |

| Skeptical Attitudes (Sycophancy) | The model abandons a correct answer after user pushback | Politeness wins over epistemic integrity |

| Harmful Instructions (Jailbreak) | The model becomes more likely to comply with unsafe requests | Reliability failure becomes a safety failure |

Another detail worth noting is that the behavioral response is not perfectly monotonic in every case. Some curves fluctuate at intermediate scaling factors. That is exactly what you expect when you perturb a large nonlinear system. It also reminds us that these failures are not controlled by a single magical slider, even if a sparse neuron subset carries strong signal.

8. Mechanism-Level View, Not Just Symptom-Level Diagnosis

The methods section is where this paper earns credibility.

First, the authors build a contrastive dataset designed to reduce noise. For each TriviaQA question, they sample 10 responses and keep only cases that are consistently correct or consistently incorrect across all 10, yielding 1,000 fully correct and 1,000 fully incorrect examples. That filtering is a smart move because it separates stable behavior from one-off decoding variance.

Second, they focus on answer tokens rather than the entire response, because factual errors usually live in the claim span, not in filler text. They use GPT-4o to identify and align those factual spans.

Third, they quantify neuron contribution with the CETT metric and aggregate answer-token versus non-answer-token features so the classifier can distinguish hallucination-specific activity from general generation activity. This is a big reason the result feels mechanistic instead of purely correlational.

8.1. What This Paper Does Not Claim

Good interpretability work becomes more useful when we state the boundaries. This study does not claim that every hallucination in every LLM can be reduced to one universal neuron list. It focuses on FFN neurons, factual QA, and a specific probe-and-perturb pipeline. It also reports non-monotonic behavior in some settings, which is a healthy reminder that large models are still messy dynamical systems.

It also does not replace the rest of the reliability stack. Retrieval grounding, source checks, tool use, output constraints, and task-specific evals still matter. The win here is additive. We now have a credible internal signal to combine with the external safeguards we already use.

9. How This Discovery Clears A Major Hurdle For Reliable AI Systems

The headline is not that hallucinations are solved. They are not. The headline is that we now have a sharper map.

This paper links macroscopic failures to microscopic mechanisms in a way that is sparse, testable, and manipulable. It connects hallucinations to over-compliance, demonstrates causal influence through activation scaling, and traces the relevant neuron signatures back to pretraining. That is exactly the kind of progress reliability engineering needs.

For builders, the practical takeaway is simple. A practical implementation path looks like this. Use an internal risk score to flag suspect generations at token or response level, then escalate selectively. Low-risk outputs flow normally. Medium-risk outputs trigger retrieval verification or source-citation requirements. High-risk outputs get refusal, confidence downgrades, or human review. The paper explicitly points to detection and targeted intervention as practical directions, and that maps cleanly onto production system design. Stop treating AI hallucinations as only a prompting problem. Prompting matters, and retrieval matters, but model-side monitoring and behavior-aware interventions are becoming part of the serious toolkit too.

If you work with LLMs in production, read this paper, then upgrade your evals. Test over-compliance, not just factual accuracy. Measure invalid-premise correction, misleading-context resistance, sycophancy under pushback, and jailbreak robustness. That is where many of the next generation AI hallucinations will be detected early, before they become customer-facing failures.

1) What causes AI hallucinations?

AI hallucinations often come from a model’s drive to stay helpful and fluent even when it lacks solid grounding. Tsinghua AI research links many failures to H-Neurons that amplify over-compliance, meaning the model confidently completes the request instead of pausing or correcting the premise.

2) Why do AI hallucinate even when they sound confident?

Because confidence is a style, not a fact-check. LLMs are trained to predict the next likely token, so fluent answers can appear “certain” even when the model is guessing. When over-compliance kicks in, AI hallucinations become the path of least resistance.

3) What are examples of AI hallucinations in real use?

Common examples of AI hallucinations include fabricated citations, invented product features, wrong historical claims, and made-up links. A subtler example is sycophancy, where the model changes a correct answer to agree with a user’s incorrect pushback.

4) How to prevent AI hallucinations in production apps?

You reduce AI hallucinations by adding grounding and friction at the right moments: retrieval with citations, constrained outputs, verification tools, and refusal or “I don’t know” policies. The safest direction suggested by the H-Neuron idea is using internal signals as a risk flag, then routing high-risk outputs through stricter checks.

5) Can we remove H-Neurons to stop hallucinations?

Not safely. Suppressing the hallucination-linked circuit too aggressively can degrade overall capability, because these behaviors overlap with useful language behaviors. A more practical approach is treating H-Neurons like an internal smoke alarm, detect rising risk early, then apply targeted guardrails.