Introduction

Fine-tuning an LLM feels like doing surgery with oven mitts. You make one clean cut, the patient learns a shiny new skill, then you check the vitals and realize it forgot its own name.

That is the default behavior of supervised fine-tuning in a sequential world. You ship a new capability this week, your users ask for a different capability next week, and your model starts trading old skills for new ones.

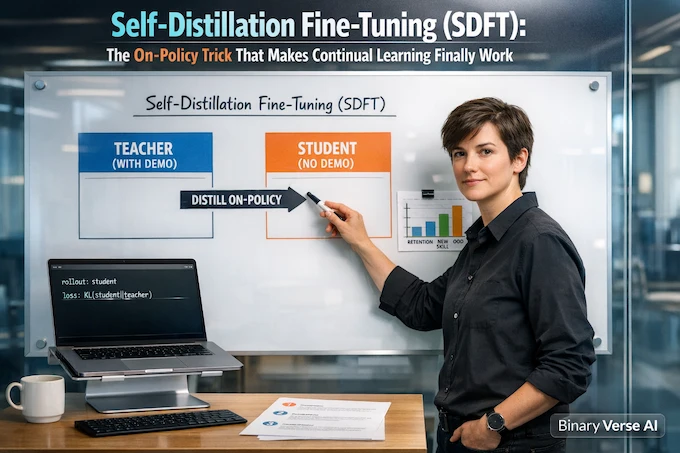

The paper Self-Distillation Enables Continual Learning argues for a simple fix: if you want continual learning, the update has to be on-policy. The model must train on the trajectories it will actually produce after it updates, not only on pristine expert demonstrations. Their method, Self-Distillation Fine-Tuning (SDFT), gets you there without reward engineering by using demonstrations as temporary “privileged context.”

Let’s unpack the idea, why it works, and when it beats your current llm fine tuning setup.

Table of Contents

1. SDFT In One Paragraph (TLDR)

Self-Distillation turns a demo dataset into an on-policy learning signal. You run the same model in two roles on the same prompt. The student sees only the user query. The teacher is the same model, but it also sees a relevant demonstration, so it produces a better next-token distribution while staying anchored to the base behavior. Then you generate from the student, step by step, and train the student to match the teacher on that exact student trajectory. That is on-policy distillation in plain English.

If you do sequential updates, build tool-using agents, or maintain a continual learning ai system that cannot afford catastrophic forgetting, Self-Distillation is worth a serious look.

Self-Distillation SDFT Decision Guide

A quick, mobile-friendly reference for choosing when Self-Distillation Fine-Tuning (SDFT) helps most.

| Decision You’re Making | What Usually Goes Wrong | What SDFT Changes | Quick “Use This When…” |

|---|---|---|---|

| You have demos and want a new skill | Off-policy SFT trains on expert paths only, then errors compound | Self-Distillation corrects the student on its own trajectories | Agents, tool-use, multi-step tasks |

| You need new facts in weights | SFT memorizes answers but does not internalize facts well | Teacher is conditioned on text plus worked answer, distilled on-policy | Private corpora, offline environments |

| You fear regression | Sequential updates overwrite earlier abilities | Self-Distillation stays closer to base behavior | Continual learning machine learning pipelines |

| You are choosing weights vs retrieval | RAG is safe, but not always possible | Weight update with better generalization than SFT | Privacy, latency, or offline needs |

2. The Core Problem: Why Fine-Tuning Causes Catastrophic Forgetting

Catastrophic forgetting is the boring failure mode with the scariest name. You fine-tune on Task B, and Task A degrades. Fine-tune again on Task C, and now A and B wobble.

The paper’s framing is crisp: supervised fine-tuning is off-policy. You train on trajectories produced by an expert demonstrator, but at inference your model produces its own trajectories. Small mistakes move it off the demonstration distribution, and then mistakes compound.

If you are asking “how does llm fine tuning work” once you ship to users, this is the answer. It works until the model’s own behavior becomes the distribution.

3. What’s New: Self-Distillation, Built For Continual Learning

Traditional distillation is “big teacher, small student, compress knowledge.” Self-Distillation flips it. The teacher and student are the same base model, and the extra competence comes from context.

The teacher gets privileged information: a demonstration chosen for that specific prompt. This is not a fixed system prompt you distill once. It is instance-wise conditioning that expresses task intent at fine granularity.

That one design choice is why SDFT behaves like a continual learning method instead of a prompt compression trick.

4. How SDFT Works Step By Step

4.1 Build A Teacher From Demonstrations

The teacher prompt is refreshingly simple: show the question, show an example response, then ask the model to answer with its own response, including its thinking process.

That creates the teacher policy π(·|x, c), the model conditioned on the demonstration c, while the student is the unconditioned model πθ(·|x).

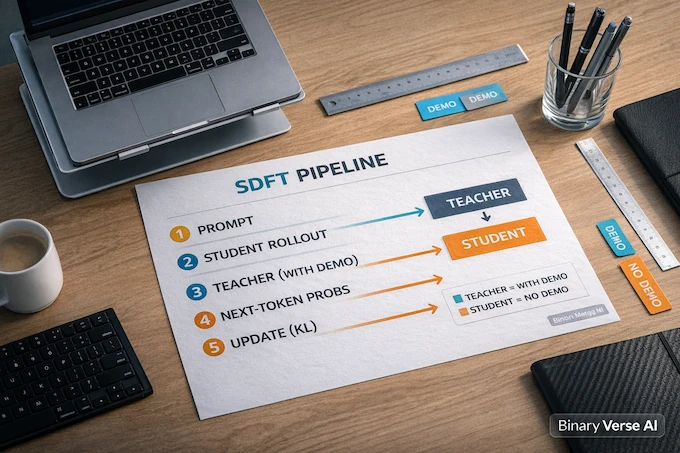

4.2 Generate From The Student, Score With The Teacher

You do not sample answers from the teacher and train offline. You sample from the student. For each prefix the student created, you ask the teacher what it would do next. Training minimizes the reverse KL from student to teacher along the student’s own sequence, yielding on-policy updates.

4.3 Why The Teacher Does Not Bulldoze The Model

On ToolAlpaca, the base model solves only 42% of examples, while the demo-conditioned teacher reaches 100% success.

At the same time, the teacher stays closer to the base policy than an SFT model does. They measure KL divergence to the base policy and report 1.26 nats for the SFT model versus 0.68 nats for the teacher.

That closeness is the safety rail. It is why Self-Distillation can learn without trashing prior capabilities.

5. The Key Insight: On-Policy Beats Off-Policy When Mistakes Compound

Off-policy imitation learning teaches the model to behave well in a world where it never makes mistakes. Real models do not live in that world.

On-policy learning trains under the model’s own state distribution, which avoids the compounding-error failure mode of off-policy imitation.

In LLM terms, Self-Distillation is “practice your own messy attempts, then get corrected by a wiser, demonstration-aware version of you.”

6. Results That Matter: The Wins Are Not Subtle

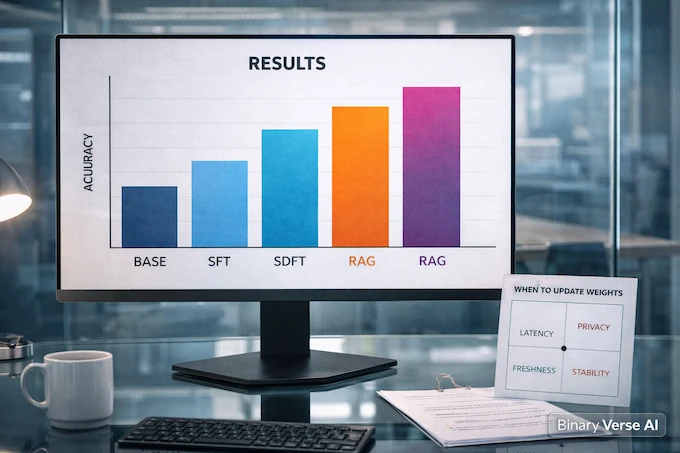

The paper evaluates two settings that map cleanly to production: skill learning and knowledge acquisition.

6.1 Skill Learning And Sequential Updates

They test Science Q&A, Tool Use, and Medical reasoning.

They also run a sequential learning experiment across three distinct skills, where a single model acquires each skill in turn while preserving performance on previously learned skills and on unrelated general benchmarks.

6.2 Knowledge Acquisition: Updating Weights With New Facts

They build a corpus of Wikipedia articles about natural disasters in 2025, after the training knowledge cutoff, totaling about 200K tokens, then generate QA pairs for training.

Self-Distillation Accuracy Comparison

Strict, lenient, and OOD accuracy outcomes for Self-Distillation (SDFT) versus baselines.

| Method | Strict Accuracy | Lenient Accuracy | OOD Accuracy |

|---|---|---|---|

| Base Model | |||

| SFT | |||

| SDFT (Ours) | |||

| Oracle RAG |

SFT improves a lot, but Self-Distillation improves more, and it nearly closes the out-of-distribution gap to an oracle RAG system.

Ablations add a practical note: conditioning the teacher on full text plus answer yields much better strict accuracy than weaker contexts.

7. Reasoning Without Reasoning Traces, And Why That Matters

Reasoning post-training often runs into a data problem. Many datasets contain only final answers. Naively applying SFT on answer-only data can collapse a reasoning-capable model’s long chain-of-thought behavior.

They test this with Olmo-3-7B-Think on a medical dataset with no explicit reasoning traces. Standard SFT drops accuracy from 31.2% to 23.5% and shortens responses from 4612 tokens to 3273. With Self-Distillation, accuracy rises to 43.7% and response length stays much closer to the original, around 4180 tokens.

If you are doing llm fine-tuning on imperfect datasets, this is a strong signal that you can adapt without training the model to “think less.”

8. SDFT Vs SFT Vs RL, A Working Mental Model

SFT is easy and fast, and it is off-policy. RL is on-policy and powerful, and it needs rewards.

Self-Distillation sits in the demo-only middle. It gives you an on-policy update without writing a reward function, by comparing student behavior to a demonstration-aware teacher.

So it does not replace RL. It complements it. If you have both demos and rewards, SDFT is a strong initializer because your policy is already trained under its own trajectories.

9. SDFT Vs RAG: The “LLM Fine Tuning Vs RAG” Decision

Treat “llm fine tuning vs rag” as a constraint question, not a purity test.

Use RAG for volatile facts and strong grounding. Update weights when latency matters, when you need offline behavior, when privacy rules out retrieval, or when the target is a durable skill.

Self-Distillation is a weight update that tends to generalize better than plain SFT in sequential settings. If you are collecting llm fine tuning techniques, this one directly targets the deployment distribution mismatch. Also, yes, I am intentionally using both spellings, llm fine tuning and llm fine-tuning, because the internet insists on it.

10. Practical Considerations: Compute, Scaling, Failure Modes

On-policy learning adds generation to your training loop. In their setup, they use a single on-policy rollout per training example with an analytic per-token KL gradient estimator for stability.

A few grounded takeaways:

- Scale helps because the teacher signal depends on in-context learning quality.

- Teacher stability matters. A frozen base teacher underperforms, and using the current student as teacher can be unstable. An EMA teacher is a good compromise.

- Context quality is not optional for knowledge acquisition.

If you want to implement SDFT without turning your training pipeline into a science project, think in terms of three loops:

- Rollout loop. For each training prompt, sample exactly one student response (greedy for stability or temperature for pass@k style work, the paper reports both settings in evaluation).

- Teacher scoring loop. Replay that student response token by token, and query the demo-conditioned teacher for next-token probabilities on the same prefixes.

- Update loop. Backprop the KL-based loss that pulls the student distribution toward the teacher along the student trajectory.

The operational trick is to log everything the first time: student outputs, teacher probabilities, KL curves, and a small “prior skills” benchmark you never touch. When training goes sideways, those artifacts tell you whether you have a bad teacher context, a decoding issue, or a real capacity limit. You can fix the first two in an afternoon. The last one is when you upgrade the model or rethink the task.

11. Common Objections, Answered Quickly

11.1 “Isn’t This Cheating With Privileged Info?”

It is training-time scaffolding. The student does not need the demonstration at inference time. The point of Self-Distillation is to compress that scaffolding into weights.

11.2 “Is This Just Distillation Rebranded?”

The distillation part is familiar. The on-policy part is the difference. Here the student is trained under its own trajectory distribution, and the context is instance-specific.

11.3 “Does This Scale?”

Their scaling experiment suggests gains grow with model size, which matches the intuition that stronger in-context learning yields a better teacher.

12. Takeaways, And A Concrete Next Step

If your sequential fine-tuning keeps causing catastrophic forgetting, you are probably fighting an off-policy problem, not a learning-rate problem.

If you have demonstrations, Self-Distillation Fine-Tuning (SDFT) is a clean on-policy update without reward engineering.

If you are updating a reasoning model on answer-only data, Self-Distillation can preserve reasoning depth instead of training it away.

Now the CTA: try it on something small but real. Pick two skills your model needs in production, run a baseline SFT, then do a sequential update and measure regression. Repeat with Self-Distillation. Keep the evaluation suite fixed and boring.

Then publish your results. The authors even share code and datasets, which means the only real blocker left is curiosity.

Bonus: if you are testing self-distillation fine-tuning in your stack, tell people what broke. That is how continual learning stops being a slogan and becomes a tool.

1) What is self distillation?

Self-distillation is when a model learns from targets produced by itself, or a stronger version of itself, instead of a separate teacher. In SDFT, the “teacher” is the same model with a demonstration in the prompt, and the “student” is the same model without the demo.

2) Does knowledge distillation really work?

Yes, when the teacher provides useful signal beyond hard labels. It can improve stability and generalization. In SDFT, the win is not compression, it’s behavior-aligned learning: the training signal stays on-policy, so the model learns to recover from its own errors instead of only imitating perfect trajectories.

3) What are two types of distillation?

A practical split:

Teacher–student distillation (classic): a stronger or larger teacher trains a smaller student.

Self-distillation: the model teaches itself, often using an EMA teacher, multi-stage training, or a “privileged context” teacher like SDFT.

4) Is AI distillation legal?

It depends on licensing and data rights. If you distill outputs from a third-party model or API, you must check the model’s license, the provider’s terms, dataset provenance, and whether the outputs can be reused to train a redistributable model. When in doubt, treat it like compliance work, not a hack.

5) How do I add new knowledge to an LLM?

Two main routes:

RAG / retrieval: add knowledge to an index and retrieve it at inference. Fast, safer, and doesn’t change weights.

Fine-tuning (including SDFT): update weights so skills and knowledge stick internally, but you must manage catastrophic forgetting. SDFT is designed to make that continual learning loop behave.