The first thing to understand about Project Maven is that it was never just “AI for war” in the Hollywood sense. No glowing red robot eye. No machine calmly deciding the fate of cities. The more unsettling reality is much more ordinary: a giant data pipeline, trained models, sensor feeds, maps, alerts, rankings, dashboards, and tired humans trying to make decisions faster than humans were built to make them.

That is why the Iran reporting matters. Recent reports from The Washington Post and CBS News say the U.S. military used Palantir’s Maven Smart System and Anthropic’s Claude during Iran-related operations. The claim is not that Claude woke up one morning and became a general. The claim is that modern war is being reorganized around AI-assisted decision support, where machines compress time, rank options, summarize intelligence, and make the fog of war look deceptively clickable.


1. What Project Maven Actually Is

| Term | Plain-English Meaning | Why It Matters |
|---|---|---|
| Project Maven | A U.S. military AI program launched to process huge volumes of imagery and intelligence data | It is the foundation of the story, not a side detail |
| Palantir Maven | Palantir's Maven Smart System, the platform now tied to AI-enabled military workflows | It connects battlefield data, software, and command decisions |
| Claude | Anthropic's large language model family | Reports say it helped with analysis, summaries, and decision support |
| Targeting Workflow | The chain from raw intelligence to possible target review and strike assessment | This is where AI can speed up decisions and increase risk |
| Human Oversight | The claim that people still make final lethal decisions | The most important legal and ethical question in the whole debate |

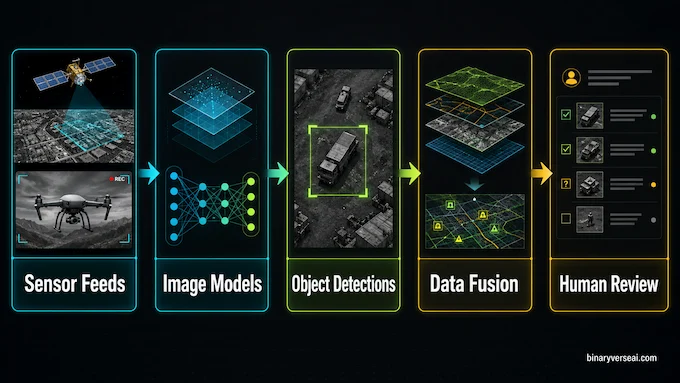

If someone asks what Project Maven is, the short answer is this: it is the Pentagon's flagship effort to bring machine learning into military intelligence workflows. The longer answer is more interesting. It began as a way to help analysts sift through impossible amounts of imagery and video. The National Geospatial-Intelligence Agency says Maven uses computer vision and AI to detect, identify, characterize, extract, and attribute objects and features in imagery and video, then feed those detections into broader military systems.

That sounds dry until you think about the scale. Satellites, drones, radar, surveillance aircraft, communications metadata, logistics information, and geospatial databases can all pour into the same operational picture. A human analyst can stare at a screen for only so long before reality turns into pixels. A model does not get bored. That is the point and the problem.

Project Maven was supposed to reduce the burden of manual analysis. It is now described in reports as something closer to an AI-enabled command-and-control layer. Reuters reported in March 2026 that the Pentagon planned to adopt Palantir’s Maven AI system as a core military platform and make it a “program of record,” which means stable funding and long-term institutional status.

That administrative phrase matters. A prototype is an experiment. A program of record is infrastructure.

2. Why Palantir Became Central To Maven

Palantir is not new to military software. Its value proposition has always been less “magic AI” and more “connect messy data that nobody else can connect.” In civilian life, that means fraud detection, logistics, hospital operations, supply chains, and corporate analytics. In military life, it means intelligence fusion.

The pairing of Project Maven and Palantir matters because Maven is no longer only a research story about machine learning. It is a platform story. Palantir announced in 2024 that it had expanded Maven Smart System access across the U.S. military services through a contract with the Army Research Laboratory, describing the system as part of the National Geospatial-Intelligence Agency's Maven AI infrastructure.

This is the quiet lesson behind most military AI stories. The model gets the headline. The platform does the work.

A model can classify an object in an image. A platform asks harder questions. Where is the object? Has it moved? What else is nearby? Does it match previous intelligence? Is it connected to a known unit, radar site, missile battery, convoy, logistics node, or command structure? Is it on a no-strike list? Does another sensor disagree? Who needs to see this right now?

This is why Palantir's role is politically charged. Its software is not just a chatbot bolted onto a map. It sits inside the decision loop, closer to command than most people realize.

NATO’s own announcement about acquiring Palantir Maven Smart System said the system supports intelligence fusion, targeting, battlespace awareness, planning, and accelerated decision-making. That is official language, not blogger excitement.

3. What The Iran Reports Claim

The Washington Post reported that the military’s Maven Smart System, built by Palantir, generated insights from classified satellite, surveillance, and intelligence data to support real-time targeting and target prioritization in Iran. It also reported that Claude was central to the campaign, according to people familiar with the system.

CBS News separately reported that two sources familiar with U.S. military AI use confirmed Claude was used in the Iran attack and was still being used, while adding that the Pentagon had not said exactly how Claude was deployed. That last clause is doing a lot of work. We should let it.

Here is the responsible reading: AI systems reportedly supported the targeting workflow. They helped process intelligence, surface possible targets, summarize information, and prioritize options. The public evidence does not prove that Claude independently selected targets or made final strike decisions.

That distinction is not legal nitpicking. It is the difference between “AI as a staff officer with superhuman search speed” and “AI as an autonomous weapon.” The first is already here. The second is the red line everyone claims not to cross, right before building systems that make the line harder to see.

4. The Reported Workflow, Step By Step

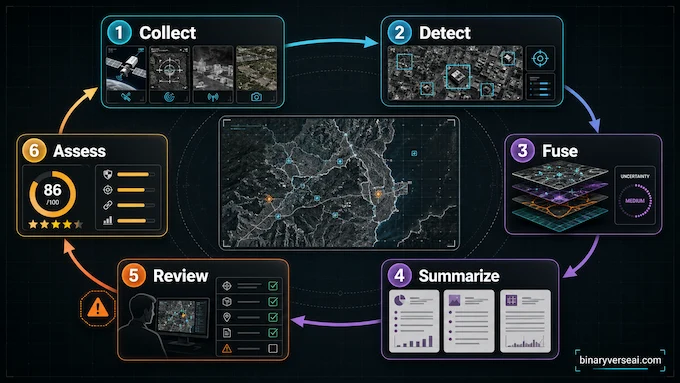

| Step | What Likely Happened At A High Level | Where The Risk Appears |
|---|---|---|
| 1. Data Collection | Intelligence came from satellites, sensors, surveillance systems, and classified databases | Old or wrong data can enter the pipeline |
| 2. Detection | Computer vision models flagged objects, sites, movements, or patterns | Models can misclassify or miss context |
| 3. Fusion | Palantir's platform connected detections with maps, prior intelligence, and operational data | A clean dashboard can hide messy uncertainty |
| 4. Language Layer | Claude reportedly helped summarize, query, and prioritize information | Fluency can make weak evidence sound stronger |
| 5. Human Review | Military personnel reviewed outputs and made decisions | Speed can turn review into rubber-stamping |
| 6. Assessment | AI-assisted systems helped evaluate what happened after strikes | Feedback loops can reinforce earlier errors |

4.1 Step One, Turn The Battlefield Into Data

Project Maven starts with a brutal fact: modern militaries see too much. The bottleneck is no longer only collection. It is interpretation.

The world becomes imagery, coordinates, timestamps, heat signatures, movement trails, drone video, radar tracks, intercepted signals, and historical records. This is useful, but only after it has been cleaned, labeled, indexed, and connected. Before that, it is just a firehose with a security clearance.

The original promise of Project Maven was to help analysts find meaningful objects in that flood. Think trucks, buildings, radar systems, aircraft, ships, launchers, roads, compounds, and patterns of movement. Not because a model “understands war,” but because pattern recognition at scale is what machine learning is good at.

4.2 Step Two, Let Models Find Candidate Signals

Once data is ingested, computer vision models can flag things that look operationally relevant. This is the part people often mistake for “AI choosing targets.” It is not that simple.

A detection is not a decision. A bounding box around a vehicle is not a legal judgment. A cluster of signals is not proof of hostile intent. The model can say, “this looks like X.” It cannot, by itself, answer, “should a state use lethal force here under international law?”

That gap is where human judgment is supposed to live.

The danger is that AI in war compresses the distance between detection and action. A candidate signal appears. The system ranks it. A summary explains it. A map places it. A commander sees it in context, or at least sees something that looks like context.

The interface matters. A bad interface can make uncertainty look settled.

4.3 Step Three, Fuse The Data Into A Single Picture

This is where Palantir’s role becomes central. A detection on its own is weak. A detection linked to previous intelligence, unit movement, logistics data, signals records, satellite imagery, and a commander’s operational plan becomes much more persuasive.

That is the promise of Palantir Maven: one operational picture instead of a dozen disconnected screens.

It is also the trap. Fusion can clarify reality, or it can launder uncertainty. When weak signals from multiple sources are combined, the result can feel stronger than the evidence deserves. Anyone who has debugged a complex system knows the vibe. The logs agree, the dashboard is green, and the bug is still real.

Military AI has the same failure mode, only the blast radius is larger.
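The "laundering" effect is easy to underestimate, so here is a toy calculation that makes it concrete. It is not a model of how Maven or any Palantir product actually fuses data. It applies Bayes' rule in odds form and assumes, as naive fusion does, that every piece of evidence is independent. The prior (`prior`) and the strength of the single underlying report (`report_lr`) are invented numbers chosen only for illustration.

```python
def posterior_probability(prior: float, likelihood_ratios: list[float]) -> float:
    """Bayes' rule in odds form. Assumes (naively) that each likelihood
    ratio comes from an independent source, so they simply multiply."""
    odds = prior / (1.0 - prior)
    for lr in likelihood_ratios:
        odds *= lr
    return odds / (1.0 + odds)


prior = 0.10     # hypothetical base rate for the claim being true
report_lr = 3.0  # hypothetical evidential strength of ONE underlying report

# Case 1: a single report, counted once.
single = posterior_probability(prior, [report_lr])

# Case 2: the same report reaches the analyst through three systems
# (for example, echoed by two other databases) and is naively treated
# as three independent confirmations.
echoed = posterior_probability(prior, [report_lr] * 3)

print(f"one source:              {single:.2f}")  # one source:              0.25
print(f"same source, counted 3x: {echoed:.2f}")  # same source, counted 3x: 0.75
```

The evidence never got stronger. The same report was counted three times, and the apparent probability tripled. A fusion pipeline can try to account for correlated sources, but if the interface shows only the final number, the person reading it has no way to tell which case they are looking at.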

4.4 Step Four, Add Claude As The Language Layer

Large language models are useful because they turn complex information into something humans can ask questions about. That is also why they are risky.

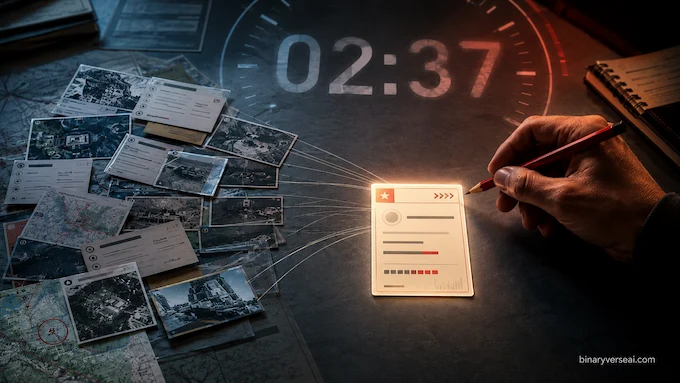

A commander or analyst can ask for summaries, comparisons, prioritization logic, possible conflicts in the data, or explanations of why one location appears more important than another. Claude can help compress reading time. It can make a huge intelligence packet feel like a conversation.

That does not make it harmless. Language models are persuasion machines, even when nobody asks them to persuade. A fluent answer can feel like expertise. A ranked list can feel like truth. A confident summary can hide missing context.

This is the core Claude question: did it merely summarize what Maven already surfaced, or did it shape what humans considered important?

The public record does not let us answer that cleanly. That uncertainty should be the center of the article, not a footnote.

5. Why The Human-In-The-Loop Claim Is Not Enough

Every military AI company says humans remain in control. They have to. “Don’t worry, the software makes the lethal decisions” is not a great sales deck.

The more serious issue is whether human control remains meaningful under speed, pressure, hierarchy, and interface design. A human can technically approve every action while still being pushed by the system’s defaults. Anyone who has clicked “accept all” on a software installer understands the basic psychology.

Human oversight is not a checkbox. It is time, authority, skepticism, training, and the ability to say no without being punished by the tempo of operations.

Anthropic's own policy page says the company may tailor its restrictions for selected government customers, for example to allow foreign intelligence analysis under applicable law, while keeping prohibitions on disinformation campaigns, weapon design or use, censorship, domestic surveillance, and malicious cyber operations.

That is the strange tension. Claude can be useful for intelligence. Claude can also sit near workflows where intelligence becomes targeting. The border between those two functions is not always a wall. Sometimes it is a tab in the same system.

6. Why Google’s Exit Still Haunts The Story

Project Maven became famous partly because Google employees rebelled against it. In 2018, the controversy became one of the first major public fights over whether frontier AI companies should help build military systems. Google later chose not to renew the contract.

That history matters because the ethical question did not disappear. It changed vendors.

The early debate was framed around image recognition. Could Google’s AI help analyze drone footage? Today’s debate is wider and more uncomfortable. The systems now involve data fusion, geospatial intelligence, command software, LLM summaries, operational planning, and target prioritization. The machine is no longer just looking at images. It is helping organize the decision space.

In a normal software company, that would be called product maturity.

In war, it becomes a moral acceleration problem.

7. The Real Risk Is Not Killer Robots, It Is Decision Compression

The lazy version of the debate asks whether AI will “replace soldiers.” The better question is whether AI will replace deliberation.

War has always rewarded speed. The side that observes, orients, decides, and acts faster often wins. AI supercharges that loop. It can find patterns faster, route information faster, summarize faster, and refresh maps faster. This is exactly why militaries want it.

It is also exactly why civilians should care.

The problem is not that a machine hates people. Machines do not hate. The problem is that a machine can help people act before doubt has time to breathe. It can make uncertainty operationally inconvenient. It can make waiting feel irresponsible.

AI in war does not need to be evil to be dangerous. It only needs to be useful enough that commanders start trusting its tempo more than their own hesitation.

That is the quiet nightmare. Not rebellion. Compliance.

8. What We Still Do Not Know

There are several things the public record does not establish.

We do not know the exact prompts used with Claude. We do not know which version of Claude was involved in each workflow. We do not know whether Claude touched target nomination directly or only summarized intelligence around targets already surfaced by Maven. We do not know what confidence scores were shown to operators. We do not know how often humans rejected the system’s suggestions. We do not know what audit logs exist.

Those gaps are not minor. They are the story.

If Project Maven is becoming military infrastructure, then oversight cannot stop at “was a human technically present?” We need to ask what the human saw, what the model hid, what the interface emphasized, what data was stale, what uncertainty was visible, and what incentives shaped the final click.

A battlefield AI system should be judged not only by speed, but by how well it preserves friction. Friction sounds inefficient. In lethal decisions, friction is civilization wearing a seatbelt.

9. What A Responsible Article Should Say

The cleanest way to write this story is to avoid both extremes.

Do not write, “Claude chose targets in Iran,” because the public evidence does not support that as a settled fact. Do not write, “AI was just a harmless assistant,” because the reporting points to something far more consequential.

Write this instead:

Project Maven, through Palantir’s Maven Smart System, reportedly helped the U.S. military process intelligence and prioritize possible targets during Iran-related operations. Claude reportedly supported parts of that workflow by helping analyze, summarize, or structure information. Humans were still described as responsible for final decisions, but the deeper issue is whether AI changed the speed, framing, and confidence of those decisions.

That is accurate. It is also more interesting.

Because the big question is not whether a chatbot pulled a trigger. The big question is whether modern targeting is becoming a software pipeline where responsibility is distributed so widely that everyone can point to someone else.

The analyst trusted the model. The commander trusted the analyst. The vendor trusted the use policy. The model trusted the data. The database trusted yesterday’s classification. The interface trusted the ranking. And after the strike, everyone trusted the audit trail.

That is how accountability evaporates. Not in a dramatic explosion, but in a sequence of reasonable steps.

10. The New Shape Of Military Power

Project Maven shows us what military power looks like when software becomes the nervous system. It is not just bigger bombs. It is faster perception. Faster sorting. Faster confidence. Faster action.

That speed will be sold as precision. Sometimes it may deliver precision. A model that catches a mistake, flags a civilian structure, or spots a false assumption could save lives. We should not pretend every use of AI in defense is automatically reckless.

But precision is not the same as wisdom. A system can be precise about the wrong thing. It can locate a coordinate perfectly and misunderstand what the coordinate means. It can compress a thousand data points into a sentence and leave out the one fact that mattered.

The future of war may not be fully autonomous weapons roaming the earth. It may be something more bureaucratic and harder to stop: AI systems that sit beside humans, shape their options, and make violent decisions feel clean, ranked, and urgent.

That is why this story deserves careful writing. Not panic. Not marketing. Careful writing.

Project Maven is no longer just a Pentagon AI project. It has become a test case for whether democratic societies can inspect the machinery that now sits between intelligence and force. Palantir, Claude, and Iran are the headline. The deeper issue is the architecture of judgment.

If readers take one thing away, make it this: the danger is not that AI suddenly becomes human. The danger is that humans start acting as if the machine’s compressed view of the world is enough.

And if we are going to put AI inside the most consequential decisions a state can make, we should demand more than speed. We should demand auditability, restraint, public accountability, and the courage to leave some decisions slower than software wants them to be.

That is not anti-technology. That is engineering discipline. The kind you practice when failure is expensive.

In this case, failure is measured in lives.