Introduction

ResNet’s big trick is almost insultingly simple. Add an identity shortcut, stop gradients from dying, train deeper models. The shortcut is a safety rail, and in deep residual learning for image recognition it turned “very deep” from a heroic gamble into a default option.

That safety rail also locks you into a specific kind of motion. If every layer is “state plus increment,” you get dynamics that tend to accumulate, not revise. The DDL paper calls this a strictly additive inductive bias, tied to the identity shortcut.

Deep delta learning keeps the stability, then upgrades the shortcut into something you can steer. The shortcut becomes a learnable geometric operator, a rank-1 perturbation of identity, driven by a direction vector k(X) and a scalar gate β(X). The fun part is that β can smoothly move the shortcut between identity, projection, and reflection.

Let’s unpack it, with enough math to be precise and enough intuition to be useful.

Table of Contents

1. Deep Delta Learning In 60 Seconds

In classic ResNet, the residual connection is fixed and additive. In deep delta learning, the shortcut itself becomes learnable, but only in a very constrained way: one direction, one gate, rank-1 geometry.

If you only remember one sentence, make it this: deep delta learning turns the shortcut from “always pass through” into “pass through, erase, or flip a chosen component, then write a new one.”

- Question: What is the shortcut?

- ResNet Shortcut: Identity (or fixed projection)

- deep delta learning Shortcut: Identity plus a rank-1 Delta Operator

- Question: What does β do?

- ResNet Shortcut: Often only scales the residual branch

- deep delta learning Shortcut: Controls both erasing old content and writing new content

- Question: Can it model “opposing” dynamics?

- ResNet Shortcut: Harder

- deep delta learning Shortcut: Yes, reflection can introduce a negative eigenvalue

- Question: Why does it stay stable?

- ResNet Shortcut: Identity path is well behaved

- deep delta learning Shortcut: β can go to 0, giving an effective skip

2. Quick Refresher: What A ResNet Residual Block Is

A residual block wraps two things:

- A transform branch F(x), the workhorse layers.

- A skip connection, a bypass path, usually identity.

The output is x + F(x) (ignoring details like pre-activation). In resnet, that simple addition is the whole stability hack. That’s the whole “skip connection vs residual block” story in one line: the skip connection is the bypass, the residual block is the bypass plus the transform.

Why does this matter for deep delta learning? Because the DDL change is not “make F(x) bigger.” It changes what the bypass is allowed to do.

The DDL authors frame ResNet’s update as a forward Euler step, with a fixed identity Jacobian on the shortcut. That’s a clean mental model, and it also highlights the limitation: the shortcut can only add, never revise.

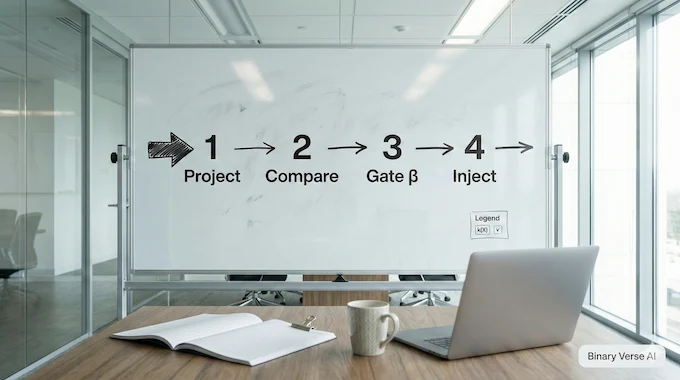

3. The Delta Residual Block: Project, Compare, Gate, Inject

The ddl residual block is described as four ordered operations:

- Project: compute kᵀX

- Compare: vᵀ minus that projection

- Gate: scale by β

- Inject: write back along k

This reads like a tiny editing loop:

- “What do I currently store along direction k?”

- “What value do I want there instead?”

- “How hard should I apply the correction?”

- “Write the correction back into the state.”

Nothing mystical. It’s a controlled, one-direction rewrite inside what used to be a passive shortcut.

4. What’s Actually New: The Shortcut Becomes A Learnable Rank-1 Operator

Here is the core math move.

The shortcut applies a Delta Operator A(X) = I − β(X)k(X)k(X)ᵀ, then the block injects βkvᵀ. Under unit-norm k, the update can be rewritten as:

X_{l+1} = X_l + β k (vᵀ − kᵀX_l).

That single line explains the whole architecture:

- kᵀX is the “current content” along k (erase term).

- vᵀ is the “desired content” (write term).

- β is the step size that links erasing and writing.

So deep delta learning does not generalize ResNet by adding more parameters everywhere. It generalizes the shortcut’s geometry in a surgical way.

A surprisingly helpful sanity check is the “scalar value” limit. If you set the value dimension dᵥ to 1, the matrix state collapses to a normal feature vector, and the update becomes x_{l+1} = x_l + β (v − kᵀx_l) k. That looks like a familiar gated correction, except the coefficient depends on the mismatch between the proposed write value and what the state already contains along k. In other words, DDL contains a standard “vector net” as a special case, and the matrix view simply repeats the same correction across multiple value columns, with shared geometry and shared gating.

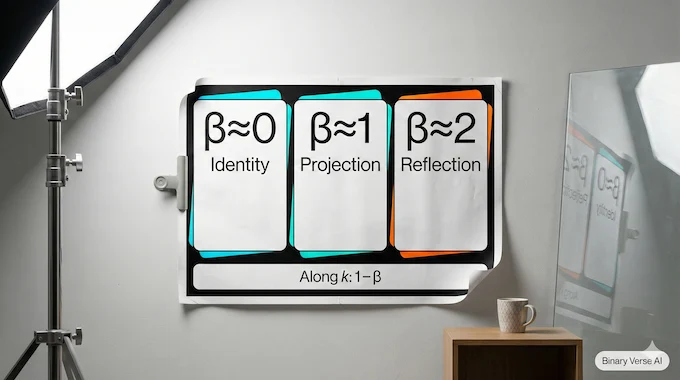

5. β Explained: Identity, Projection, Reflection

The spectrum story is unusually crisp. For A = I − βkkᵀ, everything orthogonal to k has eigenvalue 1, and k itself has eigenvalue (1 − β).

So β is a geometry knob.

- β Regime: β ≈ 0

- Eigenvalue Along k: 1

- Shortcut Action: Identity

- Quick Intuition: Layer becomes a no-op, great for depth

- β Regime: β ≈ 1

- Eigenvalue Along k: 0

- Shortcut Action: Projection onto k⊥

- Quick Intuition: Delete the k component, then rewrite it

- β Regime: β ≈ 2

- Eigenvalue Along k: −1

- Shortcut Action: Householder reflection

- Quick Intuition: Flip sign along k, preserve norms

A nice extra detail is what happens to volume. The determinant along the spatial features is (1 − β), and because the shortcut broadcasts across dᵥ columns, the determinant on the full lifted state becomes (1 − β)^{dᵥ}. That means β is not just “some gate,” it literally controls whether the shortcut preserves orientation or flips it, and for odd dᵥ a reflection changes the global orientation of the lifted space.

That reflection regime is the headline. Negative eigenvalues let a model represent “oppositional” transitions, not just accumulation. The paper motivates this directly, pointing to the need for more flexible transitions that include negative eigenvalues.

They also keep β in [0, 2] using a scaled sigmoid, precisely to preserve these interpretations.

6. DDL As “Erase + Write”: The Delta Rule Over Depth

Here’s why the name sticks.

The delta rule in deep learning is the structure “new minus old,” scaled by a step size, used in fast associative memories and linear attention. The DDL paper says their expansion “exactly recovers the Delta Rule update,” with explicit Write and Erase terms.

When you look at X_{l+1} = X_l + β k (vᵀ − kᵀX_l), it’s obvious. It’s a one-direction delta update, repeated over depth, and the same β synchronizes the destructive and constructive parts of the move.

Quick disambiguation: reinforcement learning also uses “delta,” usually as a TD error. That’s not what we mean here. In deep delta learning, “delta” is the correction signal inside the block, not a learning signal from rewards.

The practical payoff is simple. deep delta learning gives residual stacks a built-in way to revise state, not just pile onto it. If you’ve ever stared at a deep model and felt it was carrying stale features like emotional baggage, this is the kind of mechanism that can clean house.

7. Relation To DeltaNet And Fast-Weight Memories: Same Recurrence, Different Axis

If you’ve read DeltaNet, you’ve seen a memory matrix updated by a rank-1 rule over time. The DDL paper puts the DeltaNet recurrence next to the DDL depth update and calls DDL the depth-wise isomorphism.

The correspondence is clean:

- DeltaNet’s memory state maps to DDL’s activation state.

- Both use a rank-1 Householder-style operator to erase or reflect a subspace component.

- β is interpreted as a layer-wise step size, coupled to both erase and write so the update stays coherent.

This is a big part of why deep delta learning feels timely. It connects two worlds that often talk past each other, residual CNN intuition and fast-memory sequence modeling.

8. How DDL Differs From LSTM, Highway Networks, And Invertible ResNets

Different gating, different intent.

8.1 LSTM

LSTM gates are element-wise and tied to a fixed memory cell structure. DDL gates a geometric operator on the shortcut, and it learns an erase direction k per input. It’s closer to “state geometry control” than “cell state bookkeeping.”

8.2 Highway Networks

Highway Networks gate between identity and transform paths. DDL’s gate changes the shortcut transform itself, letting it become projection or reflection, not just “more or less identity.”

8.3 Invertible ResNets

i-ResNets aim for invertibility through constraints. DDL does not force global invertibility. It can be invertible when 1 − β ≠ 0, orthogonal at β = 2, and intentionally projective near β = 1 for controlled forgetting.

That last clause matters. Sometimes forgetting is the point.

9. Practical Costs: Activation Memory, Value Dimension dᵥ, And Where Compute Goes

The shortcut math is cheap. It’s rank-1, mostly projections and outer products, so it scales like O(d·dᵥ), not O(d²·dᵥ).

The cost center is the state shape and the side branches. DDL often represents activations as X ∈ R^{d×dᵥ}. If dᵥ is large, activation memory balloons. If the k, v, β generators are heavy, the block stops being a shortcut upgrade and becomes a whole new backbone.

There’s also a subtle “mixing” story that shows up even in toy cases. The paper analyzes a diagonal input matrix and shows that applying A introduces off-diagonal terms proportional to −β λ_j k_i k_j. Translation: even if your incoming features are perfectly decoupled, a non-zero β induces controlled coupling, based on how coherent the k direction is across feature modes. That’s another way to see why this is not just a gate, it’s a geometric intervention that can couple features in a controlled way, without paying for a full dense mixing matrix.

The paper’s parameterization choices signal the intended engineering posture: normalize k for stability, and generate β with a lightweight bounded head in [0, 2]. Keep that spirit and deep delta learning stays a scalpel, not a sledgehammer.

10. Can It Be Faster Or Parallelizable: What’s Plausible

Formally, you can write the layer recurrence as X_{l+1} = A_l X_l + B_l, where A_l is identity minus a rank-1 term. That shape is the kind of thing associative scan methods sometimes exploit.

The catch is dependence. If k(X_l) and β(X_l) are computed from the current state, you still have a sequential chain. So the first wins are likely boring and valuable: fused kernels for projection and outer product, good memory layout for d×dᵥ, and careful mixed-precision handling.

If you want a litmus test, look for two numbers in an implementation report: peak activation memory and achieved bandwidth. deep delta learning lives or dies on memory movement long before it runs out of FLOPs.

A true “parallel depth scan” would be a separate research result, and it would probably require constraints on how k and β are produced.

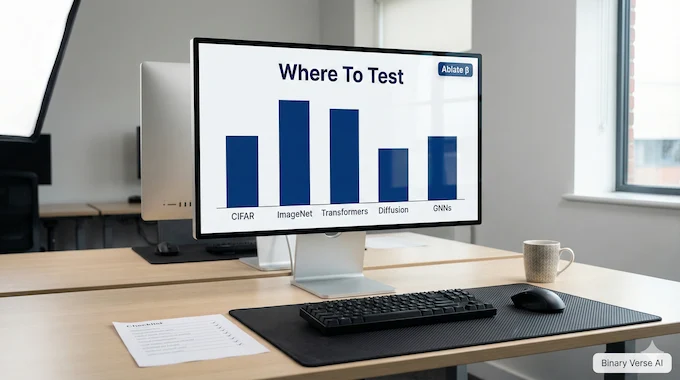

11. Where The Experiments Should Land: A Benchmark Checklist

Until strong results land, treat deep delta learning like an interesting mechanism that needs the usual validation. Here’s the checklist that answers most real objections:

- Swap into ResNet backbones on CIFAR and ImageNet. Match compute, match training recipe.

- Try Transformer residual streams, watch stability and convergence.

- Try diffusion denoisers, check for over-erasing when β trends toward 1.

- Try deep GNNs, measure over-smoothing and signal decay.

Then the ablations that isolate the mechanism:

- Fix β to constants (0, 1, 2), and report the gap.

- Restrict β ∈ [0, 1] to remove reflection, compare.

- Vary dᵥ and report accuracy per parameter and per activation memory.

- Log β distributions across depth and across inputs.

If deep delta learning is real, you’ll see it in these plots, not in a single headline number.

12. Implementation Notes And Common Pitfalls

If you implement deep delta learning, the main pitfalls are predictable and avoidable.

12.1 Normalize k(X)

The paper enforces L2 normalization of k (with an epsilon) to satisfy the spectral assumptions. Skip this and β stops meaning what you think it means.

12.2 Bound β And Initialize Near Identity

They parameterize β in [0, 2] with a scaled sigmoid. That’s not decoration, it protects the geometry. Also, start near the identity regime so early training behaves like a stable ResNet. The identity behavior when β → 0 is explicitly highlighted as crucial for depth.

12.3 Instrument The Gate

Log β histograms. If it collapses to 0, you built an expensive skip. If it saturates at 2, you built a reflection factory. The interesting models use the whole range.

Conclusion

ResNet made depth trainable. deep delta learning makes depth editable.

If you’re building models right now, here’s the practical next step: take a backbone you already trust, drop in a ddl residual block, and run the ablations. Then publish the boring plots, the β histograms, the speed numbers, the failure cases.

That’s the fastest way to turn deep delta learning from a neat geometric idea into an engineering primitive.

What is deep delta learning?

Deep delta learning is a ResNet-style architecture that upgrades the shortcut path into a learnable rank-1 geometric operator. Instead of always passing identity through the skip, it can selectively erase and rewrite features using a direction vector k(X) and a scalar gate β(X).

What is the delta rule in a learning algorithm?

The delta rule is an error-correction update that adjusts something by the difference between a target and the current value, scaled by a step size. In deep delta learning, the residual update can be viewed as “erase + write” over depth, where β acts like a learnable step size.

What is the structure of a residual block?

A standard residual block is “input + learned transform,” usually written as x + F(x). Deep delta learning keeps the residual block structure but changes the shortcut, turning it from fixed identity into a learnable rank-1 shortcut transform controlled by k(X) and β(X).

What is the difference between skip connection and residual block?

A skip connection is the shortcut path that bypasses the main transform. A residual block is the full module: shortcut plus transform branch(es) plus the merge operation. Deep delta learning’s key idea is upgrading the skip connection itself into a data-dependent operator.

What is a residual connection and why does it work?

A residual connection provides an easy path for gradients and signals to flow, which makes deep networks trainable and stable. Deep delta learning keeps that stability goal, but adds a controlled way for the shortcut to project or reflect along a learned direction, rather than always behaving like identity.