People ask “Is X biased?” the way they ask whether the weather is “angry.” It’s a vibe question, born from scrolling a timeline that feels curated, loud, and somehow always trying to start an argument.

The frustrating part is that “bias” gets debated like a moral verdict. In systems terms, it’s simpler. The X algorithm is a ranking machine. Ranking machines pick winners. Winners shape what users see. What users see shapes what they believe is normal, urgent, or true.

For years, the best experiments we had were awkward. A major Meta collaboration study during the 2020 US election replaced algorithmic feeds with chronological ones and found no detectable movement in political attitudes, even though content and engagement changed. So when someone asked “Is X biased?”, platforms could shrug and say “the algorithm doesn’t move opinions.”

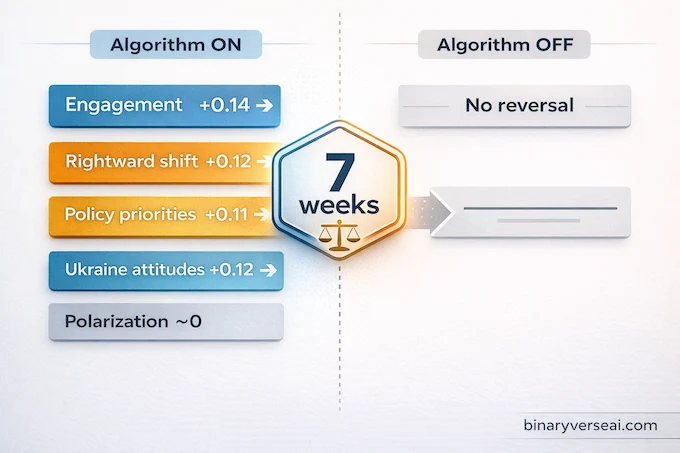

A 2023 field experiment on X broke that stalemate. Researchers randomized active US users into either the algorithmic “For you” feed or the chronological “Following” feed for seven weeks, then measured attitudes and behavior. Switching the algorithm on increased engagement and shifted several political attitudes in a more conservative direction. Switching it off did not produce comparable reversals.

So yes, “Is X biased?” is finally a question we can answer with data, not vibes. If you’re interested in how AI and politics intersect through algorithmic bias, this study is a landmark starting point.


1. The Results, Fast And Concrete

If you only remember one thing, remember this: turning the algorithm on moved opinions, turning it off didn’t move them back.

That asymmetry matters because it changes what “fixing your feed” even means. If you’re asking “Is X biased?” because your timeline feels tilted, you can’t assume a settings toggle resets the system.

| Outcome | Effect | What changed |
|---|---|---|
| Engagement index | +0.14 s.d. | Users interacted more. |
| Conservative policy priorities | +0.11 s.d. | More emphasis on GOP-salient issues like inflation, immigration, crime. |
| Trump investigations “unacceptable” | +0.08 s.d. | Greater belief that investigations are illegitimate. |
| Pro-Kremlin Ukraine attitudes | +0.12 s.d. | Shift toward more Russia-friendly responses. |
| Aggregate policy/news index | +0.12 s.d. | Net rightward shift across related items. |
| Partisanship, affective polarization | ~0 | No detectable change over seven weeks. |

This is not “everyone converts.” It’s more like a subtle turn of the dial on which issues feel top of mind and which narratives feel plausible. If you’re still wondering “Is X biased?”, keep reading, because the mechanism is the part that makes this result more than a headline. Understanding how AI misinformation spreads through chatbots and political persuasion adds useful context here.
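
The effects in the table above are reported in standard-deviation units. As a rough illustration of what a figure like “+0.12 s.d.” means, here is a minimal sketch that scales a difference in group means by the control group’s standard deviation. The function name and the toy scores are assumptions made up for this example; the paper’s actual estimator (indices, covariates, weighting) is likely more involved.

```python
from statistics import mean, stdev

def standardized_effect(treated, control):
    """Difference in group means, scaled by the control group's sample s.d."""
    return (mean(treated) - mean(control)) / stdev(control)

# Toy index scores, invented for illustration (not data from the study).
control = [1, 2, 3, 4, 5]
treated = [2, 3, 4, 5, 6]

effect = standardized_effect(treated, control)
print(f"{effect:+.2f} s.d.")
```

Read this way, an effect of about 0.1 s.d. is a small shift in the average, not a wholesale change in any one person’s views.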

2. How Does X Algorithm Work, In Practice

Here’s the simplest useful model: “Following” is bounded by your follow list, “For you” is bounded by incentives.

The paper leverages a 2023 feature that let users choose between a chronological feed, the “Following” tab, and an algorithmic feed, the “For you” tab. In “For you,” content was added from accounts you don’t follow and reordered relative to time order.

X’s own help docs describe “For you” as recommended posts based on signals like accounts you follow and topics you’re interested in, while “Following” shows posts only from accounts you follow.

So when someone asks “Is X biased?”, they’re usually asking about what happens when the system is free to reach outside their network. For a deeper technical look at how recommendation systems rank content, see this breakdown of how the X algorithm works on GitHub.

One more practical point. Engagement rises when the algorithm is on. That’s the tell. Whatever the internal objective function is, it’s aligned with interaction.
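
To make the two modes concrete, here is a toy model of the difference. It is a sketch under loud assumptions: the `Post` fields, the `predicted_engagement` score, and both function names are hypothetical, and X’s real ranking pipeline is far more complex than a single sort.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Post:
    author: str
    timestamp: int               # larger means newer
    predicted_engagement: float  # hypothetical score, not a real X signal

def following_feed(posts, follows):
    """Chronological mode: only followed accounts, newest first."""
    in_network = [p for p in posts if p.author in follows]
    return sorted(in_network, key=lambda p: p.timestamp, reverse=True)

def for_you_feed(posts, limit):
    """Algorithmic mode (toy): any account, highest predicted engagement first."""
    ranked = sorted(posts, key=lambda p: p.predicted_engagement, reverse=True)
    return ranked[:limit]

posts = [
    Post("friend", 3, 0.10),
    Post("news_outlet", 2, 0.20),
    Post("stranger_activist", 1, 0.90),
]
follows = {"friend", "news_outlet"}

following_authors = [p.author for p in following_feed(posts, follows)]
for_you_authors = [p.author for p in for_you_feed(posts, limit=2)]
print(following_authors)
print(for_you_authors)
```

In this toy setup the out-of-network account never appears in the chronological feed, yet it takes the top slot once ranking is driven by predicted engagement.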

3. The Study Setup You Can Trust

This wasn’t a lab demo. It was a field experiment on real users, done without platform cooperation.

3.1 Who Showed Up

YouGov contacted 13,265 panel members, screened for active X use, and ended with 6,043 pre-treatment surveys and 4,965 post-treatment surveys. The authors report the response rate plainly: about 50.5% of eligible panel members completed both surveys.

It’s not a representative slice of the whole country, and it shouldn’t be. The point is to estimate the effect on active X users, because those are the people the X algorithm can actually influence.

3.2 Randomization And Measurement

Assignment to the algorithmic or chronological feed was randomized via YouGov. A custom Chrome extension captured the first 100 posts users saw under each feed setting, with 784 participants running it pre-treatment and 599 post-treatment. Compliance checks weren’t hand-wavy: observed compliance was about 89% among extension users, and self-reported compliance was about 85% overall.
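
For readers who want the mechanics, here is a minimal sketch of random assignment plus a compliance check. It assumes a simple even split and a dictionary of observed feed settings; the function names and the ten toy users are invented for illustration, and the study’s actual assignment procedure may differ.

```python
import random

def assign_arms(user_ids, seed=0):
    """Shuffle users and split them evenly between the two feed settings."""
    rng = random.Random(seed)  # fixed seed so the example is reproducible
    shuffled = list(user_ids)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {
        uid: "algorithmic" if i < half else "chronological"
        for i, uid in enumerate(shuffled)
    }

def compliance_rate(assigned, observed):
    """Share of observed users whose actual feed matches their assignment."""
    checked = [uid for uid in observed if uid in assigned]
    if not checked:
        raise ValueError("no observed users to check")
    matches = sum(assigned[uid] == observed[uid] for uid in checked)
    return matches / len(checked)

arms = assign_arms(range(10))

# Pretend we observed every user's feed, and user 0 ignored the assignment.
observed = dict(arms)
observed[0] = "chronological" if arms[0] == "algorithmic" else "algorithmic"

rate = compliance_rate(arms, observed)
print(rate)
```

Because assignment is random, differences between the two groups afterward can be attributed to the feed setting rather than to who chose which tab.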

They also scraped followed-account lists for users who shared handles, covering 2,387 participants, which matters for the mechanism we’ll get to.

If your instinct is to ask “Is X biased?” but doubt any single dataset, this design is why the results hold up. Randomization plus behavioral data is hard to dismiss. This connects to broader questions around AI in scientific research and peer review, where methodological rigor increasingly determines credibility.

4. What The Algorithm Promoted, And Why That Matters

The content analysis is where the story becomes legible.

The algorithmic feed promoted political content and, within political content, conservative content more than liberal content. The paper reports conservative posts were 2.9 percentage points more likely to appear in the algorithmic feed, while liberal posts were up 1.0 point, and within political posts conservative content was 2.5 points more likely.

It also demoted traditional news outlets and promoted political activist accounts. Posts from news organizations appeared 15.5 percentage points less often in the algorithmic feed, while posts from political activists appeared 5.9 points more often. Entertainment rose too.

And the posts surfaced in the algorithmic feed were dramatically higher-engagement, with large increases in likes, reposts, and comments.

That mix is exactly why “Is X biased?” feels like a daily question. “More activists, less news” changes the informational texture of the platform. It’s not just more politics. It’s a different kind of politics, optimized to perform. This dynamic mirrors patterns explored in AI writing vs human content loops.
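
The percentage-point gaps quoted above are differences in how often a category of post shows up under each feed setting. A rough way to picture that calculation is sketched below. The labels and the two ten-post toy feeds are made up, and the paper’s reported estimates presumably come from a more careful statistical model than raw shares.

```python
def category_share(feed, category):
    """Fraction of posts in a feed that carry the given category label."""
    return sum(1 for label in feed if label == category) / len(feed)

def exposure_gap(algorithmic, chronological, category):
    """Share in the algorithmic feed minus share in the chronological feed."""
    return category_share(algorithmic, category) - category_share(chronological, category)

# Toy feeds of ten labeled posts each (illustrative only, not study data).
algorithmic = ["news"] * 2 + ["activist"] * 3 + ["entertainment"] * 3 + ["other"] * 2
chronological = ["news"] * 4 + ["activist"] * 2 + ["entertainment"] * 2 + ["other"] * 2

news_gap = exposure_gap(algorithmic, chronological, "news")
activist_gap = exposure_gap(algorithmic, chronological, "activist")
print(f"news: {news_gap:+.2f}, activist: {activist_gap:+.2f}")
```

A gap of −0.20 here means news makes up 20 percentage points less of the toy algorithmic feed, the same kind of quantity as the 15.5-point drop reported for news outlets.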

| Category | Difference in share of posts (algorithmic minus chronological) | Direction |
|---|---|---|
| Any political activist posts | +0.059 | Up |
| Conservative activist posts | +0.028 | Up |
| Any news outlet posts | −0.155 | Down |
| Entertainment posts | +0.091 | Up |

A quick nuance, because it’s easy to misread these numbers as “the algorithm loves conservatives.” Notice that traditional news is demoted for everyone, liberal and conservative news both drop. That suggests the more stable preference is not “left vs right,” it’s “institutional news vs everything else.”

That distinction matters. News outlets tend to compress events into vetted narratives, with editors, corrections, and reputational constraints. Activists tend to compress events into calls to action. Calls to action are usually better at producing replies. Replies are usually better at producing rank. The result can be politically lopsided even if the underlying selection pressure is simply, “show what gets people to react.”

If you’re thinking about the X algorithm as an engineer, this is where the risk lives. Optimizing for engagement is not neutral when the content ecosystem is not neutral. It turns your timeline into a tournament where high-arousal posts have a structural advantage.
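
A small simulation shows how that structural advantage can arise without any ideological rule. Everything here is an assumption chosen for illustration: the pool is split evenly between “news” and “activist” posts, and activist-style posts are given a somewhat higher average reaction score. The ranker only sorts by that score.

```python
import random

def top_k_share(posts, k, category):
    """Share of the k highest-scoring posts that belong to a category."""
    ranked = sorted(posts, key=lambda post: post[1], reverse=True)
    return sum(1 for kind, _ in ranked[:k] if kind == category) / k

rng = random.Random(42)  # fixed seed for reproducibility

# Assumption: activist posts draw more reactions on average (1.5 vs 1.0).
pool = [("news", rng.gauss(1.0, 0.5)) for _ in range(500)]
pool += [("activist", rng.gauss(1.5, 0.5)) for _ in range(500)]

pool_share = 0.5  # activists are exactly half of the candidate pool
ranked_share = top_k_share(pool, k=100, category="activist")
print(f"pool: {pool_share:.0%}, top 100 by engagement: {ranked_share:.0%}")
```

The ranker never looks at the category label, yet the top of the feed ends up dominated by the category that reacts better. That is the sense in which an engagement objective can produce skew on its own.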

If you define “bias” as systematic skew in what a user is exposed to, you can answer “Is X biased?” with a straight face: the distribution is not neutral.

5. Why Switching It Off Doesn’t Undo The Shift

This is the mechanism most people miss, and it explains the one-way door.

Exposure to the algorithmic feed led users to follow more conservative accounts, especially conservative political activist accounts. The paper’s discussion is explicit: users keep following the accounts they engaged with, even after switching off the algorithm, which keeps the content stream similar enough to preserve the attitude shift.

This is where the question “Is X biased?” becomes more about dynamics than intent. The algorithm doesn’t just rank posts. It reshapes your follow graph. The follow graph then persists and keeps feeding you the same worldview, even in a chronological mode.
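
The persistence can be sketched in a few lines. This is a toy model with invented account names, assuming only that a chronological feed shows posts from whoever is currently in the follow set.

```python
def chronological_authors(posts, follows):
    """Authors visible in a chronological feed: followed accounts only."""
    return [author for author in posts if author in follows]

posts = ["friend", "activist_a", "news_outlet", "activist_b"]
follows = {"friend", "news_outlet"}

before = chronological_authors(posts, follows)

# Algorithm on: the user sees out-of-network posts and follows one account
# they engaged with. (Hypothetical behavior, for illustration.)
engaged_with = {"activist_a"}
follows_after = follows | engaged_with

# Algorithm off: the ranker is gone, but the new follow remains.
after = chronological_authors(posts, follows_after)

print(before)
print(after)
```

The second feed is fully chronological and still contains the account the algorithm introduced, which is the one-way door in miniature.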

So “turn it off” is not a reset. It’s more like turning off autocomplete after it already wrote half the sentence. This kind of persistent behavioral shaping is also what makes agentic AI tools and their frameworks so consequential once deployed.

6. Bias Without Conspiracy Theater

It’s tempting to treat “Is X biased?” as a courtroom question. Guilty or innocent. That’s not how complex systems behave.

A useful engineer’s definition is: a system is biased if, holding users constant, it produces systematically different exposure patterns that correlate with an attribute, like ideology. This study shows that switching settings, and nothing else, changes exposure and shifts attitudes in a conservative direction.

That’s outcome bias, even if nobody designed “political bias” as a feature.

Also notice the boundary. Partisanship and affective polarization were null. Short exposure changes what feels salient. It doesn’t rewrite identity overnight.

This is also why political bias in social media is so slippery. A platform can be incentive-driven, not ideological, and still produce ideological skew, because human attention is not neutral and engagement rewards certain styles of speech. Researchers studying LLM safety and selective gradient masking grapple with analogous structural bias problems in AI systems.

7. Twitter Algorithm Bias, X Algorithm Change, And The Long Memory Of Feeds

A lot of people frame today’s feeds as a post-takeover anomaly, like the only story is “X algorithm change.” The paper offers a cleaner timeline.

The experiment ran in mid-2023, more than six months after Elon Musk acquired Twitter, a few months after X published source code, and shortly after Linda Yaccarino became CEO, but about a year before Musk publicly endorsed Trump in July 2024. That matters because it puts the study inside the Musk-era environment, but not inside peak election messaging.

The authors also point to earlier research from 2016, long before Musk, showing Twitter’s algorithm already prioritized right-wing content when algorithmic ranking was introduced. Read that twice. It suggests the phenomenon people label as “Twitter algorithm bias” is not purely a leadership personality issue. It can be a stable property of how political content competes under engagement ranking.

In other words, even if the platform’s goals and policies shift, the underlying dynamics can stay the same. Activist content tends to be high-arousal and high-reply. News tends to be slower, more contextual, and less reactive. If you reward reaction, you get more reaction, and the political center of gravity can drift.

That drift is why “Is X biased?” keeps resurfacing every time the platform tweaks the dial. Users feel the distributional shift long before anyone can explain it. This connects to how AI confessions and honesty in training LLMs reveal systemic tendencies baked into model objectives.

8. How To Reset X Algorithm, The Way That Actually Works

If you typed “how to reset X algorithm,” you’re probably not looking for a lecture. You want your timeline to stop yelling at you.

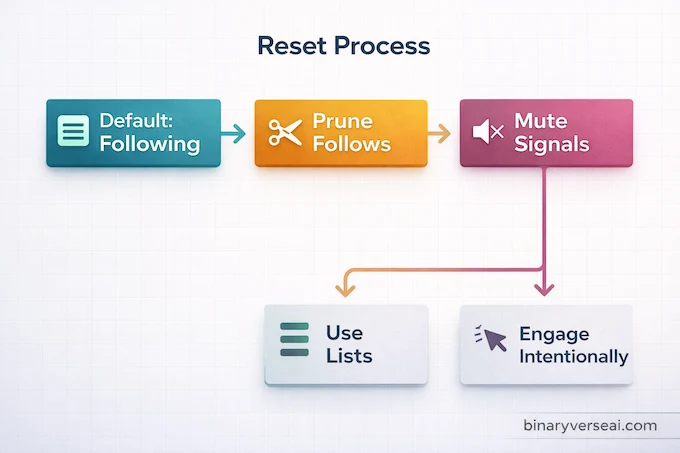

There is no single “factory reset” button for your social graph. What you can reset is the set of signals you feed into the system, and how much the system gets to decide for you.

8.1 Make “Following” Your Home Base

X separates “For you” and “Following,” with “Following” showing posts only from accounts you follow. If you’re asking “Is X biased?” because “For you” feels warped, switching to “Following” reduces out-of-network injections immediately.

8.2 Prune The Follow Graph Like It’s Technical Debt

Because the experiment’s durable effect runs through who users end up following, the real reset is unfollowing and muting. If you don’t like what you see in chronological mode, it’s not the ranker anymore. It’s the graph.

Do a one-week cleanup:

- Unfollow accounts you only follow out of habit.

- Mute accounts you can’t unfollow.

- Build lists for “news,” “experts,” and “friends,” and browse those on purpose.

This is the most honest fix for “Is X biased?” at the user level.
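
If you like to approach the cleanup systematically, the “out of habit” test can be sketched as a simple filter. The input here is entirely hypothetical: a hand-built mapping from account name to days since you last interacted with it. This is not an X export format or API, and the 90-day threshold is an arbitrary choice.

```python
def prune_candidates(days_since_interaction, max_idle_days=90):
    """Accounts you have not interacted with for longer than the threshold."""
    return sorted(
        account
        for account, idle_days in days_since_interaction.items()
        if idle_days > max_idle_days
    )

# Hand-made example data (hypothetical accounts and numbers).
days_since_interaction = {
    "close_friend": 2,
    "local_news": 14,
    "old_meme_account": 400,
    "rage_pundit": 120,
}

candidates = prune_candidates(days_since_interaction)
print(candidates)
```

The output is only a shortlist to review by hand. Some quiet accounts are worth keeping, and some accounts you interact with constantly are exactly the ones to mute.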

8.3 Remove Topic And Keyword Signals

X provides a way to manage interests and topics, including deselecting topics under “Interests” in “Your X data.” You can also mute words, phrases, hashtags, and usernames.

If you want to understand how the X algorithm works, start here. It works on signals. Delete the signals that keep dragging you back into the same rage loop. The same signal-driven logic underlies mechanistic interpretability research into LLM circuits, where small inputs produce outsized behavioral effects.

8.4 Train The Feed With Your Clicks, Not Your Complaints

From posts, X lets you take actions like unfollow or mute using the in-post menu. Use it. Then engage more with the creators you actually want more of.

There’s no magic button, but there is a reliable process. If you’re still asking “Is X biased?” after doing this for a week, you’ll at least be asking from a cleaner baseline. For readers interested in how AI and productivity through agentic workflows are reshaping information environments more broadly, the same feed-training logic applies.

9. The Bottom Line

A good experiment doesn’t end an argument, it upgrades it.

This one upgrades “Is X biased?” from a rhetorical grenade into an evidence-backed question. In 2023, moving users into X’s algorithmic feed increased engagement and shifted several attitudes toward more conservative positions, while switching out did not reverse those shifts. The mechanism runs through behavior, especially following conservative activist accounts that keep shaping exposure even after the algorithm is off.

If you build recommendation systems, don’t ignore the asymmetry. “Turn it off” experiments can miss lasting effects because the system already changed user choices.

If you’re a user, take the three-step reset: default to Following, prune your follows, and mute with intent. Then share this with someone still asking “Is X biased?” like it’s a matter of opinion, because now it isn’t. For more AI research and analysis like this, visit BinaryVerseAI.

Does X (formerly Twitter) have a political bias?

Yes, the strongest current evidence says X’s algorithmic “For you” feed can produce a measurable conservative shift in users’ political attitudes. The key point is causality. In the Nature study, users assigned to the algorithmic feed changed in ways that users on a chronological feed did not. That means the debate is no longer just about vibes or user anecdotes. It is about measurable feed effects.

Did X become more conservative only because liberals left the platform?

No, that explanation is incomplete. User demographics and creator behavior may matter, but the Nature randomized trial was designed to separate platform composition from algorithmic effects. Users randomly assigned to the algorithmic feed shifted more conservative, while chronological-feed users did not show the same shift. That supports the claim that the feed system itself contributes to the outcome.

How does the X algorithm work to promote right-leaning content?

The X algorithm does not need an explicit rule like “boost conservatives” to create skewed outcomes. Recommendation systems rank content using engagement-related signals, network relationships, and interaction history. If outrage-heavy or activist-style political content performs better on those signals, the system can amplify it more often. The result can look like political bias even when the optimization target is engagement.

Why am I still seeing conservative posts after switching to the Following tab?

Because the algorithm can change who you follow, not just what you see in one session. The study found a persistent effect where exposure to the algorithmic feed increased follows of conservative political activist accounts. Once those accounts are in your following graph, your chronological feed can still contain that content. Switching tabs helps, but it does not undo the follow decisions already shaped by the feed.

How do you reset the X algorithm and fix a biased or rage-filled feed?

Start by switching to Following as your default viewing habit and stop engaging with rage-bait, including replies, quote-posts, and long dwell time. Then unfollow or mute accounts and topics that keep pulling your feed in that direction. Use muted words for recurring political triggers. Finally, rebuild your feed deliberately by following high-signal sources, lists, and topic-specific accounts you actually want to see. Resetting works best when behavior changes and account cleanup happen together.