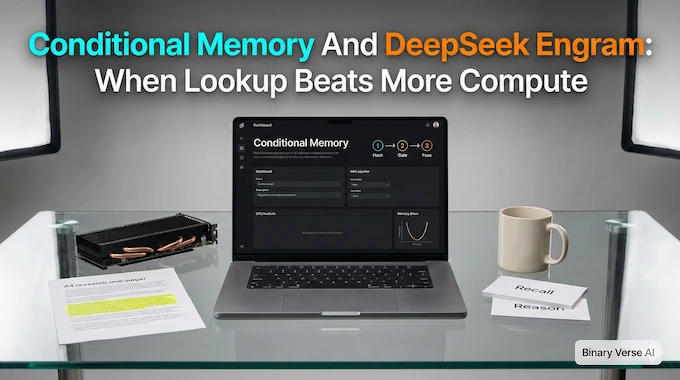

Conditional Memory And DeepSeek Engram: When Lookup Beats More Compute

Introduction

Bigger models keep winning, but the reason is not always “more intelligence.” Sometimes it is just less wasted work. The Engram paper makes an almost irritatingly sensible point. Transformers do two jobs at once: they remember stable patterns, and …