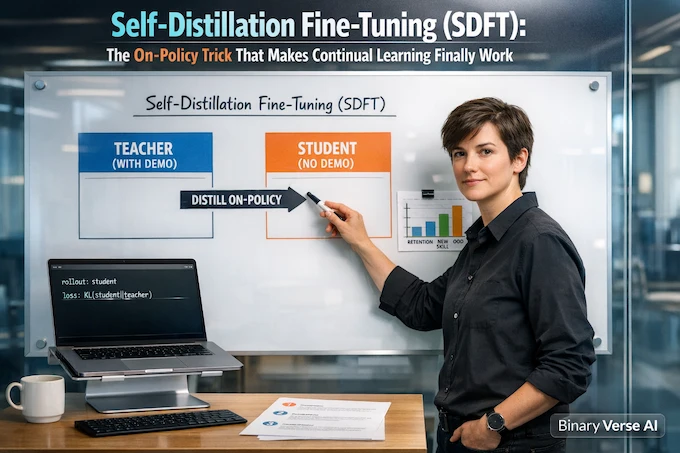

Self-Distillation Fine-Tuning (SDFT): The On-Policy Trick That Makes Continual Learning Finally Work

Introduction

Fine-tuning an LLM feels like doing surgery with oven mitts. You make one clean cut, the patient learns a shiny new skill, then you check the vitals and realize it forgot its own name. That is the default behavior of supervised …